Results for: "Healthcare"

Keyword Search 9 results

Stanford AI Predicts Disease Risk from a Single Night's Sleep

THE GIST: Stanford researchers developed an AI, SleepFM, that predicts disease risk by analyzing physiological signals from one night of sleep.

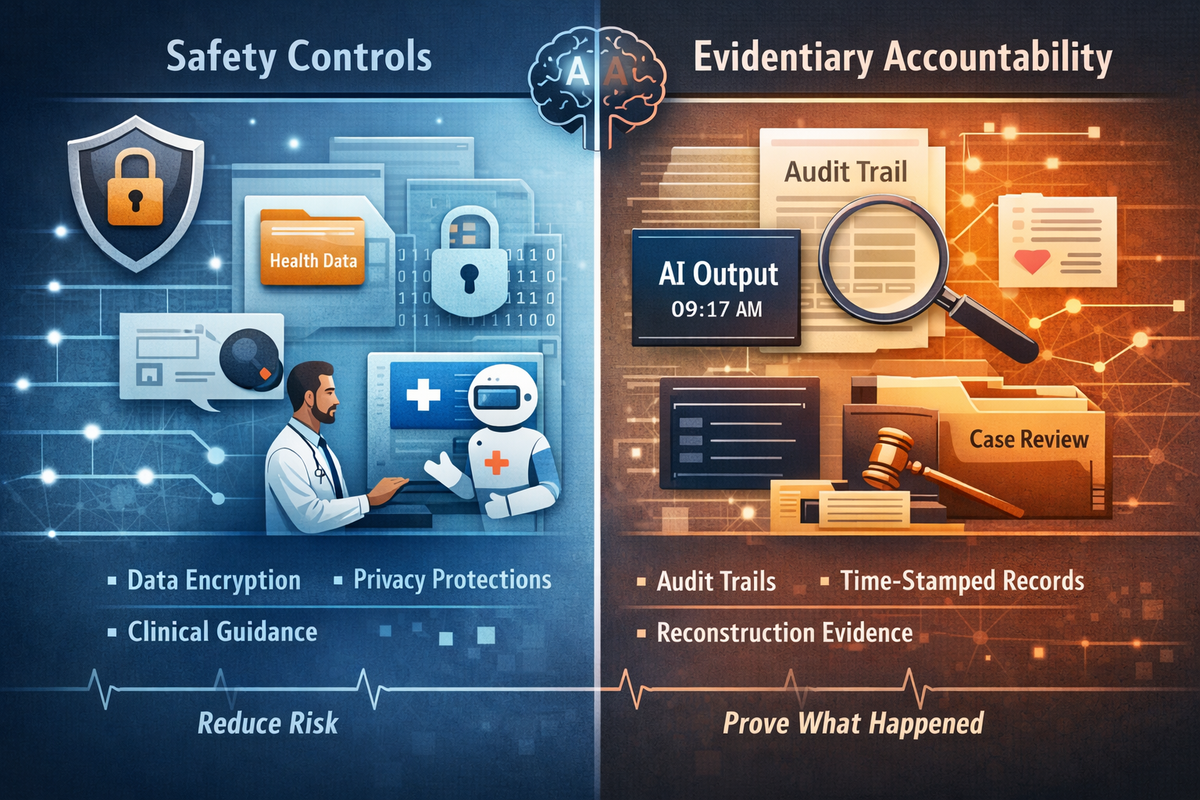

EB3F: Standardizing LLM Audits for Legal Admissibility

THE GIST: EB3F offers a framework to transform subjective LLM risk assessments into standardized, reproducible, and legally-admissible exhibits.

Ozlo Turns Sleepbuds into Sleep Data Platform

THE GIST: Ozlo is transforming its sleepbuds into a platform, partnering with Calm and acquiring a neurotech startup to expand its reach.

AI-Powered Telehealth Addresses Primary Care Shortage

THE GIST: AI-powered telehealth solutions are emerging to combat the growing shortage of primary care physicians, offering quicker access to medical consultations.

AI Incidents Often Stem from Evidence Failures, Not Model Flaws

THE GIST: AI incidents often escalate because institutions cannot reconstruct what their AI systems output, not because the models themselves failed.

Tiiny AI: Pocket-Sized AI Supercomputer Debuts at CES 2026

THE GIST: At CES 2026, Tiiny AI unveils a pocket-sized AI supercomputer that runs its processing on-device.

ChatGPT Health Prioritizes Safety, Accountability Still a Question

THE GIST: OpenAI's ChatGPT Health prioritizes user safety and privacy but doesn't fully address accountability concerns in healthcare applications.

Utah Pilot Program Allows AI to Autonomously Refill Prescriptions

THE GIST: Utah is piloting a program allowing AI to autonomously refill prescriptions, raising safety concerns among public advocates.

OpenAI Launches ChatGPT Health Amid Privacy Concerns

THE GIST: OpenAI introduces ChatGPT Health, a dedicated space for health-related conversations, while addressing privacy and accuracy concerns.