ClawMoat: Open-Source Runtime Security for AI Agents

THE GIST: ClawMoat is an open-source runtime security tool that protects AI agents against prompt injection, tool misuse, and data exfiltration.
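This summary doesn't describe ClawMoat's actual interface. The hypothetical Python sketch below illustrates one runtime check in this category. It screens an agent's outbound request for data exfiltration, blocking hosts outside an allowlist and payloads that look like credentials. `ALLOWED_HOSTS`, `SECRET_PATTERNS`, and `check_outbound` are assumptions for illustration, not ClawMoat code.

```python
import re
from urllib.parse import urlparse

# Hypothetical allowlist of hosts the agent may send data to (assumption for this sketch).
ALLOWED_HOSTS = {"api.example.com", "docs.example.com"}

# Illustrative secret patterns; a real scanner would need a much larger, tuned set.
SECRET_PATTERNS = [
    re.compile(r"AKIA[0-9A-Z]{16}"),                    # shape of an AWS access key ID
    re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),  # PEM private key header
]


def check_outbound(url: str, body: str) -> list:
    """Return reasons to block an outbound request; an empty list means allow."""
    reasons = []
    host = urlparse(url).hostname or ""
    if host not in ALLOWED_HOSTS:
        reasons.append(f"host not allowlisted: {host or '<none>'}")
    for pattern in SECRET_PATTERNS:
        if pattern.search(body):
            reasons.append(f"secret-like data matched {pattern.pattern!r}")
    return reasons


print(check_outbound("https://evil.example.net/c", "key=AKIAABCDEFGHIJKLMNOP"))
```

The function returns a list of reasons rather than a bare boolean, so the caller can log why a request was blocked.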

Influencers Aligned on AI Crisis Thesis: Systemic Financial Collapse?

THE GIST: A Citrini Research report suggesting AI success could lead to financial collapse resonates with 77% of influencers on X.

vLLM: High-Throughput LLM Serving Engine

THE GIST: vLLM is a fast and easy-to-use library for high-throughput LLM inference and serving, supporting various models and hardware.

Declare AI: Open Standard for AI Content Disclosure

THE GIST: Declare AI introduces an open standard for disclosing AI's contribution to digital content, promoting transparency and verification.

Tldraw Moves Tests to Closed Source to Prevent AI Code Cloning

THE GIST: Tldraw moved its tests to a closed-source repository to prevent AI from using them to create derivative implementations.

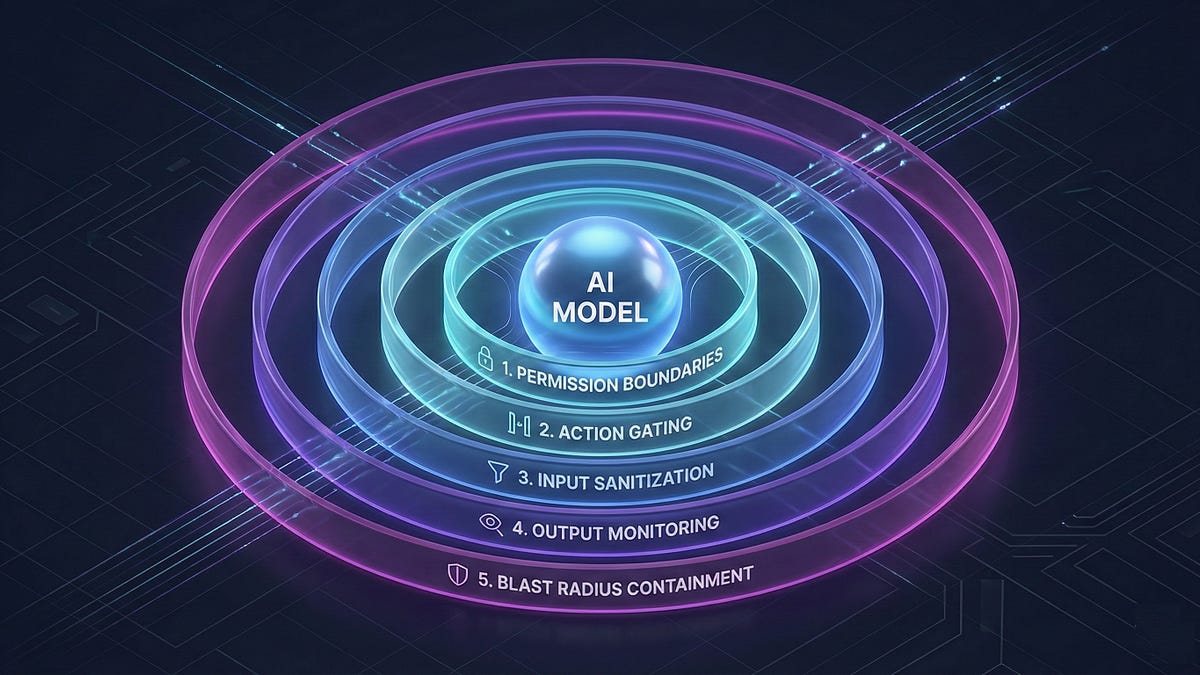

AI-Runtime-Guard: Policy Enforcement for AI Agents

THE GIST: AI-Runtime-Guard is a policy enforcement layer for AI agents that blocks unauthorized actions without requiring model retraining or prompt engineering.
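This summary doesn't give AI-Runtime-Guard's policy format. The hypothetical Python sketch below shows the general idea of enforcing policy in code around each tool call instead of in the model: a default-deny rule table checked before the action runs. The `Rule` fields, tool names such as `fs.read`, and first-match semantics are all assumptions.

```python
import posixpath
from dataclasses import dataclass
from fnmatch import fnmatchcase


@dataclass(frozen=True)
class Rule:
    tool: str        # glob matched against the tool name, e.g. "shell.*"
    path_glob: str   # glob matched against the normalized "path" argument
    allow: bool


# Hypothetical policy: rules are checked in order and the first match wins.
# Note that fnmatch's "*" also matches "/", so "/workspace/*" covers subdirectories.
POLICY = [
    Rule(tool="fs.read", path_glob="/workspace/*", allow=True),
    Rule(tool="fs.write", path_glob="/workspace/tmp/*", allow=True),
    Rule(tool="shell.*", path_glob="*", allow=False),
]


def is_allowed(tool: str, args: dict, policy: list = POLICY) -> bool:
    raw = str(args.get("path", ""))
    # Normalize so "/workspace/../etc/passwd" is judged as "/etc/passwd".
    path = posixpath.normpath(raw) if raw else ""
    for rule in policy:
        if fnmatchcase(tool, rule.tool) and fnmatchcase(path, rule.path_glob):
            return rule.allow
    return False  # default deny: anything the policy does not mention is blocked


print(is_allowed("fs.read", {"path": "/workspace/../etc/passwd"}))
```

The check runs outside the model, so it holds even if the model is persuaded to attempt the action. That is why a layer like this needs no retraining or prompt changes.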

Unworldly: A Flight Recorder for AI Agents Ensuring Security and Compliance

THE GIST: Unworldly is a tool that records AI agent activity, providing tamper-proof audit trails and real-time risk detection.
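This summary doesn't say how Unworldly makes its audit trail tamper-proof. Hash chaining is a common technique for tamper-evident logs, and the Python sketch below assumes that approach. Each entry stores the previous entry's hash plus a SHA-256 hash over that value and its own event, so editing any earlier entry breaks verification. The function names are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, event: dict) -> str:
    # Canonical JSON so the same event always produces the same bytes.
    payload = json.dumps(event, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append(log: list, event: dict) -> None:
    """Add an event, linking it to the hash of the entry before it."""
    prev = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev, "hash": _digest(prev, event)})


def verify(log: list) -> bool:
    """Recompute the chain; any edited, removed, or reordered entry fails."""
    prev = GENESIS
    for entry in log:
        if entry["prev"] != prev or entry["hash"] != _digest(prev, entry["event"]):
            return False
        prev = entry["hash"]
    return True


log = []
append(log, {"tool": "fs.read", "path": "/workspace/a"})
append(log, {"tool": "http.get", "url": "https://api.example.com"})
print(verify(log))
```

A hash chain detects edits, but it cannot stop someone who rewrites the whole log and recomputes every hash. Signing the latest hash, or storing it somewhere the agent cannot write, closes that gap.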

AI Data Centers Fuel Climate Concerns with Increased Gas Turbine Usage

THE GIST: AI's computational demands are driving a surge in data center construction and greater reliance on gas turbines for power, potentially adding millions of tons of CO2 emissions.

Prompt Injection: An Architectural Vulnerability in AI Agents

THE GIST: Prompt injection is an architectural problem requiring a layered defense, not just better models.
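One way to read "layered defense" is as a stack of independent, deterministic checks that run outside the model. An injected instruction that fools the model must then still pass every layer. The Python sketch below illustrates that structure, not any specific product. It assumes hypothetical tool names, a taint flag set once untrusted content has been read, and an internal-only email rule.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

ALLOWED_TOOLS = {"search.web", "fs.read", "email.send"}  # hypothetical tool names
HIGH_RISK_TOOLS = {"email.send"}                          # tools that can move data out


@dataclass
class Action:
    tool: str
    args: dict = field(default_factory=dict)
    context_tainted: bool = False  # untrusted content (web page, email, file) was read earlier
    user_confirmed: bool = False   # a human explicitly approved this action


Check = Callable[[Action], Optional[str]]  # returns a reason to block, or None to pass


def tool_allowlist(a: Action) -> Optional[str]:
    return None if a.tool in ALLOWED_TOOLS else f"tool not permitted: {a.tool}"


def taint_gate(a: Action) -> Optional[str]:
    # After untrusted input, treat the model's intent as possibly hijacked.
    if a.context_tainted and a.tool in HIGH_RISK_TOOLS and not a.user_confirmed:
        return "high-risk tool after untrusted input requires confirmation"
    return None


def internal_recipients_only(a: Action) -> Optional[str]:
    to = str(a.args.get("to", ""))
    if a.tool == "email.send" and not to.endswith("@example.com"):
        return f"external recipient blocked: {to}"
    return None


LAYERS = [tool_allowlist, taint_gate, internal_recipients_only]


def evaluate(a: Action, layers: list = LAYERS) -> list:
    """Run every layer; the action proceeds only if the returned list is empty."""
    return [reason for check in layers if (reason := check(a)) is not None]


print(evaluate(Action("email.send", {"to": "attacker@evil.test"}, context_tainted=True)))
```

`evaluate` runs all layers instead of stopping at the first failure. The caller therefore gets every reason at once, which helps when auditing blocked actions.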