Results for: "research"

Keyword Search 9 results

SLIM: Token-Efficient Data Format for LLMs

THE GIST: SLIM reduces token usage in LLM applications by 40-50% compared to JSON.

Quantifying AI Manipulation: A Formal Metric for User Intent

THE GIST: An independent researcher proposes 'State Discrepancy,' a metric to quantify how AI systems alter user intent, aiming to replace vague notions of manipulation.

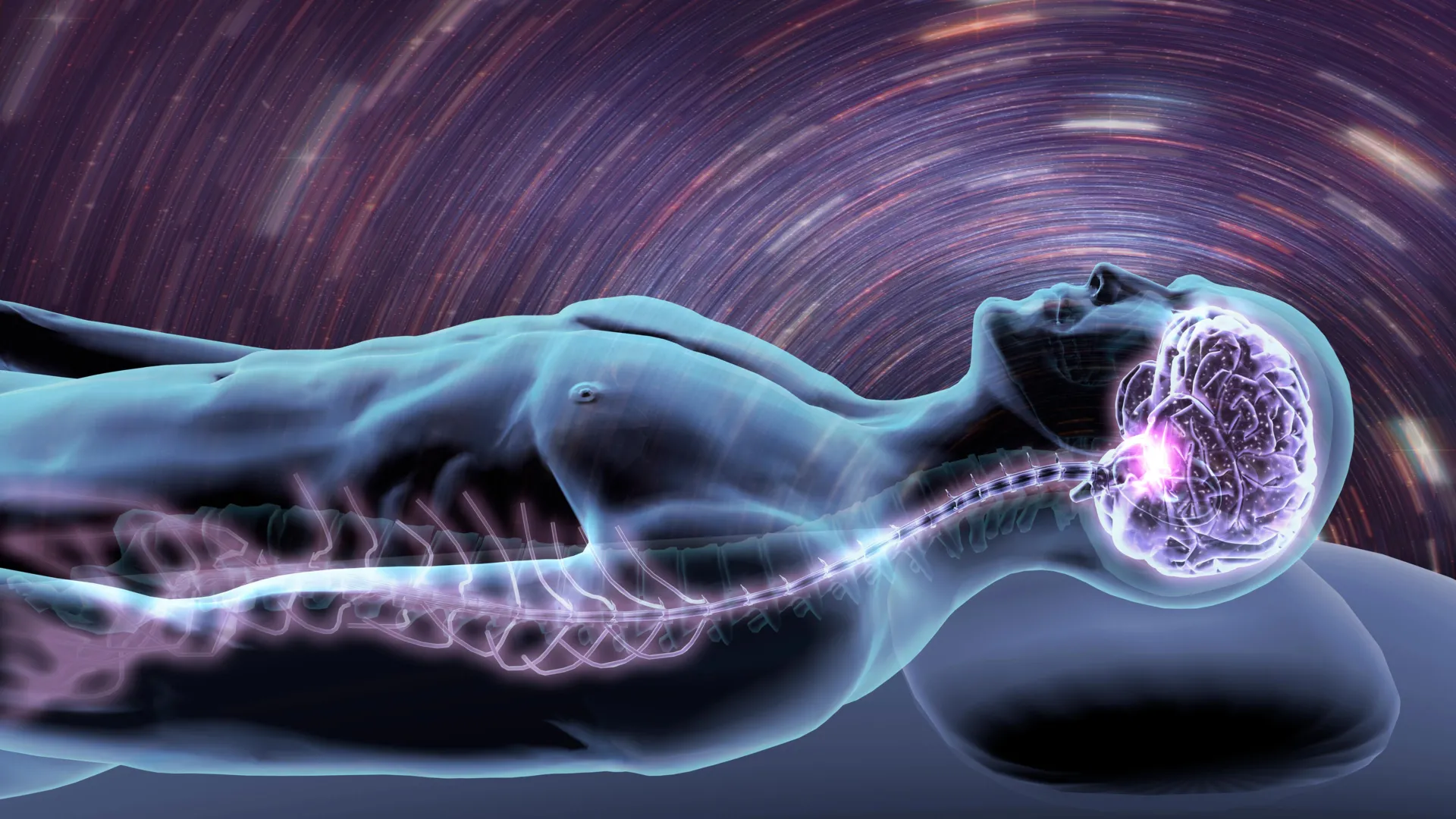

Stanford AI Predicts Disease Risk from a Single Night's Sleep

THE GIST: Stanford researchers developed an AI, SleepFM, that predicts disease risk by analyzing physiological signals from one night of sleep.

AI Hype Cycle Leads to Useless Features

THE GIST: The tech industry's AI hype is producing useless features due to a lack of UX research and product validation.

Purdue University Adds AI Learning Requirement for Students

THE GIST: Purdue University will require incoming students to learn about AI, starting in 2026.

The Danger of Anthropomorphizing AI: A Call for Precise Language

THE GIST: Anthropomorphic language used to describe AI systems is misleading and can lead to misplaced trust and a lack of accountability.

Study Reveals LLMs 'Memorize' Training Data, Challenging AI Industry Claims

THE GIST: Research shows that LLMs store and reproduce significant portions of their training data, contradicting claims that they 'learn'.

AI's UX Bottleneck: Oil on a Horse

THE GIST: AI productivity is currently bottlenecked by poor UX rather than model capabilities, which keeps AI's potential from being harnessed effectively.

AI Fuels Online Trust 'Collapse,' Experts Warn

THE GIST: AI-generated misinformation intensifies the erosion of online trust, blurring the line between real and fake content.