VC Creates AI Scott Adams Despite Family Objections

THE GIST: An AI-focused venture capitalist created a posthumous AI replica of Scott Adams, citing Adams' expressed wish to be memorialized via AI.

AI-Generated Images Fuel Misinformation During Mexico Cartel Crisis

THE GIST: AI-generated images spread misinformation during a Mexico cartel crisis, highlighting the ineffectiveness of current industry safeguards.

Fine-Tuning LLMs: A Deep Dive for Enterprise Applications

THE GIST: Fine-tuning LLMs is crucial for adapting general-purpose models to specific enterprise needs, enhancing precision and compliance.

AI Impersonation Raises Questions About Identity and Understanding

THE GIST: An engineer's experience replacing his AI with GPT reveals the limitations of AI in replicating human-like understanding and the nuances of identity.

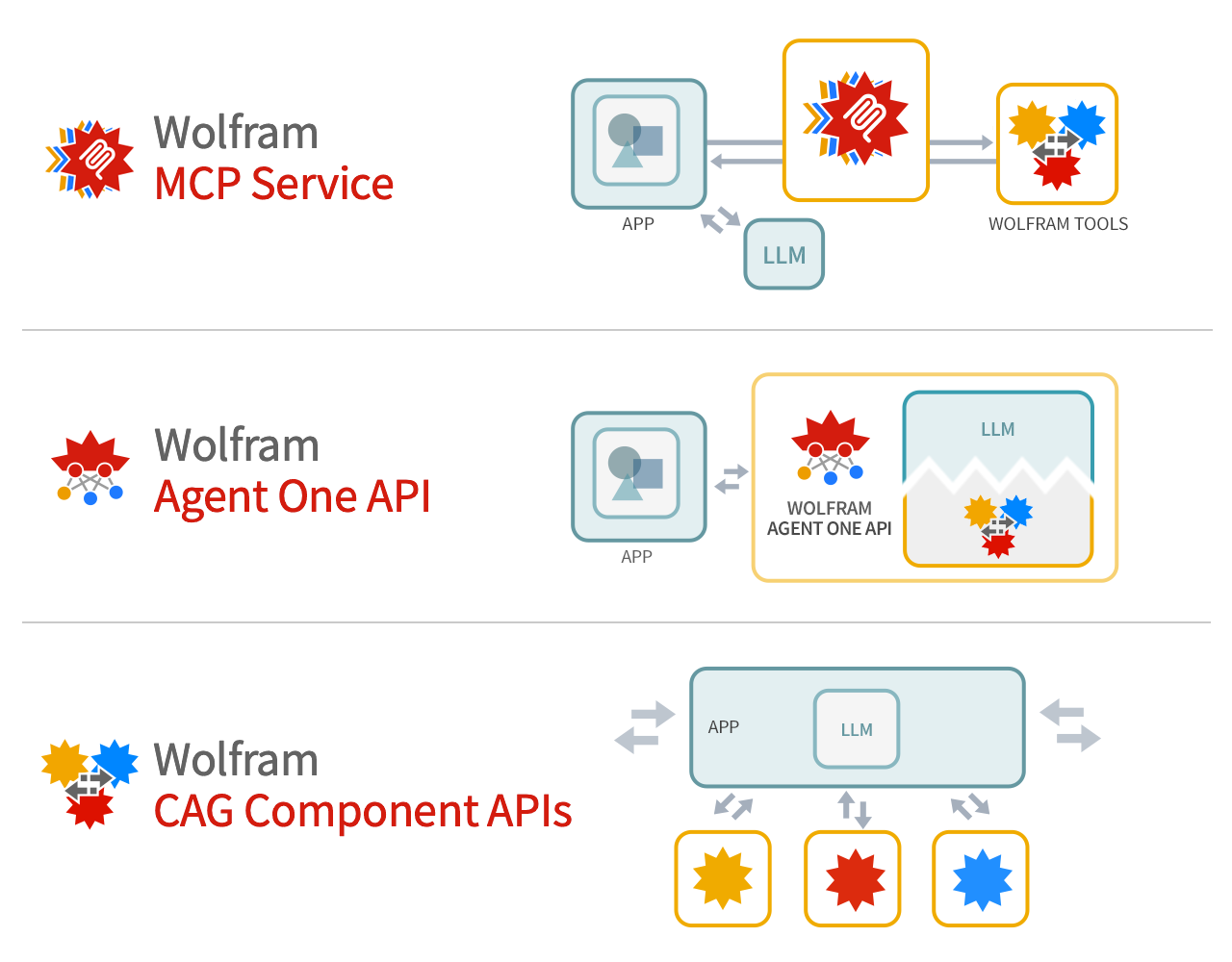

Wolfram Tech as Foundation Tool for LLM Systems

THE GIST: Wolfram argues its technology provides deep computation and precise knowledge to supplement LLM foundation models.

Coordinating Adversarial AI Agents for Enhanced Reasoning

THE GIST: Using independent AI agents for adversarial reasoning enhances output quality by preventing context contamination and promoting structural disagreement.

Anthropic Accuses Chinese Firms of Illicitly Training AI on Claude

THE GIST: Anthropic alleges DeepSeek, MiniMax, and Moonshot illicitly used Claude to train their AI, raising security concerns.

Anthropic Accuses Chinese AI Firms of Data Mining Claude

THE GIST: Anthropic alleges three Chinese AI companies used over 24,000 fake accounts to extract data from its Claude model.

Guide Labs Debuts Interpretable LLM: Steerling-8B

THE GIST: Guide Labs open-sources Steerling-8B, an 8-billion-parameter LLM with a new architecture designed for easy interpretability.