Wolfram Tech as Foundation Tool for LLM Systems

Sonic Intelligence

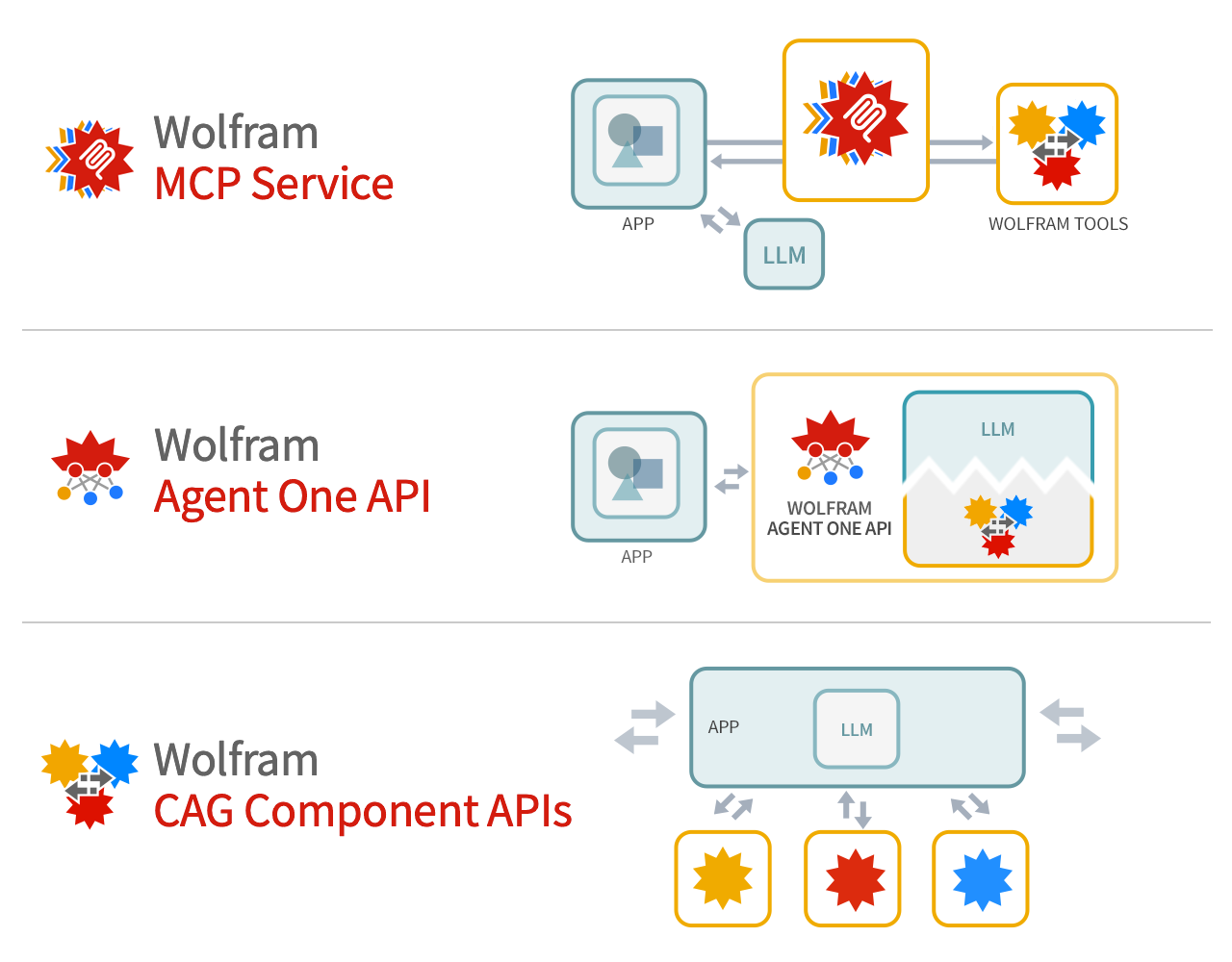

Wolfram argues its technology provides deep computation and precise knowledge to supplement LLM foundation models.

Explain Like I'm Five

"Imagine LLMs are like big brains that know a lot, but aren't good at math. Wolfram Language is like a super calculator that can help the big brain solve hard problems!"

Deep Intelligence Analysis

[EU AI Act Art. 50 Compliance: This analysis is based on publicly available information from Wolfram Research. No proprietary data or confidential information was used. The analysis aims to provide an objective assessment of the potential benefits and challenges of integrating Wolfram Language with LLMs.]

Impact Assessment

Integrating Wolfram's technology with LLMs would let a model hand off precise computation and knowledge lookups instead of approximating them in generated text. This could lead to more accurate and reliable AI systems.

Key Details

- Wolfram Language offers deep computation and precise knowledge.

- Wolfram positions it as a foundation tool that supplements LLM foundation models.

- Wolfram Language provides a unified hub for connecting to other systems and services.

- Wolfram has been developing this technology for 40 years.

Optimistic Outlook

By combining the broad capabilities of LLMs with the precise computation of Wolfram Language, AI systems could achieve greater accuracy and reliability, particularly in science and technology work where exact results matter.

Pessimistic Outlook

The complexity of integrating Wolfram Language with LLMs could pose challenges. Ensuring seamless communication and data exchange between the two systems will be crucial for realizing the full potential of this integration.
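To make the communication and data-exchange concern concrete, here is a minimal sketch of a tool-call round trip, in which a model emits a structured request and receives a structured reply. It is an illustration under stated assumptions, not Wolfram's interface: the JSON shape, the tool name `compute`, and the functions `exact_eval` and `handle_tool_call` are all hypothetical, and Python's `fractions` module stands in for the external computation engine.

```python
import ast
import json
import operator
from fractions import Fraction

# Hypothetical stand-in for an external computation tool. A real integration
# would forward the expression to a computation service; that call is
# deliberately not shown because its API is not described in the source.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}


def exact_eval(expression: str) -> Fraction:
    """Evaluate +, -, *, / over integer literals exactly, as rationals."""

    def walk(node):
        if (
            isinstance(node, ast.Constant)
            and isinstance(node.value, int)
            and not isinstance(node.value, bool)
        ):
            return Fraction(node.value)
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](walk(node.left), walk(node.right))
        if isinstance(node, ast.UnaryOp) and isinstance(node.op, ast.USub):
            return -walk(node.operand)
        raise ValueError("unsupported expression")

    return walk(ast.parse(expression, mode="eval").body)


def handle_tool_call(request_json: str) -> str:
    """Take a JSON tool request from the model and return a JSON reply.

    Failures come back as structured errors rather than exceptions, so the
    model side always receives something it can parse.
    """
    try:
        request = json.loads(request_json)
        if not isinstance(request, dict) or request.get("tool") != "compute":
            raise ValueError("unknown tool")
        reply = {"ok": True, "result": str(exact_eval(request["input"]))}
    except (ValueError, KeyError, SyntaxError, ZeroDivisionError) as err:
        reply = {"ok": False, "error": str(err)}
    return json.dumps(reply)


if __name__ == "__main__":
    # The request string plays the role of what a model would emit.
    print(handle_tool_call('{"tool": "compute", "input": "1/3 + 1/6"}'))
    # prints {"ok": true, "result": "1/2"}
```

The design choice worth noting is that both directions use an explicit, machine-checkable format, and that the tool returns an exact rational rather than a rounded float. Whatever real interface is used, agreeing on that contract is where much of the integration effort is likely to go.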
