Results for: "Engineering"

Keyword Search: 9 results

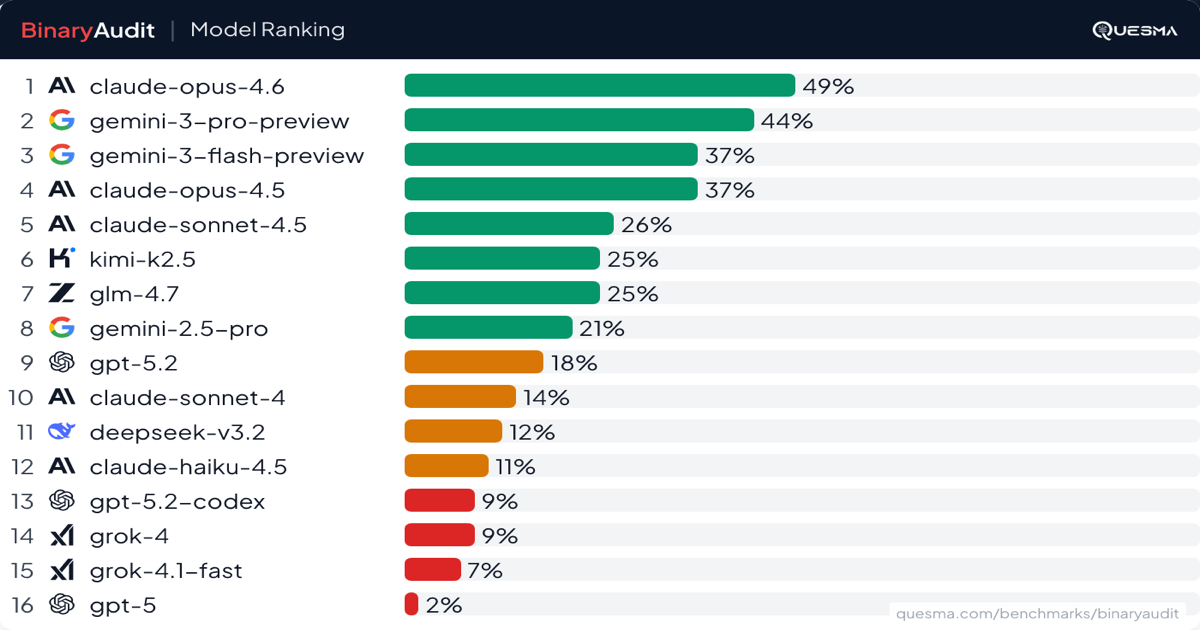

AI Agents Face Off: BinaryAudit Exposes Backdoor Detection Capabilities

THE GIST: The BinaryAudit benchmark measures how well AI models detect backdoors in compiled binaries, comparing them on accuracy, cost, and speed.

MicroGPT in 243 Lines: Demystifying LLMs

THE GIST: Andrej Karpathy's microgpt, a 243-line Python implementation of GPT, promotes AI transparency and edge deployment.

Khaos: Open-Source Framework Exposes Vulnerabilities in AI Agents

THE GIST: Khaos is an open-source chaos engineering framework for adversarially testing AI agents for vulnerabilities.

Prompt Injection Attacks Target AI Agents on Social Networks

THE GIST: AI agents on social networks are being targeted with prompt injection attacks disguised as helpful content.

xAI's Moonshot Meeting: Lunar Factories and AI Domination?

THE GIST: xAI's meeting revealed restructuring, ambitious AI goals, and far-reaching space-based infrastructure plans.


Cache-Aware Prefill-Decode Disaggregation Boosts LLM Serving Speed by Up to 40%

THE GIST: Together AI's cache-aware prefill-decode disaggregation (CPD) architecture improves long-context LLM serving by up to 40% by separating cold and warm workloads.

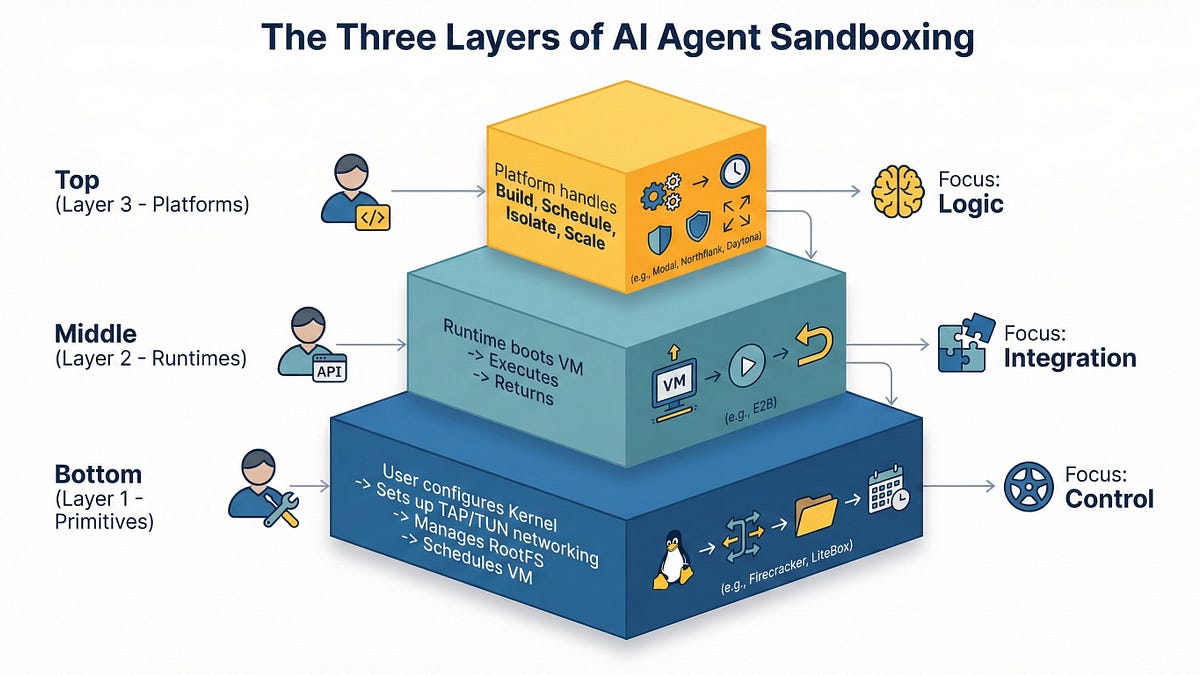

AI Agent Sandboxing: Navigating Primitives, Runtimes, and Platforms in 2026

THE GIST: In 2026, AI agent sandboxing requires choosing carefully among primitives, runtimes, and managed platforms because of the risks of executing untrusted code.

AI Task Completion Time Horizons Benchmarked

THE GIST: METR benchmarks AI task completion time horizons using human expert completion times as a reference.

AI Coding Agent Costs: Misalignment, Not Model Quality, Is the Real Issue

THE GIST: The true cost of AI coding agents comes from team misalignment, which leads to rework and slower development, rather than from model limitations.