Results for: "Strategy"

Keyword search: 9 results

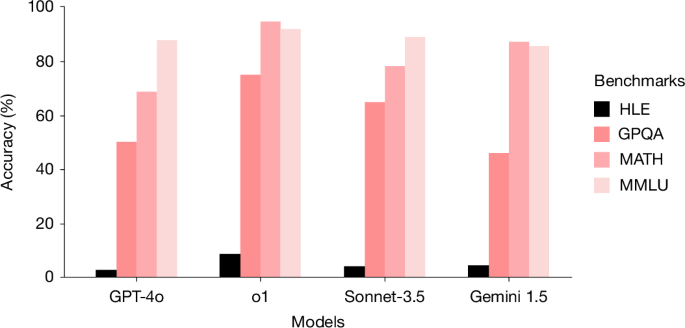

Humanity's Last Exam (HLE) Benchmark Challenges Advanced LLMs

THE GIST: HLE, a new benchmark of 2,500 expert-level academic questions, is designed to evaluate and challenge the capabilities of advanced large language models (LLMs).

Caddy Plugin Charges AI Crawlers USDC for Website Access

THE GIST: A Caddy middleware plugin enables websites to charge AI crawlers in USDC stablecoin for access to content.

AI Sandbox: Run Coding Agents in Disposable Linux Containers on Your Homelab

THE GIST: Pixels creates disposable, sandboxed Linux containers for AI coding agents, managed via TrueNAS and Incus.

Critical AI Architectural Decisions for Product Success

THE GIST: Poor AI architecture, rather than the model itself, often causes product failure by magnifying design flaws and driving runaway costs.

FAR: AI Agents Gain Context via Persistent .meta Files

THE GIST: FAR enhances AI coding agents by generating persistent '.meta' files containing extracted content from binary files, making previously opaque data readable.

AI Reshapes Go Strategy, Blurring Human and Machine Ingenuity

THE GIST: AI's dominance in Go has revolutionized training and strategy, challenging traditional principles and raising questions about creativity.

MIT Study Exposes Security Risks in AI Agents

THE GIST: An MIT study reveals significant security flaws and a lack of transparency in agentic AI systems, highlighting the need for developers to take responsibility.

LLM App Design: Prioritizing Model Swaps

THE GIST: Designing LLM applications for easy model swapping requires a seam-driven architecture with narrow interfaces.
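To illustrate what a "narrow interface" seam can look like, here is a minimal Python sketch. It assumes that a single text-completion method is the only capability the application needs. The names `ChatModel`, `Completion`, `EchoModel`, `UpperModel` and `summarize` are hypothetical and do not come from the article. The two adapters are toy stand-ins for real provider clients.

```python
from dataclasses import dataclass
from typing import Dict, Protocol


@dataclass(frozen=True)
class Completion:
    """Provider-neutral result type; app code never sees vendor payloads."""
    text: str
    model: str


class ChatModel(Protocol):
    """The narrow seam: one method with plain-Python inputs and outputs."""
    def complete(self, prompt: str) -> Completion: ...


class EchoModel:
    """Hypothetical adapter standing in for a real provider client."""
    def complete(self, prompt: str) -> Completion:
        return Completion(text=prompt, model="echo")


class UpperModel:
    """A second hypothetical adapter, swapped in without touching app code."""
    def complete(self, prompt: str) -> Completion:
        return Completion(text=prompt.upper(), model="upper")


def summarize(model: ChatModel, document: str) -> str:
    # Application logic depends only on the ChatModel seam, not on any vendor SDK.
    result = model.complete(f"Summarize: {document}")
    return f"[{result.model}] {result.text}"


# Swapping models becomes a configuration choice: pick an adapter by name.
MODELS: Dict[str, ChatModel] = {"echo": EchoModel(), "upper": UpperModel()}

if __name__ == "__main__":
    for name in ("echo", "upper"):
        print(summarize(MODELS[name], "seams keep models swappable"))
```

Because `summarize` only sees the one-method `ChatModel` protocol, replacing a provider means writing one new adapter class. Nothing in the calling code has to change.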

Firefox's AI Kill Switch: A Shift in Responsibility?

THE GIST: Mozilla's AI kill switch in Firefox shifts the ethical burden of AI onto the user.