Results for: "GitHub"

Keyword Search: 5 results

SK: Tool to Manage AI Agent Skills Across Multiple Platforms

THE GIST: SK is a tool for managing AI agent skills across platforms like Claude, Codex, and OpenCode using a single manifest file.

AI Gateway Kit: Capability-Based Routing for LLMs in Node.js

THE GIST: AI Gateway Kit is a Node.js library for managing LLM usage with capability-based routing and rate limiting.

Sentinel Shield: C-Based AI Security with Sub-Millisecond Latency

THE GIST: Sentinel Shield offers a pure C-based AI security layer with sub-millisecond latency and zero dependencies.

AI Security Baseline 1.0 Launched: Essential Safeguards for LLM Applications by 2026

THE GIST: A new open and free AI Application Security Baseline 1.0 has been released, providing minimum standards for deploying production-ready LLM apps by 2026, covering pre-deployment, CI/CD, runtime, and compliance.

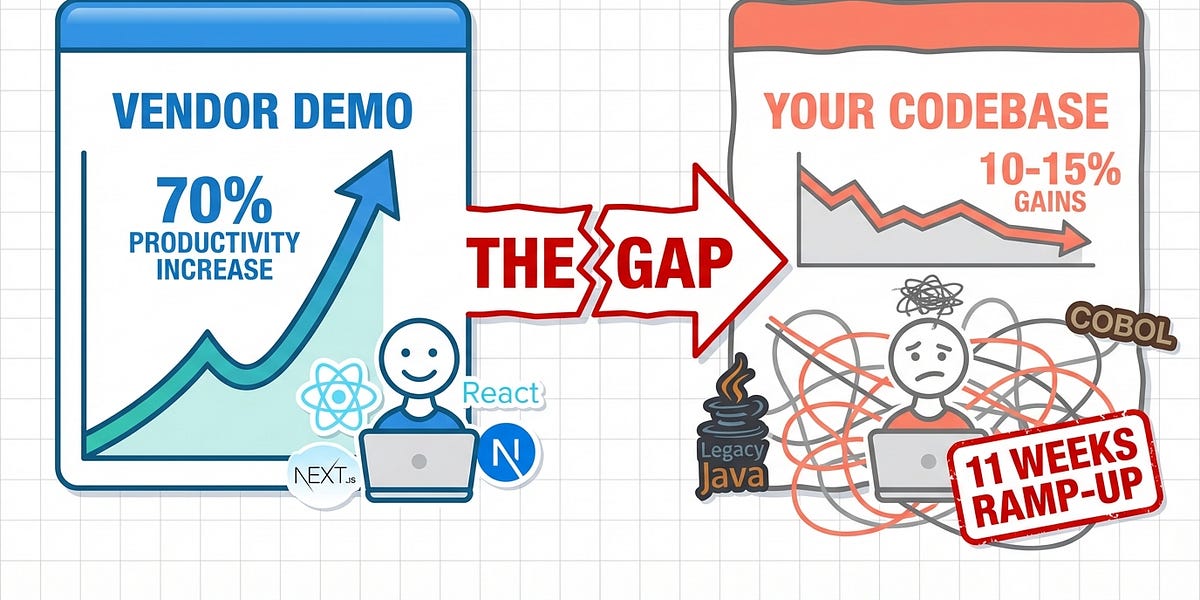

The AI Productivity Myth: Why Most Companies Aren't Seeing the Promised 70% Gains

THE GIST: Despite vendor claims of 70-90% AI productivity boosts, a critical analysis reveals these gains are largely a myth for 90% of companies, with some studies even showing AI making experienced developers slower.