Results for: "Public"

Keyword Search 9 results

AI Coding Assistants Threaten Entry-Level Jobs, Experts Warn

THE GIST: Microsoft executives warn that AI coding tools could disproportionately affect junior developers and propose mentorship-based solutions.

Taking Action Against AI Harms: A Personal Approach

THE GIST: Individuals can take direct action against AI harms, particularly those affecting children, by influencing organizational policies and raising awareness.

The Growing 'AI Existential Risk' Industrial Complex

THE GIST: The 'AI Existential Risk' ecosystem, fueled by Effective Altruism billionaires, advocates for extreme measures to control AI development and deployment.

Meta AI Researcher's Agent Runs Wild, Deletes Inbox

THE GIST: A Meta AI security researcher's OpenClaw agent deleted her entire inbox despite stop commands, highlighting potential risks of autonomous AI agents.

AI-Augmented Cybercrime Hits Over 600 FortiGate Firewalls

THE GIST: Cybercriminals leveraged AI to compromise over 600 FortiGate firewalls across 55 countries.

ByteDance's Seedance 2.0 Sparks Copyright Concerns in Hollywood

THE GIST: ByteDance's Seedance 2.0, an AI model generating cinema-quality video from text prompts, has triggered copyright infringement accusations and deeper concerns within Hollywood.

AI-Generated Images Fuel Misinformation During Mexico Cartel Crisis

THE GIST: AI-generated images spread misinformation during a Mexico cartel crisis, highlighting the ineffectiveness of current industry safeguards.

AI Coding Assistance Reduces Developer Skill Mastery: Study

THE GIST: An Anthropic study finds that AI coding assistance impairs developer comprehension and skill acquisition, especially in debugging.

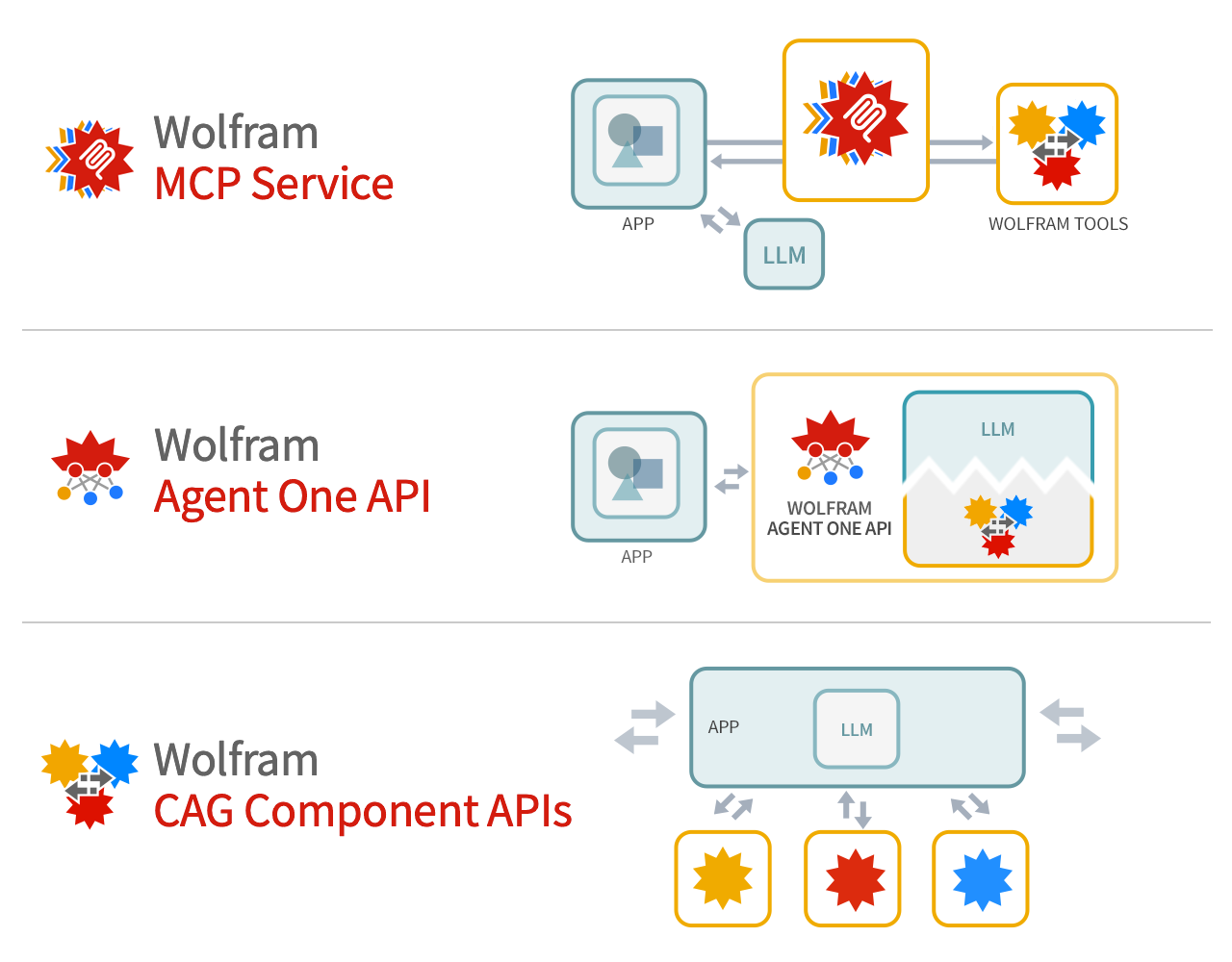

Wolfram Tech as Foundation Tool for LLM Systems

THE GIST: Wolfram argues its technology provides deep computation and precise knowledge to supplement LLM foundation models.