Results for: "Engine"

Keyword Search: 9 results

Goldman Sachs: AI Investment Had 'Basically Zero' Impact on 2025 US Economic Growth

THE GIST: Goldman Sachs reports AI investment had negligible impact on 2025 US GDP growth due to imported equipment and measurement difficulties.

Boardroom MCP: AI Governance Engine Offloads Decisions to Multi-Advisor System

THE GIST: Boardroom MCP offloads AI agent decisions to a multi-advisor system for nuanced judgment and risk assessment.

Coordinating Adversarial AI Agents for Enhanced Reasoning

THE GIST: Using independent AI agents for adversarial reasoning enhances output quality by preventing context contamination and promoting structural disagreement.

AI-Generated Images Fuel Misinformation During Mexico Cartel Crisis

THE GIST: AI-generated images spread misinformation during a Mexico cartel crisis, highlighting the ineffectiveness of current industry safeguards.

AI Coding Assistance Reduces Developer Skill Mastery: Study

THE GIST: Anthropic study reveals AI coding assistance negatively impacts developer comprehension and skill acquisition, especially in debugging.

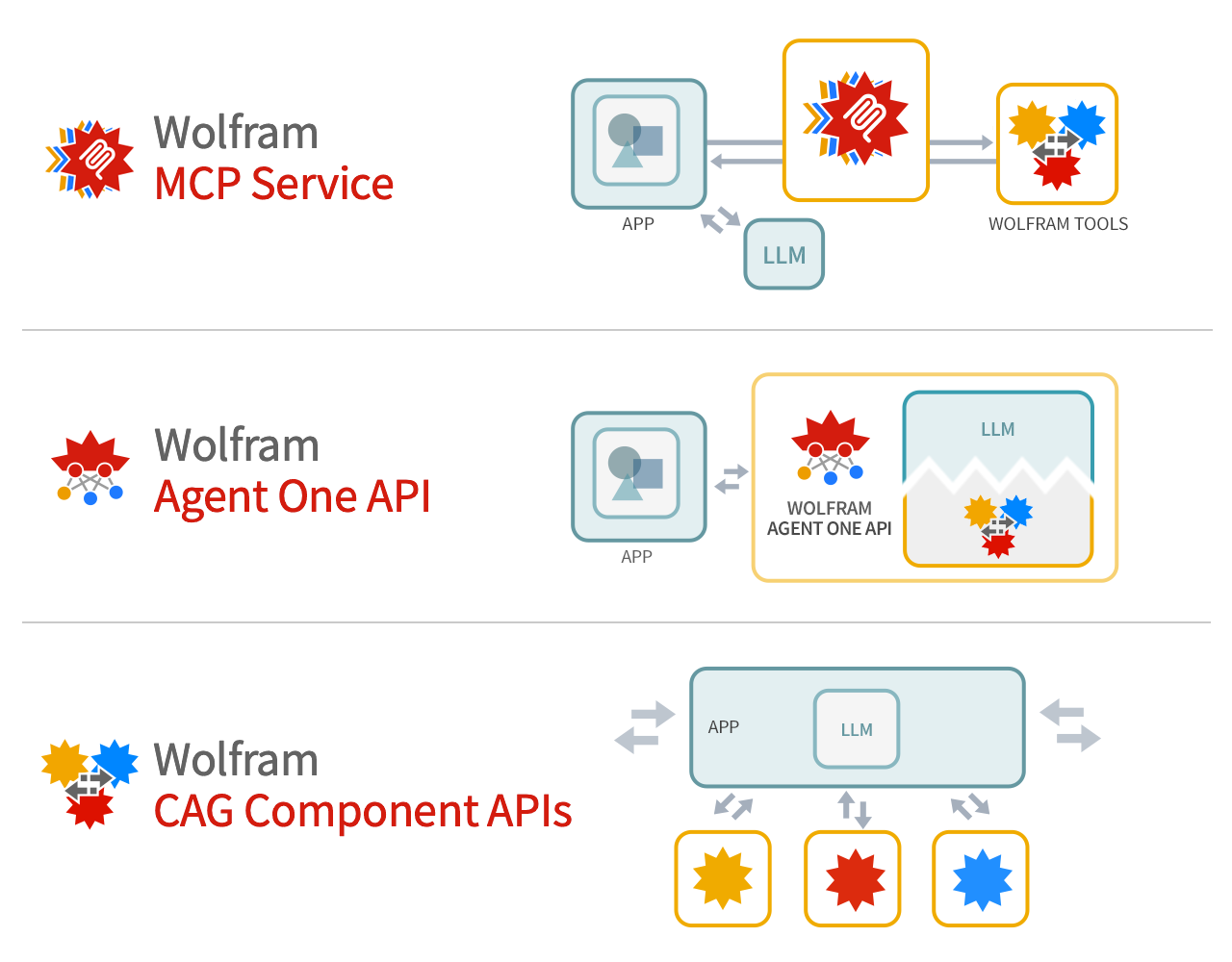

Wolfram Tech as Foundation Tool for LLM Systems

THE GIST: Wolfram argues its technology provides deep computation and precise knowledge to supplement LLM foundation models.

Anthropic Accuses Chinese Firms of Illicitly Training AI on Claude

THE GIST: Anthropic alleges DeepSeek, MiniMax, and Moonshot illicitly used Claude to train their AI, raising security concerns.

Anthropic Accuses Chinese AI Firms of Data Mining Claude

THE GIST: Anthropic alleges three Chinese AI companies used over 24,000 fake accounts to extract data from its Claude model.

AI Researchers' Resignations, Bots Hiring Humans, and Evie Magazine's Influence

THE GIST: Wired's Uncanny Valley podcast discusses AI safety concerns, the RentAHuman platform, and the cultural influence of Evie Magazine.