Results for: "Engine"

Keyword Search: 9 results

Claw Drive: Open-Source AI File Manager Auto-Organizes Your Files

THE GIST: Claw Drive is an open-source AI file manager that automatically categorizes, tags, and deduplicates files, integrating with Google Drive for sync and security.

Taalas ASIC Chip: Llama 3.1 Inference at 17,000 Tokens/Second

THE GIST: Taalas' ASIC chip runs Llama 3.1 at 17,000 tokens/second, claiming 10x cost and energy efficiency over GPUs by hardwiring model weights.

AI Coding Bot Causes AWS Outages, Raising Concerns

THE GIST: Amazon Web Services suffered outages after its AI coding tool, Kiro, autonomously deleted and recreated environments, raising concerns about AI's reliability.

InferShield: Open-Source Security Proxy for LLM Inference

THE GIST: InferShield is an open-source security proxy for LLM inference, providing real-time threat detection, policy enforcement, and audit trails without code changes.

Sensei: Open-Source Linter Automates AI Agent Skill Improvement

THE GIST: Sensei is an open-source linter that automatically improves AI agent skill compliance, preventing skill collisions and token bloat.

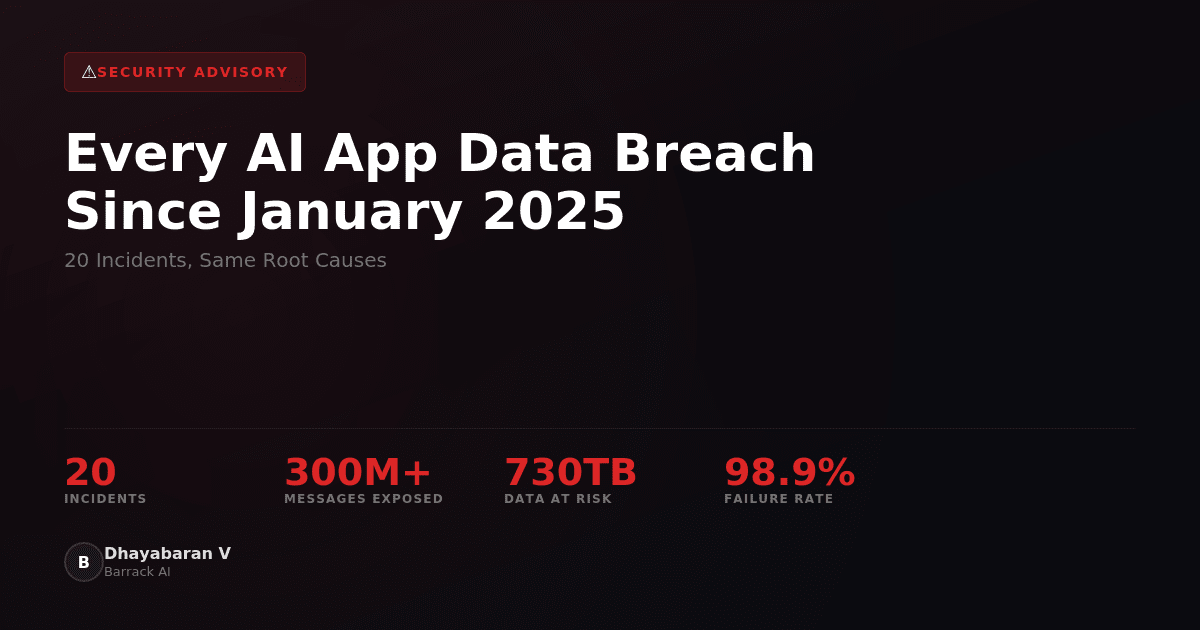

AI App Data Breaches Expose Millions of User Records Due to Preventable Errors

THE GIST: Over 20 AI app data breaches since January 2025 exposed millions of user records due to misconfigured databases, missing security measures, and hardcoded API keys.

Raypher: eBPF-Based Runtime Security for AI Agents

THE GIST: Raypher is an eBPF-based security layer that provides zero-latency runtime execution control for autonomous AI agents, operating offline at the kernel level.

Hmem: Persistent Hierarchical Memory for AI Coding Agents

THE GIST: Hmem is an MCP server providing AI coding agents with persistent, hierarchical memory stored in a local SQLite file, portable across tools and machines.

Microsoft's Gaming CEO Pledges Quality over 'AI Slop'

THE GIST: New Microsoft Gaming CEO Asha Sharma commits to prioritizing human-crafted art and innovative technology over flooding the gaming ecosystem with low-quality AI content.