AI App Data Breaches Expose Millions of User Records Due to Preventable Errors

Sonic Intelligence

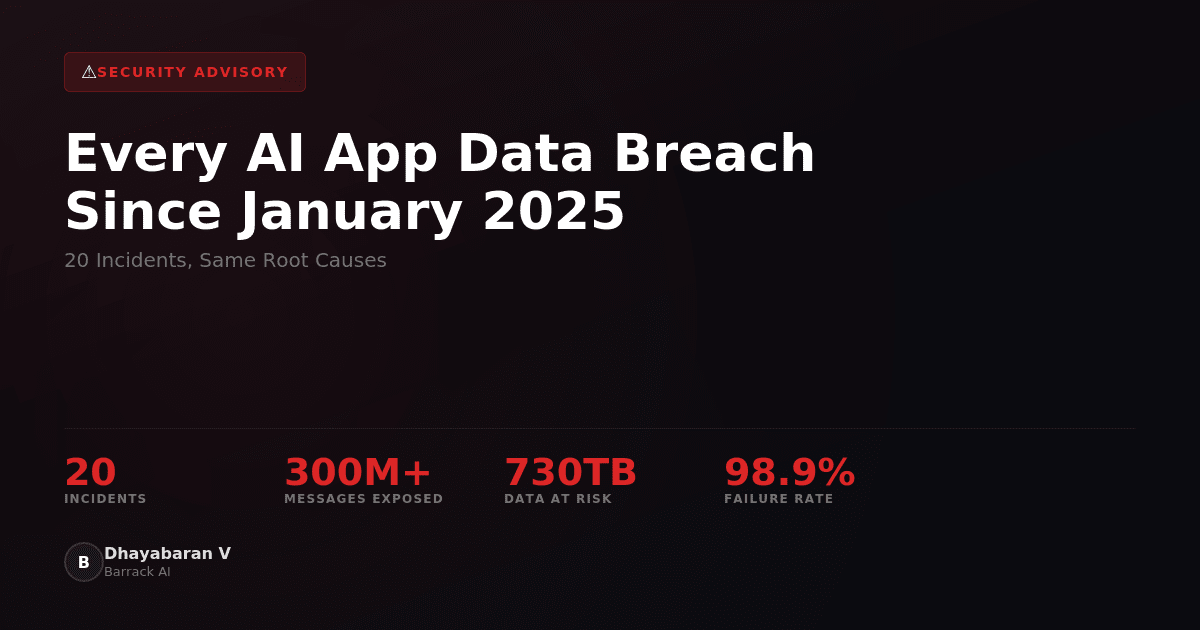

Twenty documented AI app data breaches between January 2025 and February 2026 exposed millions of user records. The causes were preventable: misconfigured databases, missing access controls such as Supabase Row Level Security, and hardcoded API keys.

Explain Like I'm Five

"Imagine building a clubhouse but forgetting to lock the door. These AI apps forgot to lock their doors, so bad guys could sneak in and steal everyone's information!"

Deep Intelligence Analysis

The root causes here are configuration errors rather than novel attacks, so the fixes are well understood. Developers should build security in from the outset: lock down database access rules before launch, enable Row Level Security on Supabase tables that hold user data, and keep API keys out of source code. Regular security audits and penetration tests should catch the misconfigurations that slip through. Beyond individual teams, developers, researchers, and policymakers need to share what they find so that the same errors stop recurring across the AI ecosystem.
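Hardcoded API keys, one of the root causes named in this report, are the kind of issue an automated audit step can flag before release. The following is a minimal Python sketch, not a production scanner. The function name `find_hardcoded_secrets` and the three patterns are illustrative assumptions; the key formats shown are commonly cited ones and do not come from the source reporting.

```python
import re

# Illustrative patterns only (assumptions, not an exhaustive rule set).
PATTERNS = {
    # Commonly cited shape of Google-style API keys: "AIza" + 35 characters.
    "google_api_key": re.compile(r"AIza[0-9A-Za-z_\-]{35}"),
    # Commonly cited shape of AWS access key IDs: "AKIA" + 16 characters.
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    # A name like api_key/secret/token assigned a long quoted literal.
    "generic_assignment": re.compile(
        r"\b(?:api[_-]?key|secret|token)\b\s*[:=]\s*[\"']([^\"']{16,})[\"']",
        re.IGNORECASE,
    ),
}


def find_hardcoded_secrets(source: str) -> list[tuple[int, str]]:
    """Return (line_number, pattern_name) for each suspicious line."""
    findings = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        for name, pattern in PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, name))
    return findings
```

A line that reads the key from the environment, such as `key = os.environ["API_KEY"]`, is not flagged, because no long literal is assigned. Pattern matching of this kind produces false positives and misses unfamiliar key formats, so it complements a manual audit rather than replacing one.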

*Transparency Footnote: This analysis was conducted by an AI Lead Intelligence Strategist at DailyAIWire.news, leveraging Gemini 2.5 Flash. The assessment is based solely on the provided source content and adheres to EU AI Act Article 50 compliance standards.*

Impact Assessment

These breaches highlight a systemic security crisis in the AI app ecosystem, where the rush to market has overshadowed basic security practices. The exposure of sensitive user data can have severe consequences for individuals and organizations.

Key Details

- 20 documented security incidents occurred between January 2025 and February 2026.

- Root causes include misconfigured Firebase databases and missing Supabase Row Level Security.

- CovertLabs, Cybernews, and Escape Research independently identified systemic security issues.

- One breach, at McHire (McDonald's), exposed the data of 64 million applicants.
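A misconfigured Firebase database, the first root cause listed above, often means security rules that allow unauthenticated reads. The sketch below shows one way a team might check its own deployment. It assumes the Firebase Realtime Database REST interface, where an unauthenticated GET on `/.json` succeeds only if the rules permit public reads, and it uses `shallow=true` to avoid downloading the contents. The function names are hypothetical, the source does not say which Firebase product was involved in each incident, and a probe like this should only be run against databases you are authorized to test.

```python
import urllib.error
import urllib.request


def classify_probe(status: int) -> str:
    """Map the HTTP status of an unauthenticated read to a finding."""
    if status == 200:
        return "publicly readable"
    if status in (401, 403):
        return "read denied"
    return "inconclusive"


def probe_realtime_database(base_url: str, timeout: float = 5.0) -> str:
    """Attempt an unauthenticated shallow read of the database root.

    base_url is the database URL of a project you own (hypothetical
    parameter name); no credentials are sent with the request.
    """
    url = base_url.rstrip("/") + "/.json?shallow=true"
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return classify_probe(resp.status)
    except urllib.error.HTTPError as err:
        # Denied reads arrive as HTTP errors; classify by status code.
        return classify_probe(err.code)
    except urllib.error.URLError:
        # Network failure or unknown host: nothing can be concluded.
        return "inconclusive"
```

Separating `classify_probe` from the network call keeps the decision logic testable offline. A result of "read denied" at the root does not prove every path is protected, since rules can differ per path.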

Optimistic Outlook

Increased awareness of these vulnerabilities may drive developers to prioritize security and implement robust safeguards. The identification of common root causes allows for targeted solutions and improved security practices.

Pessimistic Outlook

The prevalence of easily preventable errors suggests a lack of security expertise and awareness among AI app developers. Without significant changes in development practices, data breaches are likely to continue.
