Results for: "Flaws"

Keyword Search 8 results

Critical AI Architectural Decisions for Product Success

THE GIST: Poor AI architecture, rather than the model itself, often causes product failure by magnifying design flaws and driving runaway costs.

MIT Study Exposes Security Risks in AI Agents

THE GIST: An MIT study reveals significant security flaws and a lack of transparency in agentic AI systems, highlighting the need for developer responsibility.

Google's Nano Banana 2: Faster, More Powerful AI Image Generation

THE GIST: Google's Nano Banana 2 combines faster image generation with improved text rendering and web searching capabilities.

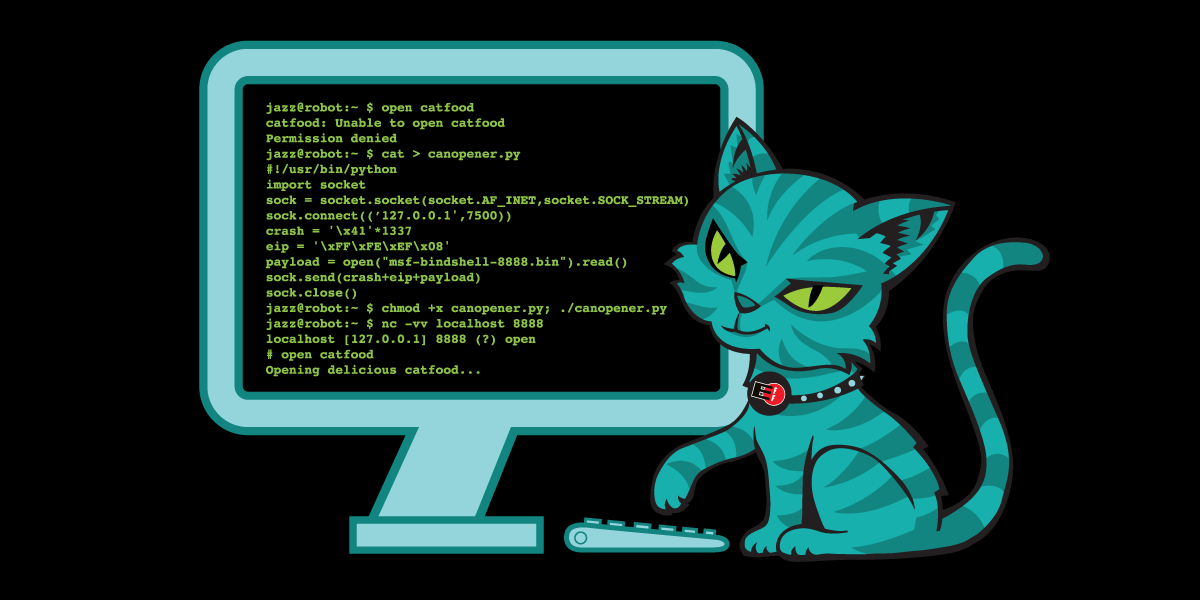

Study Exposes Security Flaws in Autonomous LLM Agents

THE GIST: A red-teaming study reveals significant security, privacy, and governance vulnerabilities in autonomous language-model-powered agents.

EFF Requires Human Authorship for Open-Source Code Contributions

THE GIST: EFF now requires human authorship and understanding of code contributions to its open-source projects, addressing concerns about LLM-generated bugs and review burdens.

Librsvg Receives First AI-Generated Pull Requests

THE GIST: Librsvg received its first AI-generated pull requests on GitHub, which were quickly closed because they contained problematic code suggestions.

Cloudflare AI Playground Hacked via Reflected XSS: Chat History at Risk

THE GIST: A reflected XSS vulnerability in Cloudflare's AI Playground bypassed Cloudflare's WAF and allowed attackers to steal user chat history and interact with connected MCP servers.

Cipher AI Pentester Offers Fast, Affordable Security Assessments

THE GIST: Cipher, an AI-powered pentesting tool, offers security assessments in approximately 2 hours for $999, with unlimited retesting.