Results for: "Flaws"

Keyword search: 9 results

AI Search Evaluation Flaws: A Guide to Robust Benchmarking

THE GIST: Ad hoc evaluation of AI search leads to costly errors, making structured, tailored benchmarking necessary.

Operational Gaps Hinder Enterprise AI Adoption; Integration Platforms Emerge as Key

THE GIST: Enterprise AI adoption struggles without robust integration and dedicated operational teams.

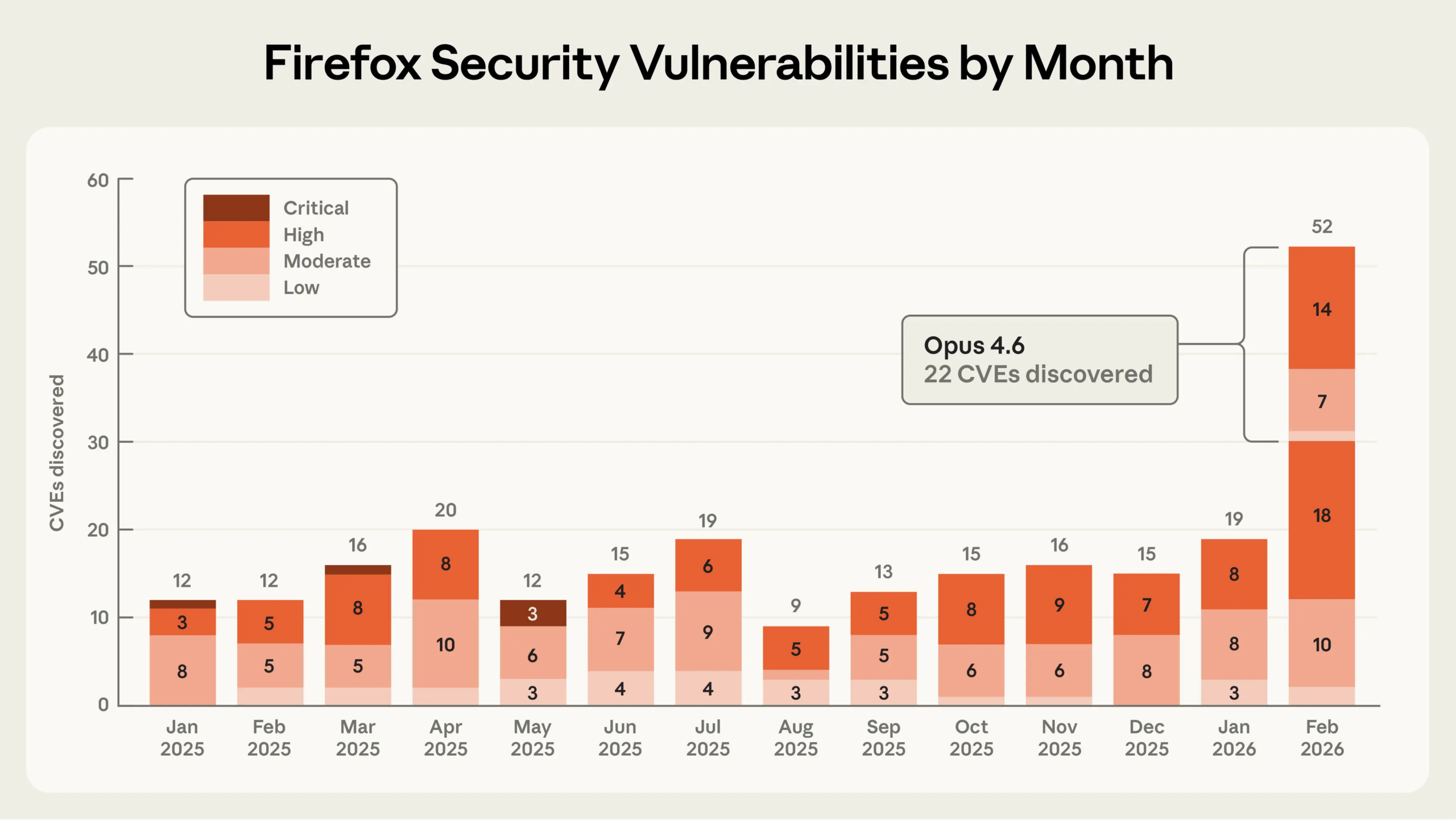

Anthropic's Claude Opus AI Uncovers 22 Firefox Security Flaws

THE GIST: Anthropic's AI model Claude Opus independently identified 22 security vulnerabilities in Firefox.

Anthropic's Claude AI Uncovers 22 Firefox Vulnerabilities, Including 14 High-Severity Flaws

THE GIST: Anthropic's Claude Opus AI identified 22 vulnerabilities, 14 high-severity, in Firefox during a two-week security partnership with Mozilla.

AI Agents Expose Critical Flaws in OAuth 2.0 Authorization Model

THE GIST: AI agents fundamentally break OAuth's authorization model, creating significant security vulnerabilities.

AI-Generated Code Poses Significant Security Risks by Prioritizing Functionality Over Safety

THE GIST: AI-generated code frequently introduces critical security vulnerabilities because it is optimized for functionality rather than safety.

Analysis Reveals Gary Marcus's AI Skepticism: Strong on Technical Flaws, Weak on Market Predictions

THE GIST: A dataset analysis validates Gary Marcus's technical AI critiques but contradicts his market forecasts.

Beyond Hype: Unpacking AI's Underrated Systemic Flaws

THE GIST: A critical analysis reveals inherent problems with AI that go beyond the commonly discussed existential risks.

Critical AI Architectural Decisions for Product Success

THE GIST: Poor AI architecture, not the model itself, often causes product failure because it magnifies design flaws and drives runaway costs.