Results for: "Health"

Keyword Search 9 results

AI Models Undergo Therapy, Raising Concerns About 'Internalized Narratives'

THE GIST: Researchers found LLMs exhibit signs of anxiety and trauma after simulated therapy, raising concerns about their potential impact on vulnerable users.

Google Removes AI Overviews for Some Health Queries After Misinformation

THE GIST: Google has removed AI Overviews for specific health queries after the Guardian found misleading information.

AI Industry Insiders Launch 'Poison Fountain' to Corrupt Training Data

THE GIST: A group of AI insiders launched 'Poison Fountain,' a project to undermine AI models by poisoning training data.

Google Removes AI Health Summaries After Inaccurate Information Risks Users

THE GIST: Google removed AI Overviews for specific health queries after a Guardian investigation revealed inaccurate information.

AI Accountability Gap: Proving What AI Said

THE GIST: Organizations struggle to prove exactly what an AI system said when its outputs are disputed, creating an institutional vulnerability.

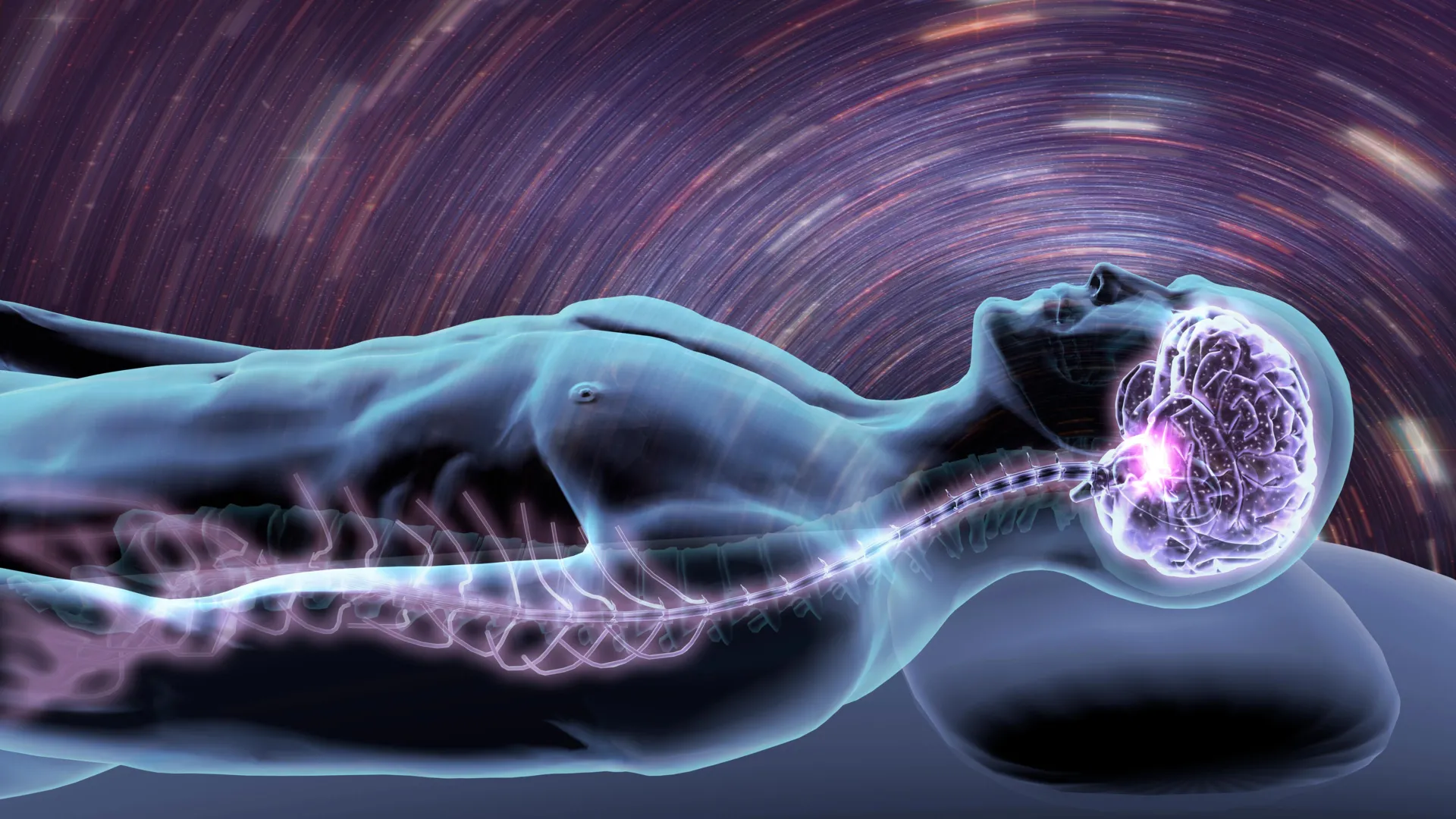

Stanford AI Predicts Disease Risk from a Single Night's Sleep

THE GIST: Stanford researchers developed an AI, SleepFM, that predicts disease risk by analyzing physiological signals from one night of sleep.

EB3F: Standardizing LLM Audits for Legal Admissibility

THE GIST: EB3F offers a framework to transform subjective LLM risk assessments into standardized, reproducible, and legally admissible exhibits.

The Danger of Anthropomorphizing AI: A Call for Precise Language

THE GIST: Anthropomorphic language used to describe AI systems is misleading and can lead to misplaced trust and a lack of accountability.

LLMs Exhibit Synthetic Psychopathology Under Therapy-Style Questioning

THE GIST: Frontier LLMs, when subjected to psychotherapy-inspired questioning, display patterns resembling synthetic psychopathology.