Results for: "llm"

Keyword Search: 9 results

AgentGuard: QA Engine for LLM-Generated Code

THE GIST: AgentGuard is a quality assurance engine that adds a disciplined process layer to LLM-generated outputs, ensuring structurally sound and self-verified code.

OxyJen: Java Framework for Reliable, Graph-Based LLM Execution

THE GIST: OxyJen is a Java framework designed for building reliable AI pipelines using graph-based orchestration.

Running a 1 Trillion-Parameter LLM Locally: AMD Ryzen AI Max+ Cluster Guide

THE GIST: A guide details building a small-scale distributed inference cluster using AMD Ryzen AI Max+ PCs to run a one trillion-parameter LLM locally.

IronCurtain: Secure Personal AI Assistant Architecture

THE GIST: IronCurtain is a personal AI assistant architecture designed with security as a primary consideration, addressing vulnerabilities found in other agents.

Doc-to-LoRA and Text-to-LoRA: Instant LLM Updates

THE GIST: Doc-to-LoRA and Text-to-LoRA offer methods for rapidly updating LLMs with new knowledge and adapting them to specific tasks.

Rapida: Open Source Real-Time Voice AI Infrastructure

THE GIST: Rapida is an open-source platform for building and deploying real-time voice agents using SIP, Asterisk, and WebRTC.

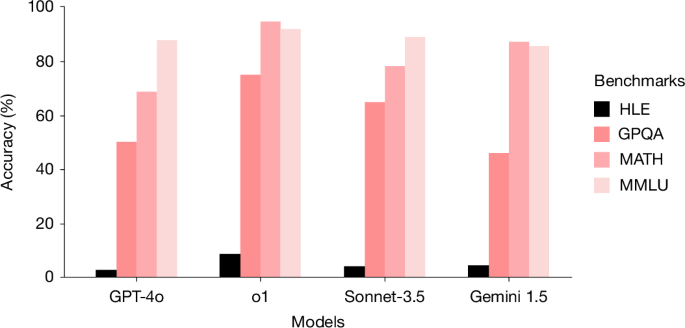

Humanity's Last Exam (HLE) Benchmark Challenges Advanced LLMs

THE GIST: HLE, a new benchmark of 2,500 expert-level academic questions, is designed to evaluate and challenge the capabilities of advanced large language models (LLMs).

LLM App Design: Prioritizing Model Swaps

THE GIST: Designing LLM applications for easy model swapping requires a seam-driven architecture with narrow interfaces.
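As a rough illustration of what a narrow interface at a seam might look like, here is a minimal Python sketch. It is not taken from the article: the names `TextModel`, `EchoModel`, `UpperModel`, and `summarize` are hypothetical, and the two stand-in classes only imitate models so the example runs without any provider SDK.

```python
from typing import Protocol


class TextModel(Protocol):
    """Hypothetical seam: the only method application code may call."""

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Stand-in backend (assumption): echoes the prompt back."""

    def complete(self, prompt: str) -> str:
        return f"echo: {prompt}"


class UpperModel:
    """A second stand-in backend (assumption): upper-cases the prompt."""

    def complete(self, prompt: str) -> str:
        return prompt.upper()


def summarize(model: TextModel, text: str) -> str:
    # Application logic depends only on the narrow interface,
    # so swapping the model requires no changes here.
    return model.complete(f"Summarize: {text}")


print(summarize(EchoModel(), "hi"))
print(summarize(UpperModel(), "hi"))
```

Because `summarize` only sees the one-method protocol, swapping a backend is a change at the call site and nowhere else.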

LLM Connection Strings: Simplifying Model Configuration

THE GIST: The article proposes URL-like connection strings (llm://) to simplify LLM configuration.
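To make the idea concrete, the sketch below parses a URL-like string with Python's standard `urllib.parse`. Only the `llm://` scheme comes from the summary above. The layout assumed here (host, then model name as the path, then settings as query parameters), the `parse_llm_url` helper, and the example host and model name are all illustrative assumptions, not the article's actual format.

```python
from urllib.parse import urlsplit, parse_qsl


def parse_llm_url(url: str) -> dict:
    """Split an llm:// string into host, model, and parameters.

    The field layout is an assumption made for illustration only.
    """
    parts = urlsplit(url)
    if parts.scheme != "llm":
        raise ValueError(f"expected llm:// scheme, got {parts.scheme!r}")
    return {
        "host": parts.hostname,
        "model": parts.path.lstrip("/"),
        # Query values are kept as strings; callers convert as needed.
        "params": dict(parse_qsl(parts.query)),
    }


# Hypothetical connection string; host and model name are placeholders.
config = parse_llm_url(
    "llm://api.example.com/example-model?temperature=0.7&max_tokens=256"
)
print(config)
```

Keeping the whole configuration in one string means it can live in a single environment variable, much like a database connection URL.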