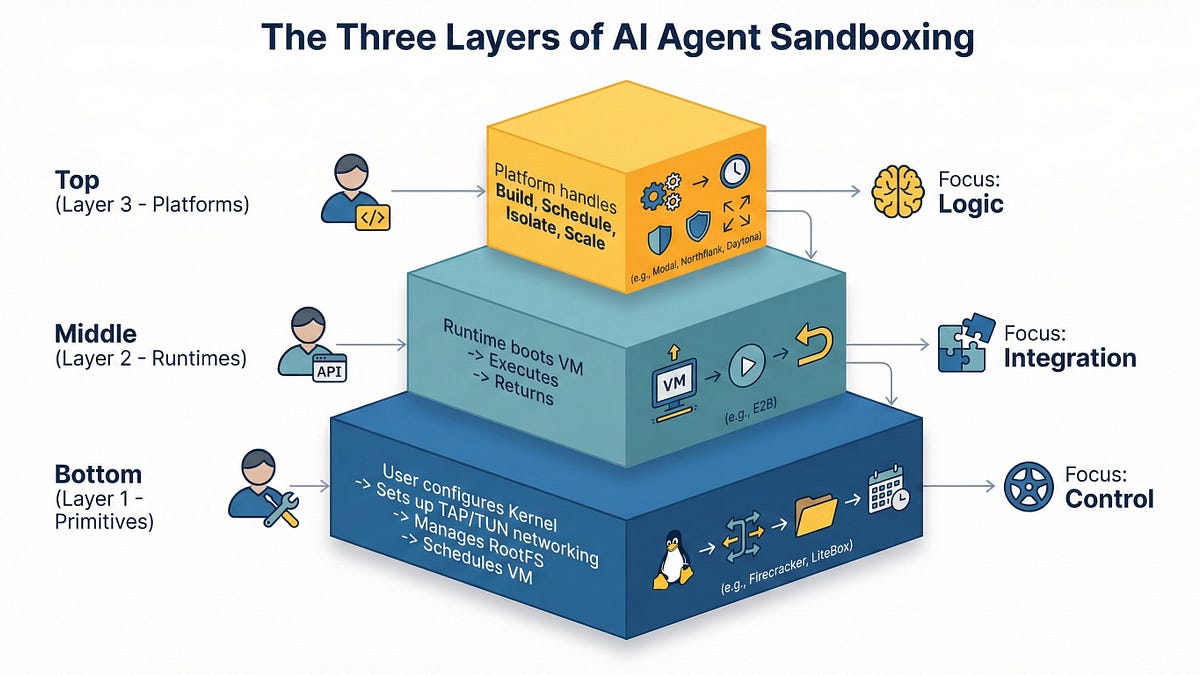

AI Agent Sandboxing: Navigating Primitives, Runtimes, and Platforms in 2026

THE GIST: Because AI agents execute untrusted code, sandboxing them in 2026 means choosing carefully among primitives, runtimes, and managed platforms.

AI for Good: FightHealthInsurance and Other Examples

THE GIST: FightHealthInsurance, an AI tool that helps people appeal denied insurance claims, exemplifies AI used for social good.

AI Agents Reshape Online Shopping: Invisible Carts and Delegated Trust

THE GIST: AI agents, guided by user policies, are starting to handle online shopping, making the traditional shopping cart mostly invisible.

SatGate: An Economic Firewall for AI Agent Traffic

THE GIST: SatGate is an open-source API gateway that enforces economic governance for AI agents, preventing uncontrolled spending.

AI Incoherence: Model Intelligence Doesn't Guarantee Alignment

THE GIST: Larger AI models may fail in more incoherent ways, suggesting that scale alone won't eliminate misalignment risks.

DriftProof: Specification for Preventing LLM Behavioral Drift

THE GIST: DriftProof is a behavioral governance architecture designed to prevent silent behavioral drift in adaptive systems, particularly large language models.

LLM Cracks Anthropic's 'Anonymous' Interview Data

THE GIST: Researchers used LLMs to de-anonymize Anthropic's supposedly anonymous interview data, raising data privacy concerns.

AI Agent Gains Persistent Memory, Bridging Gap Between Tool and Teammate

THE GIST: AI agents now have persistent memory, enabling them to retain user preferences and learn from past experiences.

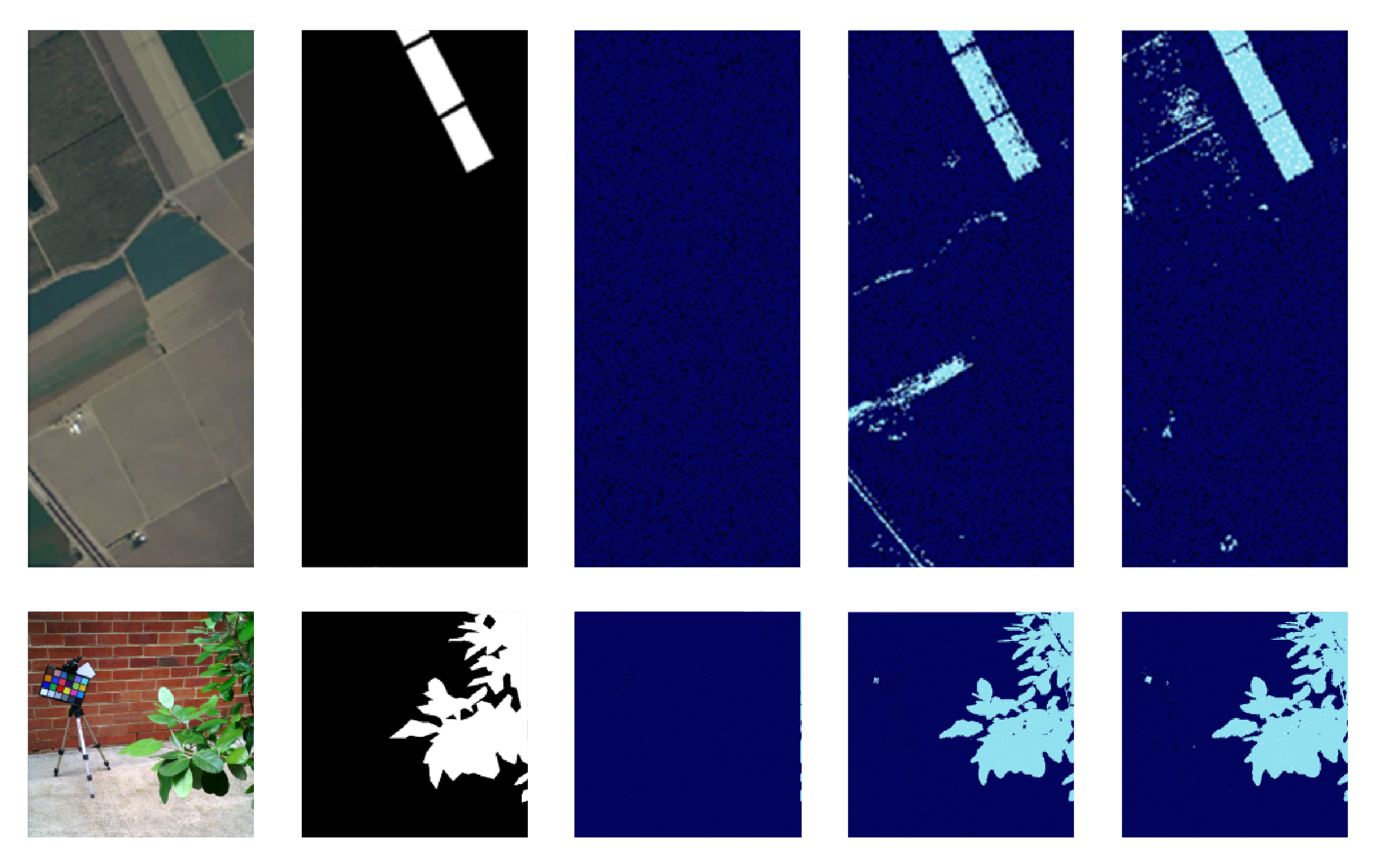

AI-Enhanced Sensor 'Sniffs' Out Spectral Targets in Real Time

THE GIST: An AI-enhanced sensor developed at Berkeley Lab can identify spectral targets in real time, eliminating data-processing bottlenecks.