Results for: "Access"

Keyword Search: 9 results

AI Coding Tools May Boost Rust's Accessibility and Popularity

THE GIST: AI coding tools are evolving, potentially making languages like Rust more accessible and popular by automating code generation and review.

Pentagon Issues Ultimatum to Anthropic Over AI Use in Military Applications

THE GIST: Pentagon demands Anthropic allow AI use for all legal military purposes or face consequences.

Agentic Power of Attorney (APOA): Open Standard for AI Agent Authorization

THE GIST: Agentic Power of Attorney (APOA) is proposed as an open standard for formally authorizing AI agents to act on behalf of humans in the digital world.

Open-Source AI Gateway Manages LLM Provider Access

THE GIST: AI Gateway is a self-hosted API gateway managing access to multiple LLM providers with individual client configurations.

ZSE: Open-Source LLM Inference Engine with Fast Cold Starts

THE GIST: ZSE is an open-source LLM inference engine designed for memory efficiency and high performance, boasting cold starts as fast as 3.9 s.

Unworldly: A Flight Recorder for AI Agents Ensuring Security and Compliance

THE GIST: Unworldly is a tool that records AI agent activity, providing tamper-proof audit trails and real-time risk detection.

AI-Runtime-Guard: Policy Enforcement for AI Agents

THE GIST: AI-Runtime-Guard is a policy enforcement layer for AI agents, preventing unauthorized actions without retraining or prompt engineering.

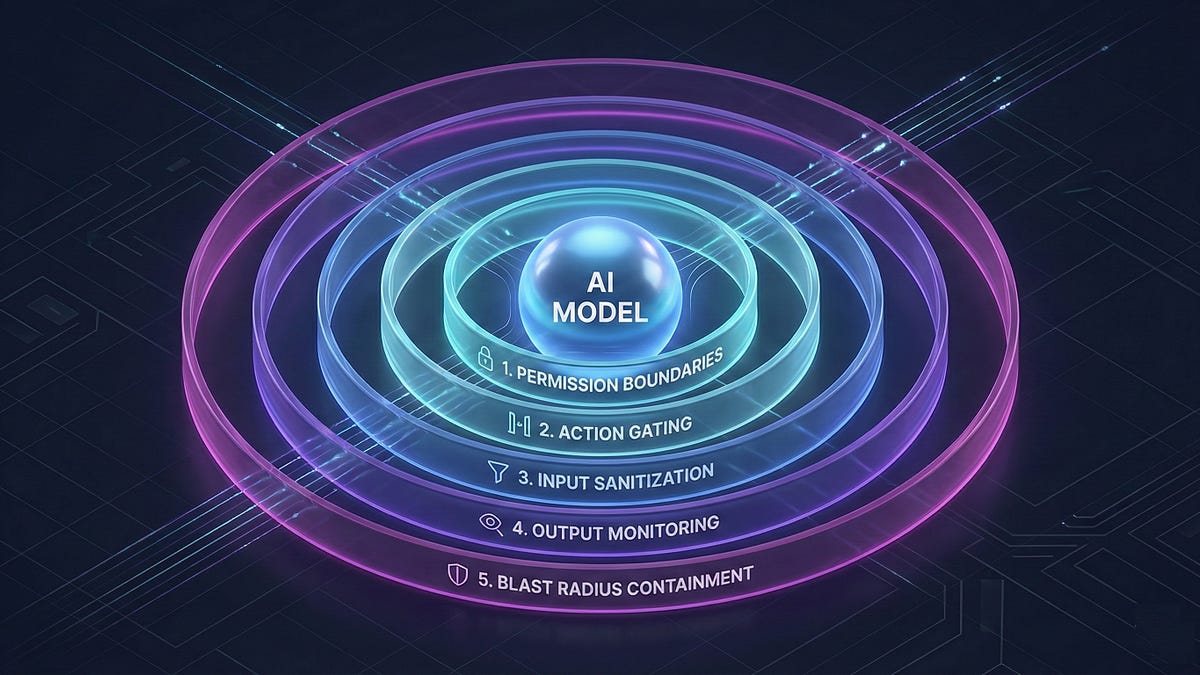

Prompt Injection: An Architectural Vulnerability in AI Agents

THE GIST: Prompt injection is an architectural problem requiring a layered defense, not just better models.

Wtx: CLI Tool Automates Git Worktrees for Parallel AI Agents

THE GIST: Wtx is a CLI tool that automates Git worktree management, enabling parallel AI agents to work efficiently in large repositories.