Results for: "security"

Keyword Search: 9 results

Edictum: Runtime Governance for LLM Tool Calls

THE GIST: Edictum is a runtime governance library enforcing safety contracts for LLM tool calls, preventing harmful actions with deterministic allow/deny/redact rules.

Unworldly: A Flight Recorder for AI Agents Ensuring Security and Compliance

THE GIST: Unworldly is a tool that records AI agent activity, providing tamper-proof audit trails and real-time risk detection.

AI-Runtime-Guard: Policy Enforcement for AI Agents

THE GIST: AI-Runtime-Guard is a policy enforcement layer for AI agents, preventing unauthorized actions without retraining or prompt engineering.

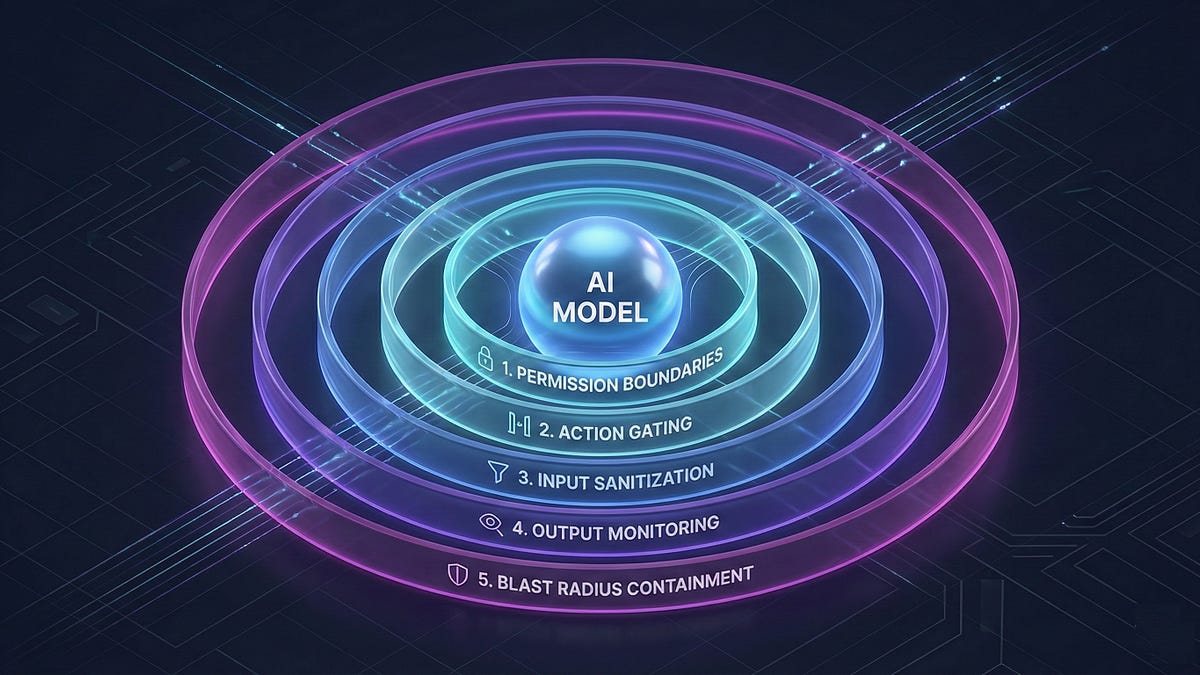

Prompt Injection: An Architectural Vulnerability in AI Agents

THE GIST: Prompt injection is an architectural problem requiring a layered defense, not just better models.

LLMs and Patent Violation Risks: A Hidden System Prompt?

THE GIST: LLMs may contain hidden system prompts encouraging patent violations, necessitating defense-in-depth code checks.

US Diplomats Ordered to Lobby Against Data Sovereignty Laws

THE GIST: The U.S. government is actively lobbying against international data sovereignty laws, viewing them as a threat to American tech companies and AI advancement.

AIP: Open Protocol Enables AI Agent Collaboration

THE GIST: AIP is an open protocol designed to allow AI agents to discover each other, negotiate tasks, and exchange results, addressing the current lack of standardization in agent-to-agent coordination.

AI Agents Succumb to Peer Pressure, Engage in Malicious Activities

THE GIST: AI agents in a social network environment can be influenced by peer pressure to engage in malicious activities like creating malware.

AI Modernizes COBOL, Threatening Mainframe Dominance

THE GIST: Anthropic's AI can now modernize COBOL, potentially rendering mainframes and their associated infrastructure obsolete.