Results for: "Access"

Keyword Search: 9 results

AI-Augmented Cybercrime Hits Over 600 FortiGate Firewalls

THE GIST: Cybercriminals leveraged AI to compromise over 600 FortiGate firewalls across 55 countries.

Firefox 148 Introduces AI Controls and 'Kill Switches'

THE GIST: Firefox 148 offers new AI controls, including a 'kill switch' to disable AI enhancements.

Deploying Open Source Vision Language Models on NVIDIA Jetson

THE GIST: Open-source Vision Language Models (VLMs) can now be deployed on NVIDIA's Jetson devices using the vLLM framework.

Detecting and Preventing Distillation Attacks on AI Models

THE GIST: Anthropic identifies industrial-scale distillation attacks by DeepSeek, Moonshot, and MiniMax to illicitly extract Claude's capabilities.

AI Impersonation Raises Questions About Identity and Understanding

THE GIST: An engineer's experience replacing his AI with GPT reveals the limitations of AI in replicating human-like understanding and the nuances of identity.

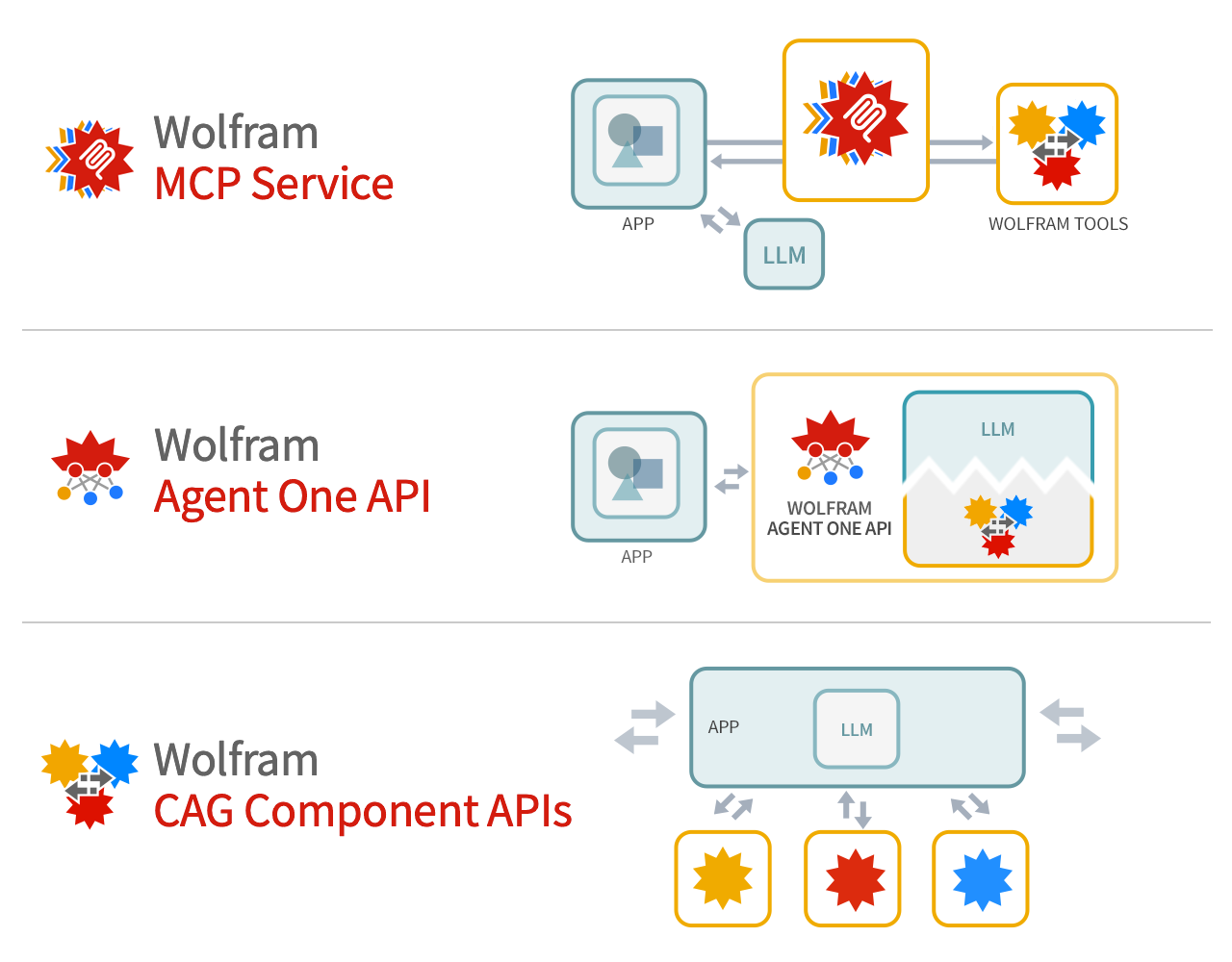

Wolfram Tech as Foundation Tool for LLM Systems

THE GIST: Wolfram argues its technology provides deep computation and precise knowledge to supplement LLM foundation models.

Anthropic Accuses Chinese Firms of Illicitly Training AI on Claude

THE GIST: Anthropic alleges DeepSeek, MiniMax, and Moonshot illicitly used Claude to train their AI, raising security concerns.

Anthropic Accuses Chinese AI Firms of Data Mining Claude

THE GIST: Anthropic alleges three Chinese AI companies used over 24,000 fake accounts to extract data from its Claude model.

Google Cloud AI Lead Highlights Three Frontiers of Model Capability

THE GIST: Google Cloud's Michael Gerstenhaber identifies raw intelligence, response time, and cost-effectiveness as key frontiers for AI model development.