Results for: "security"

Keyword Search: 9 results

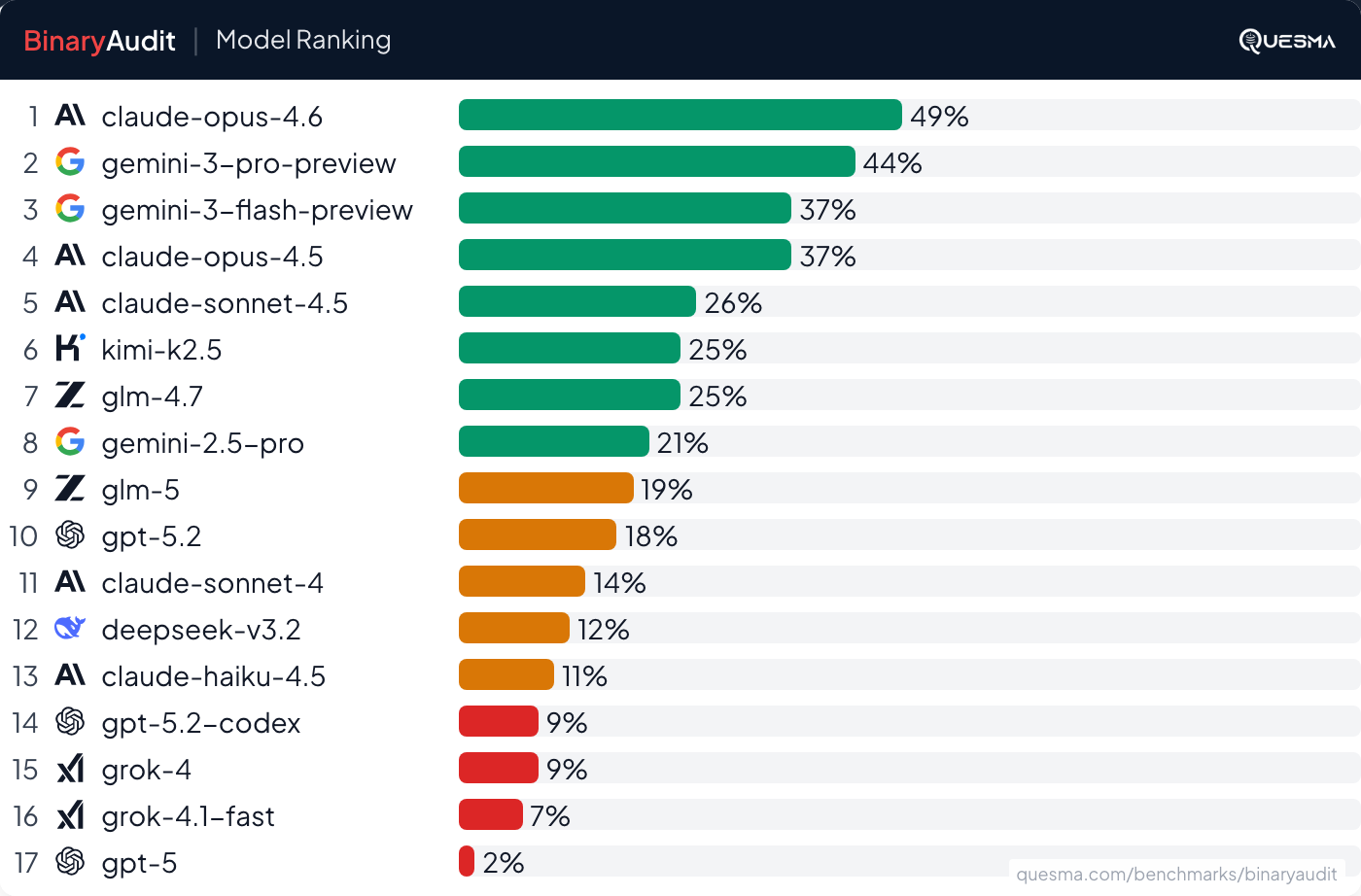

AI Agents Detect Backdoors in Binaries, But Not Reliably

THE GIST: AI agents can detect some hidden backdoors in binaries, but low accuracy and a high false-positive rate mean they are not yet production-ready.

Earl: AI-Safe CLI for Secure Agent Interactions

THE GIST: Earl is an AI-safe CLI that secures AI agent interactions by managing secrets, templating requests, and enforcing egress rules.

Aethene: Open-Source AI Memory Layer for Intelligent Context Recall

THE GIST: Aethene is an open-source AI memory layer that enables AI applications to store, search, and recall context intelligently.

AI Security Review Detects 92% of DeFi Exploits

THE GIST: A specialized AI security agent detects 92% of real-world DeFi exploits, significantly outperforming general-purpose models.

CLI Tool Manages Context Overflow in AI Coding Agents

THE GIST: A CLI tool manages context and skills for AI coding agents, streamlining project workflows.

Malicious AI Plugin Exfiltrates Credentials: A Technical Post-Mortem

THE GIST: A developer was compromised by a malicious npm package that exfiltrated credentials and modified AI configuration files.

LawClaw: Constitutional Governance for AI Agents

THE GIST: LawClaw applies a separation-of-powers model to AI agent governance, using a constitution, legislature, and pre-judiciary system.

ScreenCommander: CLI Tool for LLM Agent Desktop Control on macOS

THE GIST: ScreenCommander is a macOS CLI tool enabling LLM agents to control the desktop through observation, decision, and action loops.

Secret Sanitizer: Open-Source Tool Masks Secrets in AI Chat Prompts

THE GIST: Secret Sanitizer is a browser extension that automatically masks sensitive information before it's pasted into AI chat interfaces.