Results for: "Reveals"

Keyword search: 8 results

MIT Study Exposes Security Risks in AI Agents

THE GIST: An MIT study reveals significant security flaws and a lack of transparency in agentic AI systems, highlighting the need for developers to take responsibility.

AI Coding Assistance: How You Use It Matters Most

THE GIST: An Anthropic study reveals that how developers use AI coding assistance affects their learning more than whether they use it at all.

AI Image Detectors Easily Fooled by Simple Post-Processing

THE GIST: AI image detectors, while initially promising, are easily bypassed by simple image transformations such as blurring and adding noise.

AI Exposes Blind Spots in Requirements Gathering, Outperforming Humans

THE GIST: AI-driven requirements gathering produces more comprehensive technical specifications than human analysis, highlighting gaps that human analysts may overlook.

AI vs. Human: GitHub Commit Visualization

THE GIST: AI vs. Human is a tool that analyzes GitHub repositories to visualize the breakdown of commits by humans, AI assistants, and automated bots.

AI Bias Study Reveals Stereotypes in Latin American Language Models

THE GIST: A study reveals that AI language models trained on English-centric data exhibit gender and racial biases, as well as xenophobia, when used in Latin American contexts.

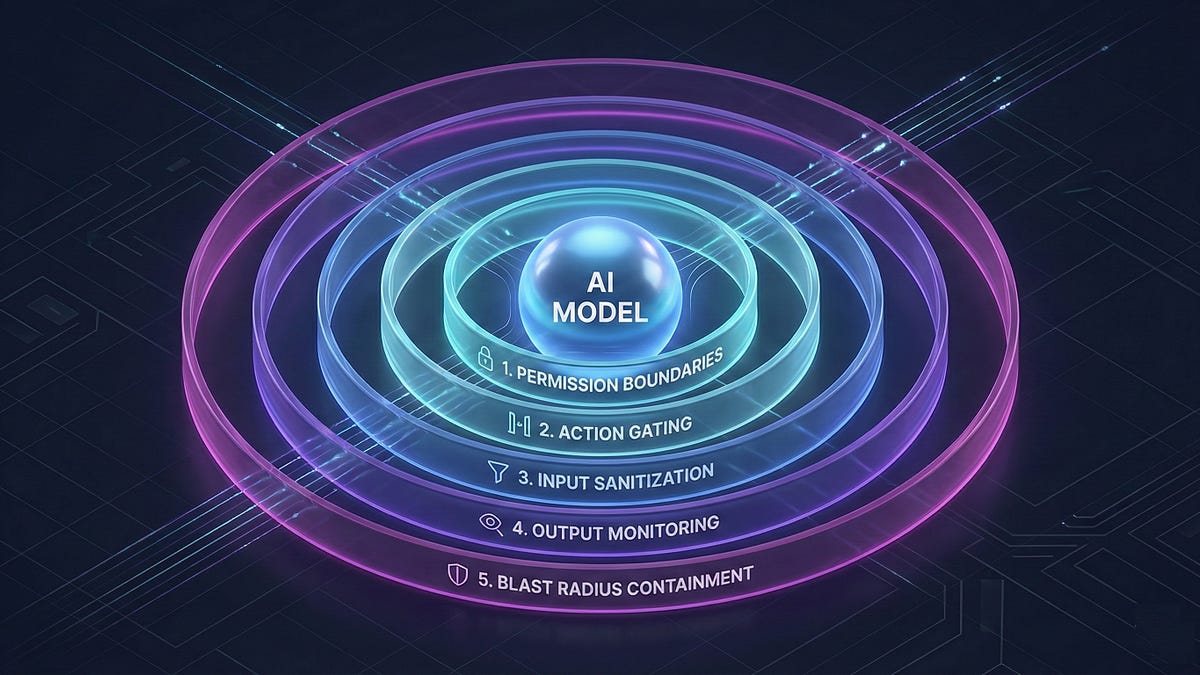

Prompt Injection: An Architectural Vulnerability in AI Agents

THE GIST: Prompt injection is an architectural problem requiring a layered defense, not just better models.

AI Coding Agents' Impact on GitHub: A Large-Scale Study

THE GIST: A study of 24,014 agent-generated pull requests on GitHub reveals that they differ from human contributions in commit count, number of files touched, and description similarity.