MIT Study Exposes Security Risks in AI Agents

THE GIST: An MIT study reveals significant security flaws and a lack of transparency in agentic AI systems, highlighting the need for developer responsibility.

ClawCare: Security Scanner and Runtime Guard for AI Agent Skills

THE GIST: ClawCare is a security tool that scans AI agent skills and guards them against attacks such as command injection and data theft, both statically and at runtime.

Cecil: Open-Source Memory and Identity Protocol for AI

THE GIST: Cecil is an open-source protocol providing AI with persistent memory, pattern recognition, and continuous context.

AgentGuard: QA Engine for LLM-Generated Code

THE GIST: AgentGuard is a quality assurance engine that adds a disciplined process layer to LLM-generated outputs, ensuring structurally sound and self-verified code.

Perplexity "Computer" Orchestrates AI Agents for Complex Tasks

THE GIST: Perplexity's "Computer" tool allows users to assign complex tasks to a system that coordinates multiple AI agents using various models.

Micron's $50B Boise Expansion Fuels AI Growth

THE GIST: Micron is investing $50 billion in Boise, Idaho, to build a new memory chip fabrication facility, creating 60,000 jobs and boosting US semiconductor manufacturing.

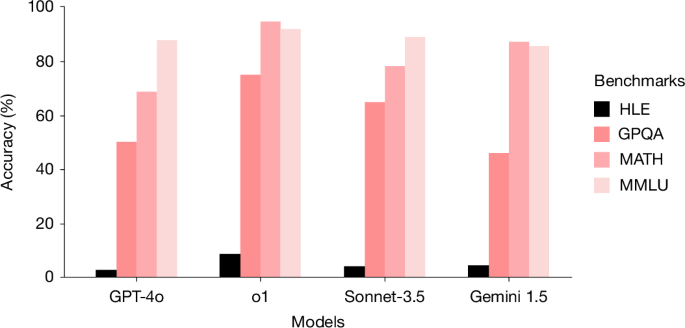

Humanity's Last Exam (HLE) Benchmark Challenges Advanced LLMs

THE GIST: HLE, a new benchmark of 2,500 expert-level academic questions, is designed to evaluate and challenge the capabilities of advanced large language models (LLMs).

FAR: AI Agents Gain Context via Persistent .meta Files

THE GIST: FAR enhances AI coding agents by generating persistent '.meta' files containing extracted content from binary files, making previously opaque data readable.

LLM App Design: Prioritizing Model Swaps

THE GIST: Designing LLM applications for easy model swapping requires a seam-driven architecture with narrow interfaces.
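
As a rough illustration of what a narrow interface at the model seam could look like, here is a minimal Python sketch. The names `TextModel`, `EchoModel`, `UppercaseModel`, and `summarize` are hypothetical stand-ins invented for this example and do not come from the article. Real backends would wrap a vendor SDK behind the same single method.

```python
from dataclasses import dataclass
from typing import Protocol


class TextModel(Protocol):
    """The seam: the only model-facing surface the application depends on."""

    def complete(self, prompt: str) -> str: ...


@dataclass
class EchoModel:
    """Hypothetical stand-in backend that repeats the prompt back."""

    def complete(self, prompt: str) -> str:
        return f"echo: {prompt}"


@dataclass
class UppercaseModel:
    """A second hypothetical backend, used to show that swapping is cheap."""

    def complete(self, prompt: str) -> str:
        return prompt.upper()


def summarize(model: TextModel, text: str) -> str:
    # Application logic sees only the TextModel interface,
    # never a concrete vendor client.
    return model.complete(f"Summarize: {text}")


if __name__ == "__main__":
    # Swapping models changes one argument, not the application code.
    print(summarize(EchoModel(), "hi"))
    print(summarize(UppercaseModel(), "hi"))
```

Because `summarize` depends on a single method, replacing one backend with another touches only the call site that constructs the model, which is the property the seam-driven approach is after.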