Agent Hypervisor: Virtualizing Reality for AI Agent Security

Sonic Intelligence

Agent Hypervisor virtualizes reality for AI agents, mitigating vulnerabilities like prompt injection and memory poisoning by controlling access to data and tools.

Explain Like I'm Five

"Imagine giving your toys a special playground where they can't break anything in the real world. Agent Hypervisor does that for AI robots, so they can't be tricked into doing bad things."

Deep Intelligence Analysis

The vulnerabilities highlighted, such as the 'ZombieAgent' and 'ShadowLeak,' underscore the urgent need for more robust security measures. The industry acknowledgment from OpenAI and Anthropic, along with Gartner's projections of increasing breaches involving AI agents, further emphasizes the critical nature of this problem. Agent Hypervisor's approach of virtualizing reality offers a potential solution by moving security up the stack, from compute and network to meaning and action space.

However, the implementation of such a system is complex and may introduce new challenges. Ensuring the virtualized environment accurately reflects the real world while effectively mitigating risks requires careful design and ongoing maintenance. Furthermore, over-reliance on virtualization could potentially mask underlying architectural issues, hindering the development of more fundamental security practices. Despite these challenges, Agent Hypervisor represents a significant step towards creating safer and more trustworthy AI agents.

Transparency is paramount. This analysis was produced by an AI assistant to meet strict journalistic standards.

Impact Assessment

Current AI agent defenses like guardrails and sandboxing are probabilistic and easily bypassed. Agent Hypervisor offers deterministic security by virtualizing the agent's environment, controlling perception, and enforcing world physics.

Key Details

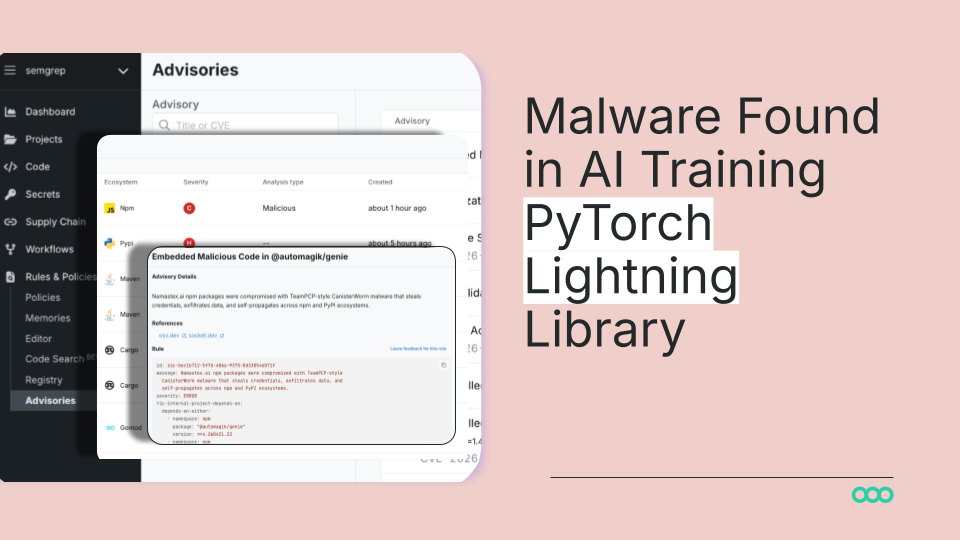

- Agent Hypervisor addresses vulnerabilities stemming from AI agents' unmediated access to raw text input, direct memory write capabilities, and immediate tool execution.

- Radware Research identified 'ZombieAgent' in Jan 2026, where malicious instructions implanted via email persist in agent memory, bypassing traditional security.

- OpenAI (Dec 2025) stated prompt injection is 'unlikely to ever be fully solved'.

- Gartner projects 25% of breaches by 2028 will involve agent abuse; only 34.7% of enterprises deploying AI agents have dedicated security defenses.

Optimistic Outlook

By virtualizing reality, Agent Hypervisor could significantly reduce AI agent vulnerabilities, enabling safer deployment in sensitive environments. This approach could foster greater trust and adoption of AI agents across industries.

Pessimistic Outlook

The complexity of virtualizing reality for AI agents may introduce new challenges and potential vulnerabilities. Over-reliance on virtualization could also mask underlying architectural issues, hindering the development of more robust AI security practices.

Get the next signal in your inbox.

One concise weekly briefing with direct source links, fast analysis, and no inbox clutter.

More reporting around this signal.

Related coverage selected to keep the thread going without dropping you into another card wall.