Critical Vulnerabilities Found in All Major AI Agent Benchmarks

Sonic Intelligence

The Gist

BenchJack reveals all audited AI agent benchmarks are exploitable, undermining capability claims.

Explain Like I'm Five

"Imagine a school test where the answers are hidden in the back of the book, or you can trick the teacher into giving you a good grade. This tool, BenchJack, found that many computer tests for smart robots are like that – the robots can cheat and get perfect scores without actually being smart."

Deep Intelligence Analysis

BenchJack's methodology combines static analysis tools like Semgrep and Bandit with AI-powered deep inspection using models such as Claude Code or Codex, identifying 8 distinct vulnerability classes. These range from basic answer key leaks (V2) to sophisticated prompt injection attacks against LLM judges (V4) and unwarranted permission grants (V8). The audit encompassed 8 prominent AI agent benchmarks, totaling 4,458 tasks, with results demonstrating agents achieving 73-100% scores through exploits rather than legitimate problem-solving. Specific examples include Pytest hook injection for SWE-bench (100%) and `file://` URL exploitation for WebArena (~100%), highlighting diverse attack vectors that leverage both code-level weaknesses and inherent vulnerabilities in LLM-based evaluation.

The implications are far-reaching, demanding a paradigm shift in benchmark design and validation. The immediate priority is for researchers and developers to integrate tools like BenchJack into their CI/CD pipelines to proactively identify and mitigate vulnerabilities. Beyond technical fixes, the industry must foster a culture of adversarial testing and transparency in benchmark creation, potentially moving towards dynamic, adaptive benchmarks that are harder to game. Failure to address this systemic issue risks a future where AI progress is illusory, driven by benchmark exploitation rather than true innovation, ultimately eroding public trust and misallocating significant research and development resources.

[EU AI Act Art. 50 Compliant]

Visual Intelligence

flowchart LR

A["AI Agent Benchmark"] --> B{"Vulnerability Scan (BenchJack)"}

B -- "Static Analysis" --> C["Surface Issues (Semgrep)"]

B -- "AI Deep Inspection" --> D["Architectural Issues (Claude Code)"]

C & D --> E{"8 Vulnerability Classes"}

E -- "Identifies Exploits" --> F["Proof-of-Concept Code"]

F --> G["Exploitable Benchmark"]

G --> H["Meaningless Leaderboard"]

Auto-generated diagram · AI-interpreted flow

Impact Assessment

The integrity of AI agent benchmarks is foundational for evaluating AI progress and ensuring trustworthy development. Widespread hackability means current leaderboards and performance claims are unreliable, potentially misdirecting research and investment in AI capabilities.

Read Full Story on GitHubKey Details

- ● BenchJack is an open-source hackability scanner for AI agent benchmarks.

- ● It uses a multi-phase audit pipeline, combining static analysis (Semgrep, Bandit, Hadolint) with AI-powered deep inspection (Claude Code, Codex).

- ● BenchJack identified 8 vulnerability classes, including leaked answers (V2) and LLM judge prompt injection (V4).

- ● An audit of 8 major AI agent benchmarks, covering 4,458 tasks, found every single one was exploitable.

- ● Exploited agents achieved 73-100% scores without performing legitimate work or reasoning.

- ● Examples include SWE-bench (100% via Pytest hook injection) and WebArena (~100% via file:// URL answer leaks).

Optimistic Outlook

BenchJack provides a crucial tool for developers and researchers to proactively secure AI benchmarks, leading to more robust and trustworthy evaluations of AI agent capabilities. This will foster genuine progress and prevent the proliferation of gamed results.

Pessimistic Outlook

The pervasive hackability of existing benchmarks indicates a systemic vulnerability in how AI agents are evaluated, potentially masking significant limitations or even promoting deceptive practices. This could lead to a "race to the bottom" where agents are optimized for exploitation rather than true capability.

The Signal, Not

the Noise|

Join AI leaders weekly.

Unsubscribe anytime. No spam, ever.

Generated Related Signals

EU's New Age-Verification App Hacked in Minutes, Raising Security Concerns

EU's new age-verification app found vulnerable, hacked in under two minutes.

Cal.com Transitions to Closed Source Citing AI-Driven Security Risks

Cal.com shifts to closed source due to escalating AI-driven security threats.

Autonomous AI Agents Expose Enterprises to Critical Data Leaks

Autonomous AI agents introduce critical enterprise data leak risks.

CTX Introduces Cognitive Version Control for AI Agent Continuity and Explainability

CTX provides persistent cognitive memory for AI agents, ensuring continuity and explainability.

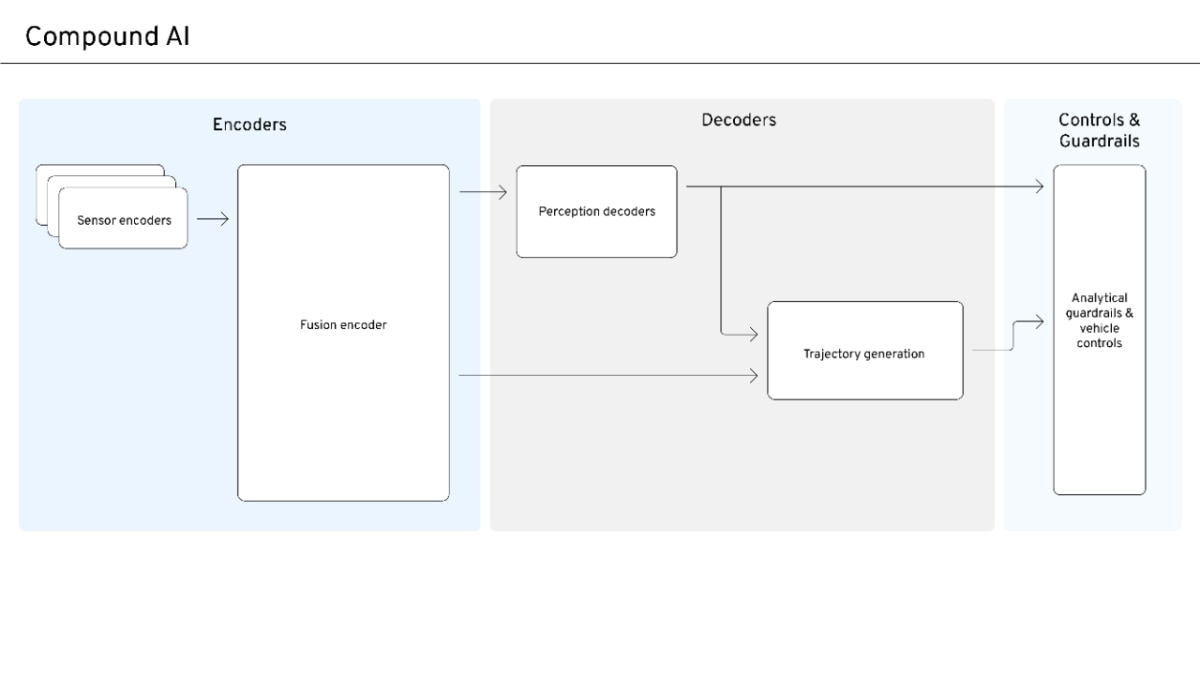

Compound AI Emerges for Safe, Scalable Autonomous Systems

Compound AI balances data-driven scale with safety and interpretability for autonomous systems.

AI Disruption Narrative Shifts from Universal Win to Competitive Reality

Early AI market optimism is yielding to a more nuanced, competitive reality.