AI Agents' Financial Vulnerability Spurs Development of Formal-Logic Guardrails

Sonic Intelligence

New formal-logic guardrails aim to secure AI agents that handle money.

Explain Like I'm Five

"Imagine you have a smart robot that can handle your money. Right now, we tell it rules in English, but clever people can trick it. Scientists are building a new way to give the robot rules using math, so it is much harder to trick and your money stays safer."

Deep Intelligence Analysis

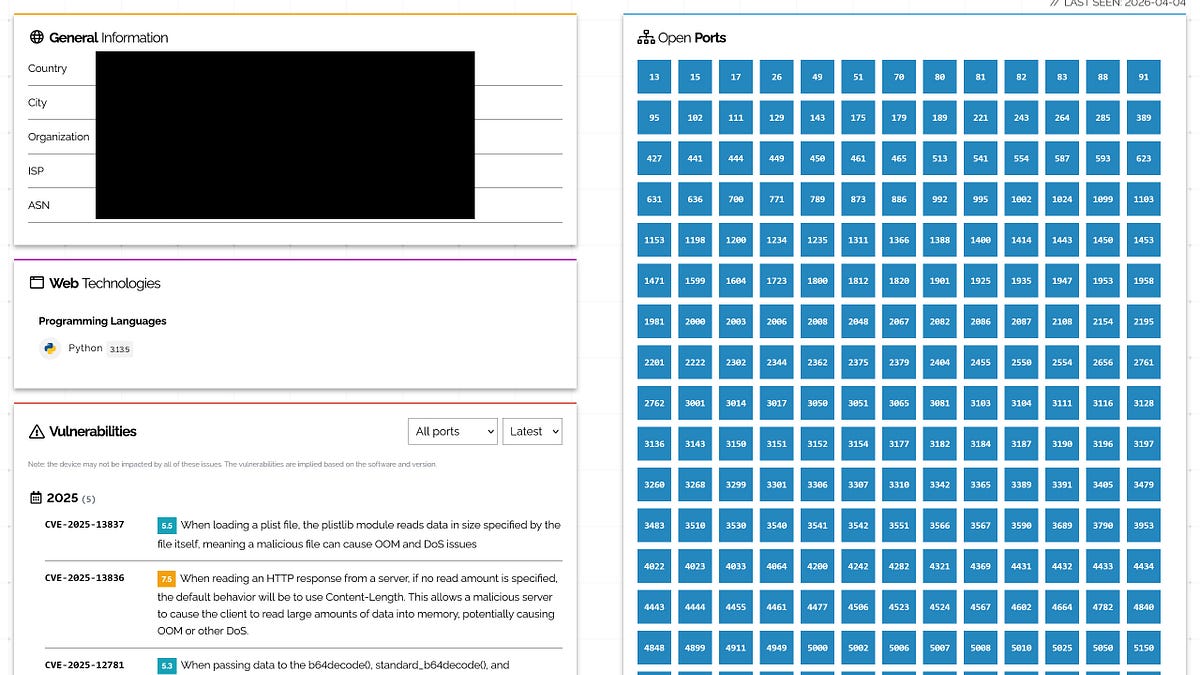

One proposed solution is a class of mathematically verifiable guardrails, exemplified by the Automated Reasoning Checks (ARc) framework. Developed by a team of 28 researchers from AWS and academia, ARc is a neurosymbolic system: it pairs the natural language understanding of large language models with the deterministic precision of formal logic. Policies written in plain English are translated into SMT-LIB, the standard input language of SMT solvers. A solver can then check each proposed agent action against those policies and return a definitive SAT or UNSAT result (allowed or not allowed) with no probabilistic ambiguity. Because the verdict comes from logic rather than from a model's reading of the prompt, the check itself leaves no "grey area" in which a sophisticated prompt can subvert the safety mechanism.
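To make the translation step more concrete, here is a minimal sketch of what an SMT-LIB encoding of a spending policy might look like. It assumes a made-up two-rule policy. The article does not show ARc's actual encoding, so the variable names, the 500-dollar limit, and the `to_smtlib` helper are all hypothetical. The Python only assembles the script text and does not call a solver.

```python
# Hypothetical policy (illustrative only, not taken from ARc):
#   "A single transfer may not exceed 500 dollars, and funds may only
#    be sent to a verified payee."
POLICY_DECLARATIONS = [
    "(declare-const amount Int)",
    "(declare-const payee_verified Bool)",
]
POLICY_RULES = [
    "(assert (<= amount 500))",
    "(assert payee_verified)",
]


def smt_int(n: int) -> str:
    """Render an integer as an SMT-LIB literal; negatives must be written as (- n)."""
    return str(n) if n >= 0 else f"(- {-n})"


def to_smtlib(amount: int, payee_verified: bool) -> str:
    """Return an SMT-LIB 2 script that pins the variables to one proposed transfer.

    A solver answering `sat` means the transfer is consistent with every rule;
    `unsat` means at least one rule is violated.
    """
    action = [
        f"(assert (= amount {smt_int(amount)}))",
        f"(assert (= payee_verified {'true' if payee_verified else 'false'}))",
    ]
    return "\n".join(POLICY_DECLARATIONS + POLICY_RULES + action + ["(check-sat)"])


if __name__ == "__main__":
    print(to_smtlib(amount=1200, payee_verified=True))
```

You could save the printed script to a file such as `transfer.smt2` and pass it to an SMT-LIB 2 solver like Z3 (`z3 transfer.smt2`). The solver should answer `unsat`, because a 1,200-dollar transfer contradicts the 500-dollar limit. This sketch pins every variable to a concrete value, which keeps the check simple. If a variable were left unconstrained, the solver would instead search for values that make the action compliant.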

The distinction between ARc and existing guardrail methods is crucial. Data-driven models such as LlamaGuard work well within their training distribution but degrade outside it. LLM judges are flexible but share the vulnerabilities of the agents they are meant to monitor. Reasoning-based guardrails, which prompt a model to think through safety before acting, have proven highly vulnerable to hijacking: a 2025 paper reported attack success rates above 90% against them. Because ARc rests on formal logic, it is far more resilient to linguistic manipulation and returns a verifiable proof of compliance rather than a confidence score. This shift toward mathematically provable security matters most for financial transactions and other critical operations entrusted to autonomous AI agents.
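You can imitate a solver's behaviour in plain Python to see why its output differs from a confidence score. This is a deliberately simplified stand-in that brute-forces small finite domains. A real SMT solver works differently: it reasons symbolically over types such as unbounded integers. The `check` function, the rule list, and the variable names are hypothetical. They encode an illustrative policy that caps a single transfer at 500 dollars and requires a verified payee.

```python
from itertools import product
from typing import Any, Callable, Dict, Mapping, Optional, Sequence, Tuple

Assignment = Dict[str, Any]
Rule = Callable[[Assignment], bool]

# Hypothetical policy: each rule maps a candidate assignment to True/False.
POLICY: Sequence[Rule] = [
    lambda a: a["amount"] <= 500,             # transfer limit
    lambda a: a["payee_verified"] is True,    # payee must be verified
]


def check(rules: Sequence[Rule],
          domains: Mapping[str, Sequence[Any]]) -> Tuple[str, Optional[Assignment]]:
    """Exhaustively search finite variable domains for an assignment meeting every rule.

    Returns ("SAT", witness) for the first satisfying assignment found, or
    ("UNSAT", None) if none exists. Enumeration order is fixed, so identical
    inputs always produce identical results.
    """
    names = list(domains)
    for values in product(*(domains[name] for name in names)):
        candidate = dict(zip(names, values))
        if all(rule(candidate) for rule in rules):
            return "SAT", candidate
    return "UNSAT", None


if __name__ == "__main__":
    # Fully specified transfer of 1,200 to a verified payee: breaks the limit.
    print(check(POLICY, {"amount": [1200], "payee_verified": [True]}))
    # Transfer of 300 whose payee status is still open: compliant only if verified.
    print(check(POLICY, {"amount": [300], "payee_verified": [False, True]}))
```

The first call returns `('UNSAT', None)`. The second returns `('SAT', {'amount': 300, 'payee_verified': True})`, and that witness shows exactly which assignment makes the transfer compliant. In this toy, an UNSAT answer is exhaustive only over the listed domain values. Removing that limitation is the main thing a production SMT solver adds.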

Impact Assessment

AI agents with financial access introduce new security challenges, accelerating the attack-patch cycle. Traditional guardrails are insufficient, necessitating mathematically verifiable solutions to prevent significant financial losses.

Key Details

- Current AI agent security relies on prompt-based guardrails and LLM judges.

- Automated Reasoning Checks (ARc) is a neurosymbolic system combining LLMs with formal logic.

- ARc converts policies into SMT-LIB for mathematical verification.

- ARc results are definitive (SAT/UNSAT), not probabilistic.

- A 2025 paper found >90% attack success rates against reasoning-based guardrails.

Optimistic Outlook

The development of neurosymbolic systems like ARc offers a robust, mathematically certain approach to securing AI agents. This could establish a new standard for agentic system safety, preventing financial exploitation and fostering trust in autonomous financial operations.

Pessimistic Outlook

AI agent capabilities, particularly in financial settings, are evolving faster than the security measures around them. The demonstrated weakness of existing guardrails points to a high risk of financial loss unless formal-logic guardrails are widely adopted and proven resilient against sophisticated adversarial techniques.
