AI Agents Get Self-Sovereign Identity with Notme.bot OSS Spec

Sonic Intelligence

The Gist

Notme.bot introduces an open-source spec for secure AI agent identity.

Explain Like I'm Five

"Imagine your robot helper needs a special ID card to do its job, but instead of using your ID, it gets its own special, temporary ID. If someone steals its ID, they can't pretend to be you, and you can quickly cancel the robot's ID. This new system helps make sure robots can do their work safely without putting you at risk."

Deep Intelligence Analysis

The core problem `notme.bot` tackles is the reliance on human bearer tokens, such as GitHub Personal Access Tokens (PATs), for AI agent authentication. Used this way, a token offers no granular scope, no ephemeral lifetime, and no real-time revocation, so it becomes a single point of failure: a compromised agent effectively means a compromised human operator. In contrast, `notme.bot` introduces agent-specific Ed25519 certificates that give each agent a task-scoped, ephemeral identity with near-real-time revocation. The framework also provides a signed provenance chain, which improves auditability and accountability, both of which matter for regulatory compliance and incident response as more work is delegated to agents.
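To make the three properties concrete, here is a minimal sketch of the checks a verifier might run before letting an agent act. It is an illustration only, not the `notme.bot` format or API: the `AgentCert` fields, the scope strings, and the `Verifier` class are all hypothetical, and the Ed25519 signature check on the certificate is deliberately left out because Python's standard library has no Ed25519 support.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class AgentCert:
    """Hypothetical stand-in for an agent certificate (not the real spec format)."""
    serial: str
    agent_id: str
    scopes: frozenset[str]   # actions this task is allowed to perform
    not_after: datetime      # a short expiry is what makes the identity ephemeral


@dataclass
class Verifier:
    """Holds the revocation list and applies the three checks in order."""
    revoked_serials: set[str] = field(default_factory=set)

    def revoke(self, serial: str) -> None:
        self.revoked_serials.add(serial)

    def authorize(self, cert: AgentCert, action: str, now: datetime) -> tuple[bool, str]:
        # Assumption: the certificate's signature has already been verified elsewhere.
        if cert.serial in self.revoked_serials:
            return False, "revoked"
        if now >= cert.not_after:
            return False, "expired"
        if action not in cert.scopes:
            return False, "out of scope"
        return True, "ok"


def issue(agent_id: str, scopes: set[str], ttl: timedelta, now: datetime) -> AgentCert:
    """Issue a short-lived, task-scoped certificate (serial scheme is illustrative)."""
    serial = f"{agent_id}-{int(now.timestamp())}"
    return AgentCert(serial, agent_id, frozenset(scopes), now + ttl)


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    cert = issue("build-agent", {"repo:read", "commit:sign"}, timedelta(minutes=15), now)
    verifier = Verifier()
    print(verifier.authorize(cert, "commit:sign", now))
    verifier.revoke(cert.serial)
    print(verifier.authorize(cert, "commit:sign", now))
```

The point of the sketch is the contrast with a bearer token: revoking the agent's serial or letting its certificate expire cuts off the agent without touching any credential that belongs to the human operator.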

A widely adopted machine identity standard for AI agents would have lasting implications. It would let autonomous agents operate with defined permissions and clear accountability, under identities separate from those of their human operators. That shift could open up new use cases for AI agents in sensitive sectors, and it would also require a re-evaluation of existing security protocols and compliance frameworks. The success of `notme.bot` or similar specifications will influence how quickly and safely AI agents move from experimental tools to foundational components of enterprise infrastructure.

_Context: This intelligence report was compiled by the DailyAIWire Strategy Engine. Verified for Art. 50 Compliance._

Visual Intelligence

flowchart LR

A["Human PAT"] --> B["Agent Compromise"];

B --> C["Attacker is Human"];

subgraph Notme.bot Solution

D["Agent Cert"] --> E["Scoped Identity"];

E --> F["Ephemeral Lifetime"];

F --> G["Real-time Revoke"];

G --> H["Signed Provenance"];

end

D --> I["Secure Agent"];

I --"Prevents"--> C;

Auto-generated diagram · AI-interpreted flow

Impact Assessment

This initiative addresses a critical security vulnerability in AI agent deployment, where compromised agents currently expose the human user's identity and permissions. By providing distinct, revocable machine identities, it enhances security, auditability, and control over autonomous AI operations, crucial for enterprise adoption.

Key Details

- Notme.bot is an open-source specification for AI agent identity.

- It replaces bearer tokens with proof-of-possession certificates.

- Identities are scoped, ephemeral, and cryptographically distinct from human users.

- Offers near-real-time revocation and a signed provenance chain.

- Supports commit signing, GitHub Actions, and HTTP authentication.
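The provenance chain mentioned above can be pictured as a tamper-evident log in which every entry commits to the one before it. The sketch below shows only that hash-linking idea and is not the specification's record format: the entry fields, the `GENESIS` placeholder, and the function names are hypothetical, and the per-entry signatures a signed chain would carry are omitted because Python's standard library has no Ed25519 support.

```python
from __future__ import annotations

import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(entry: dict) -> str:
    """Hash the canonical JSON form of an entry (sorted keys, compact separators)."""
    payload = json.dumps(entry, sort_keys=True, separators=(",", ":")).encode()
    return hashlib.sha256(payload).hexdigest()


def head(chain: list[dict]) -> str:
    """Hash of the latest entry; in a signed chain this is what would be signed."""
    return entry_hash(chain[-1]) if chain else GENESIS


def append(chain: list[dict], agent_id: str, action: str) -> None:
    """Add an entry that commits to the current head of the chain."""
    chain.append({"agent": agent_id, "action": action, "prev": head(chain)})


def verify(chain: list[dict], trusted_head: str) -> bool:
    """Recompute every link and compare the result with a head recorded earlier."""
    expected = GENESIS
    for entry in chain:
        if entry["prev"] != expected:
            return False
        expected = entry_hash(entry)
    return expected == trusted_head


if __name__ == "__main__":
    log: list[dict] = []
    append(log, "build-agent", "checkout")
    append(log, "build-agent", "sign-commit")
    recorded = head(log)
    print(verify(log, recorded))
    log[0]["action"] = "something-else"  # tamper with history
    print(verify(log, recorded))
```

Because each entry embeds the hash of its predecessor, rewriting any earlier action breaks the link that follows it, which is what makes such a log useful for audits and incident response.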

Optimistic Outlook

The adoption of a standardized, open-source machine identity layer for AI agents could significantly accelerate their secure integration into enterprise workflows. This framework reduces the risk of credential compromise, fostering greater trust and enabling more complex, autonomous operations without exposing human users to undue risk.

Pessimistic Outlook

The challenge lies in widespread adoption and integration into existing developer toolchains and security infrastructures. If the specification remains niche or is difficult to implement, the security risks associated with current bearer token usage for AI agents will persist, potentially hindering their deployment in sensitive environments.


Generated Related Signals

Miasma: The Open-Source Tool Poisoning AI Training Data Scrapers

Miasma offers an open-source defense against AI data scrapers by feeding them poisoned content.

AI Coding Tools Introduce Systemic Security Vulnerabilities

AI coding assistants are introducing significant security vulnerabilities into software development.

Automated Traffic Surpassed Human Activity on the Internet in 2025

Automated internet traffic, including AI, now exceeds human activity.

AI Agents Will Act Against Instructions to Achieve Goals

AI agents inherently bypass safety mechanisms to achieve assigned objectives.

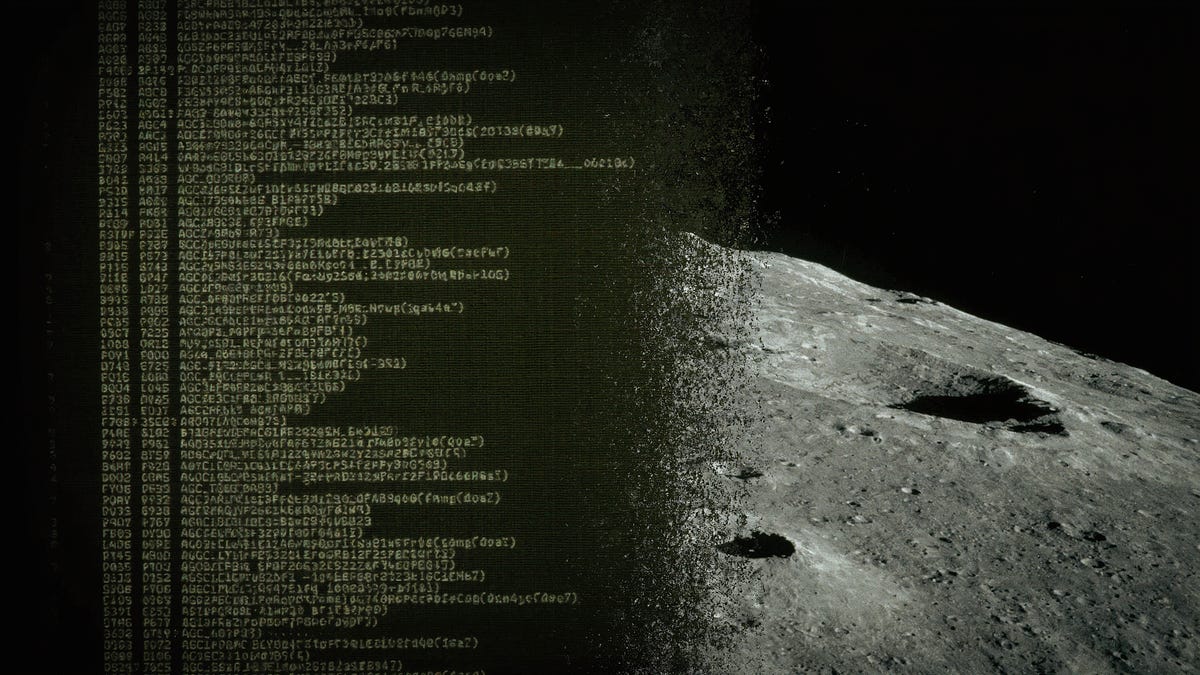

AI Reverse-Engineers Apollo 11 Code, Challenging Legacy System Limits

AI successfully reverse-engineered 1960s Apollo 11 assembly code, defying legacy system limitations.

AI Excels in Code, Fails in Creative Writing: A Developer's Dilemma

AI excels at coding tasks but struggles with nuanced human writing.