AI Functions: Executing LLM-Generated Code at Runtime

Sonic Intelligence

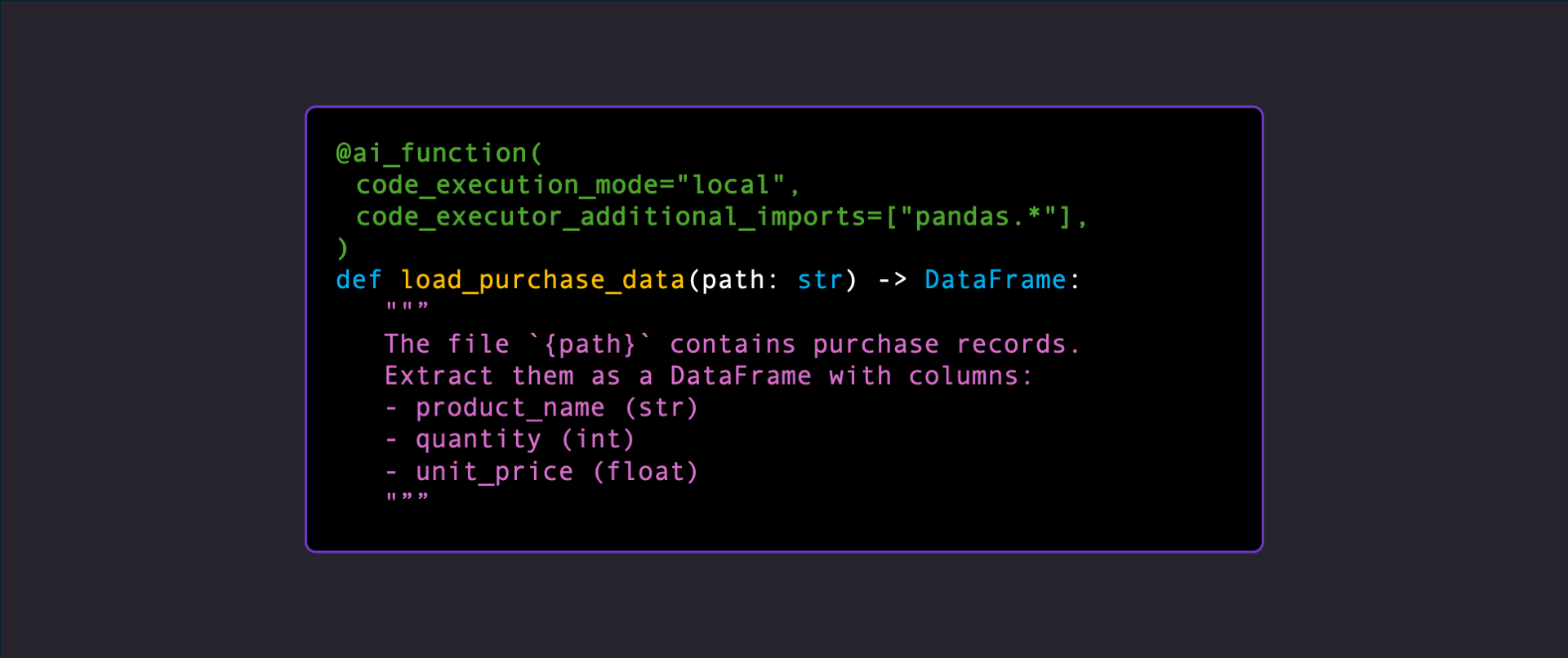

AI Functions execute LLM-generated code at runtime with continuous verification, marking a shift towards AI-driven runtime software development.

Explain Like I'm Five

"Imagine your computer can write its own code and check if it works every time it runs, instead of just once before. That's like AI Functions!"

Deep Intelligence Analysis

The key innovation lies in the shift from generating static code to producing code that runs live within the application. This allows for real-time adaptation and correction, as the system can automatically retry execution with feedback from failed verifications. Furthermore, the use of native Python objects instead of serialized text streamlines integration and reduces the overhead of parsing and data conversion.
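The retry-with-feedback loop described above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the AI Functions or Strands Agents SDK API: `run_with_verification`, `fake_generate`, `check_sorted`, and the convention that generated code defines a function named `solve` are all hypothetical, and a stub stands in for the LLM call.

```python
from typing import Any, Callable, Optional


def run_with_verification(
    generate: Callable[[Optional[str]], str],
    post_condition: Callable[[Any], None],
    max_attempts: int = 3,
) -> Any:
    """Run generated code, verify the result, and retry with feedback on failure."""
    feedback: Optional[str] = None
    for attempt in range(1, max_attempts + 1):
        # Hypothetical: `generate` stands in for an LLM call that returns source code.
        source = generate(feedback)
        namespace: dict = {}
        try:
            # WARNING: exec() on untrusted code is unsafe; a real system needs sandboxing.
            exec(source, namespace)
            result = namespace["solve"]()  # assumed convention: code defines solve()
            post_condition(result)         # raises if verification fails
            return result                  # a native Python object, not serialized text
        except Exception as exc:
            # The failure message becomes the feedback for the next attempt.
            feedback = f"attempt {attempt} failed: {exc!r}"
    raise RuntimeError(
        f"no verified result after {max_attempts} attempts; last feedback: {feedback}"
    )


def fake_generate(feedback: Optional[str]) -> str:
    """Stub generator: returns wrong code first, corrected code once it gets feedback."""
    if feedback is None:
        return "def solve():\n    return [3, 1, 2]\n"
    return "def solve():\n    return sorted([3, 1, 2])\n"


def check_sorted(result: Any) -> None:
    """Post-condition: the result must be a list in ascending order."""
    if not isinstance(result, list):
        raise ValueError("expected a list")
    if result != sorted(result):
        raise ValueError("list is not sorted")


if __name__ == "__main__":
    print(run_with_verification(fake_generate, check_sorted))
```

In this sketch the first attempt fails the post-condition, the error text is passed back to the generator, and the second attempt passes. The `exec` call is exactly where the security concerns discussed in this analysis arise.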

However, this approach also introduces new challenges. LLM-generated code that runs live can carry vulnerabilities or errors directly into a running application, so robust verification mechanisms and careful monitoring are essential. Runtime code generation and per-call verification also add computational cost, which may affect performance and scalability.

Despite these challenges, AI Functions could change how software is built. Giving AI a more active role in the runtime environment may enable more automation and lead to applications that are more robust, adaptable, and self-improving. The approach is also consistent with the EU AI Act's emphasis on transparency and risk management: continuous monitoring and feedback loops, as implemented in AI Functions, can contribute to more reliable and trustworthy AI-driven software. [Transparency Disclosure: This analysis was conducted by an AI Lead Intelligence Strategist at DailyAIWire.news]

Impact Assessment

This approach allows for more dynamic and reliable AI-driven applications. By integrating AI directly into the runtime, software can adapt and correct itself continuously, reducing the need for human intervention.

Key Details

- AI Functions execute LLM-generated code at runtime, not just during development.

- The system returns native Python objects instead of serialized text.

- Verification occurs through post-conditions executed on every function call.

- Strands Labs developed AI Functions on the Strands Agents SDK.
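Two of the details above, post-conditions that run on every call and native Python objects as return values, can be illustrated with a small decorator. This is a hedged sketch only: `ensure`, `Invoice`, `total_is_non_negative`, and `build_invoice` are hypothetical names invented for illustration and are not taken from the Strands Agents SDK. The function body stands in for code an LLM would produce at runtime.

```python
import functools
from dataclasses import dataclass
from typing import Any, Callable, List


@dataclass
class Invoice:
    """A native Python object returned directly, with no text parsing step."""
    customer: str
    total: float


def ensure(*post_conditions: Callable[[Any], None]) -> Callable:
    """Hypothetical decorator: run every post-condition on every call's result."""
    def decorator(func: Callable) -> Callable:
        @functools.wraps(func)
        def wrapper(*args: Any, **kwargs: Any) -> Any:
            result = func(*args, **kwargs)
            for check in post_conditions:
                check(result)  # raises if the result violates the condition
            return result
        return wrapper
    return decorator


def total_is_non_negative(invoice: Invoice) -> None:
    """Post-condition: an invoice total may not be negative."""
    if invoice.total < 0:
        raise ValueError("total must be non-negative")


@ensure(total_is_non_negative)
def build_invoice(customer: str, amounts: List[float]) -> Invoice:
    # Stand-in for a body that would be generated at runtime.
    return Invoice(customer=customer, total=sum(amounts))
```

Because the check runs inside the wrapper, a caller never receives an unverified `Invoice`: either the post-condition passes on that call or an exception is raised.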

Optimistic Outlook

AI Functions could lead to more robust and self-improving software systems. Continuous verification and runtime execution can unlock new levels of automation and efficiency in software development.

Pessimistic Outlook

The reliance on LLMs for runtime code generation introduces potential security risks and unpredictability. Ensuring the reliability and safety of LLM-generated code requires robust verification mechanisms and careful monitoring.
