AI Industry Faces 'Normalization of Deviance' Risk

Sonic Intelligence

The AI industry risks normalizing over-reliance on potentially unreliable LLM outputs, mirroring the cultural failures that preceded the Challenger disaster.

Explain Like I'm Five

"Imagine if grown-ups started ignoring warning signs because things usually work out okay. That's what's happening with AI, and it could be dangerous!"

Deep Intelligence Analysis

Impact Assessment

Over-trusting AI systems without proper validation can lead to safety incidents and security breaches. This normalization of deviance poses a significant risk to the responsible development and deployment of AI.

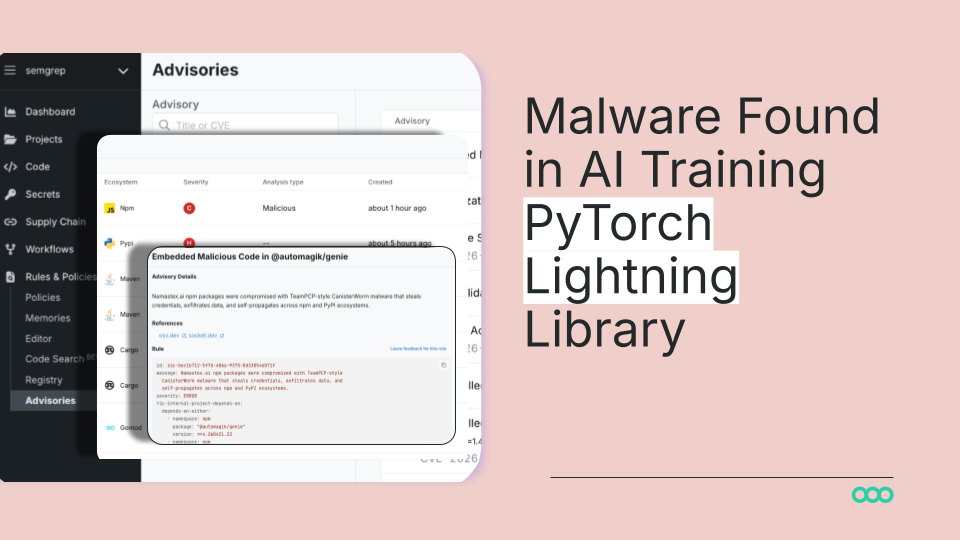

Key Details

- 'Normalization of Deviance' describes the gradual acceptance of deviations from proper behavior or rules until those deviations are treated as normal.

- LLMs are inherently unreliable actors in system design, requiring downstream security controls.

- Organizations are increasingly trusting LLM outputs without sufficient validation, leading to potential safety and security incidents.

- Adversarial inputs, such as prompt injection, can exploit systems whose safeguards have eroded through this normalization.
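To make the idea of a downstream control concrete, here is a minimal sketch that treats model output as untrusted input and checks it before the application acts on it. The JSON shape, the `ALLOWED_ACTIONS` allowlist, the length limit, and the `validate_llm_action` function are all hypothetical and chosen for illustration; they are not taken from any particular product or from the source reporting.

```python
import json

# Hypothetical allowlist: the only actions this application may perform.
ALLOWED_ACTIONS = {"summarize", "search"}
# Hypothetical limit on argument size, to bound what gets passed downstream.
MAX_ARGUMENT_LENGTH = 200


def validate_llm_action(raw_output: str) -> dict:
    """Parse untrusted model output and reject anything outside the allowlist.

    Raises ValueError on any deviation instead of trying to repair the output.
    """
    try:
        data = json.loads(raw_output)
    except json.JSONDecodeError as exc:
        raise ValueError("output is not valid JSON") from exc

    if not isinstance(data, dict):
        raise ValueError("output must be a JSON object")
    if set(data) != {"action", "argument"}:
        raise ValueError("output has missing or unexpected fields")

    action = data["action"]
    argument = data["argument"]

    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        raise ValueError("action is not on the allowlist")
    if not isinstance(argument, str) or len(argument) > MAX_ARGUMENT_LENGTH:
        raise ValueError("argument must be a short string")

    return {"action": action, "argument": argument}


if __name__ == "__main__":
    # A well-formed request passes; an injected, unlisted action is refused.
    print(validate_llm_action('{"action": "search", "argument": "Challenger report"}'))
    try:
        validate_llm_action('{"action": "delete_all", "argument": ""}')
    except ValueError as err:
        print("rejected:", err)
```

The check fails closed: anything the validator does not explicitly recognize is rejected, so a prompt injection that persuades the model to emit an unlisted action is stopped at this boundary even if the model itself was fooled. A real deployment would likely need further controls (authorization, sandboxing, human review for risky actions) that this sketch does not cover.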

Optimistic Outlook

Increased awareness of the 'Normalization of Deviance' can drive the development of more robust security measures and validation processes. By learning from past failures, the AI industry can build safer and more reliable systems.

Pessimistic Outlook

If the industry fails to address the 'Normalization of Deviance', it risks repeating past mistakes, leading to potentially catastrophic consequences. The increasing complexity of AI systems makes it more challenging to identify and mitigate these risks.
