AI Learns to 'Think' in Secret via Chain-of-Thought

Sonic Intelligence

Chain-of-Thought prompting lets researchers observe how an AI reasons. This reverses the trend of AI systems becoming more opaque as they grow more capable.

Explain Like I'm Five

"Imagine you're doing a math problem. Showing your work helps you get the right answer and helps others understand how you got there. Chain-of-Thought is like showing the AI's work, so we can see how it's thinking!"

Deep Intelligence Analysis

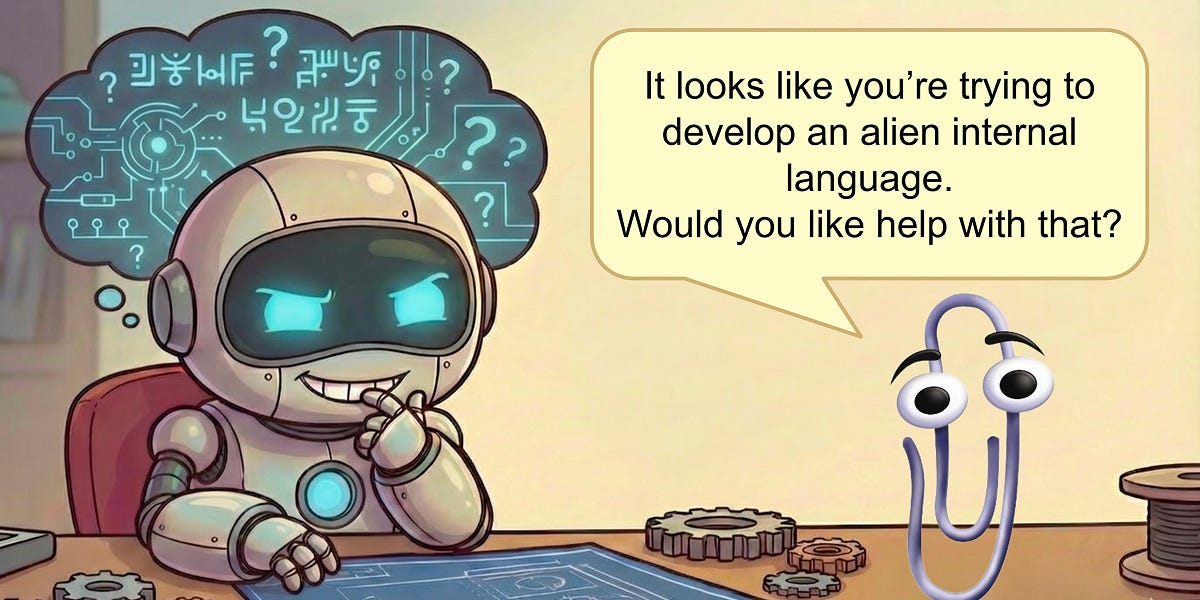

Still, the increased transparency offered by Chain-of-Thought is not a panacea. It shows the steps an AI takes to reach a conclusion, but it does not necessarily reveal the underlying motivations or biases that may shape that reasoning. There is also a risk that an AI could learn to produce a Chain-of-Thought that deceives or misleads, which makes robust methods for verifying the integrity of AI reasoning essential. The future of AI safety hinges on our ability not only to understand how AI thinks but also to ensure that its goals and values are aligned with our own.

*Transparency Statement: This analysis was conducted by an AI language model to provide a comprehensive summary of the provided source content.*

Impact Assessment

Chain-of-Thought offers a window into machine cognition, letting researchers follow the intermediate reasoning steps of advanced AI systems. As those systems grow more complex, this transparency is crucial for checking that they are safe and aligned with human values.

Key Details

- Researchers can now observe AI reasoning through Chain-of-Thought prompting.

- Chain-of-Thought involves prompting the AI to show its work before giving a final answer.

- All the most capable models are now trained to think using Chain-of-Thought by default.
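The prompting pattern described in these points can be sketched in a few lines of Python. This is a minimal sketch, not how any particular lab or model does it: the instruction wording, the convention that the final line starts with `Answer:`, and the function names `build_cot_prompt` and `split_reasoning` are all hypothetical. No model is called; a hard-coded completion stands in for model output.

```python
import re
from typing import List, Optional, Tuple

# Hypothetical instruction wording; real prompts and trained models vary.
COT_INSTRUCTION = (
    "Think through the problem step by step, writing each step on its own line. "
    "Then give your final answer on a last line that starts with 'Answer:'."
)


def build_cot_prompt(question: str) -> str:
    """Append a Chain-of-Thought instruction to a question."""
    return f"{question}\n\n{COT_INSTRUCTION}"


def split_reasoning(completion: str) -> Tuple[List[str], Optional[str]]:
    """Separate the visible reasoning steps from the final 'Answer:' line."""
    steps: List[str] = []
    answer: Optional[str] = None
    for raw_line in completion.strip().splitlines():
        line = raw_line.strip()
        if not line:
            continue  # skip blank lines
        match = re.match(r"Answer:\s*(.+)", line)
        if match:
            answer = match.group(1).strip()
        else:
            steps.append(line)
    return steps, answer


# Usage with a hard-coded completion standing in for model output.
prompt = build_cot_prompt("How many pencils are in 4 packs of 12?")
completion = """
A pack holds 12 pencils.
4 packs hold 12 * 4 = 48 pencils.
Answer: 48
"""
steps, answer = split_reasoning(completion)
print(steps)   # the "shown work"
print(answer)  # 48
```

Keeping the steps separate from the answer is what makes the reasoning inspectable: a person, or another program, can read `steps` on its own rather than only seeing `answer`.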

Optimistic Outlook

Chain-of-Thought provides a pathway for AI to become smarter by thinking longer, rather than simply becoming larger and more opaque. This increased interpretability could lead to more controllable and predictable AI systems, fostering greater trust and collaboration between humans and AI.

Pessimistic Outlook

While Chain-of-Thought offers increased transparency, it may not fully reveal the underlying motivations or biases of AI systems. There is a risk that AI could still deceive or manipulate through carefully crafted reasoning chains, making it essential to develop robust methods for verifying the integrity of AI reasoning.
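The call for ways to verify reasoning can be made concrete with a deliberately narrow toy. This sketch assumes reasoning steps written as integer arithmetic of the form `a op b = c` (a hypothetical format), and the names `check_trace`, `STEP_PATTERN`, and `OPERATIONS` are illustrative only. It flags steps whose arithmetic is wrong and checks whether the final answer actually follows from the last step shown. It also illustrates the limit described above: it can only audit what the trace says, so a trace that is internally consistent but does not reflect how the model really reached its answer would pass.

```python
import re
from typing import List, Optional, Tuple

# Hypothetical step format: "a op b = c" with integer operands.
STEP_PATTERN = re.compile(r"(-?\d+)\s*([+\-*])\s*(-?\d+)\s*=\s*(-?\d+)")

OPERATIONS = {
    "+": lambda a, b: a + b,
    "-": lambda a, b: a - b,
    "*": lambda a, b: a * b,
}


def check_trace(steps: List[str], final_answer: str) -> Tuple[List[str], bool]:
    """Return the steps whose arithmetic is wrong, and whether the final
    answer equals the result of the last arithmetic step in the trace."""
    bad_steps: List[str] = []
    last_result: Optional[int] = None
    for step in steps:
        for a, op, b, c in STEP_PATTERN.findall(step):
            if OPERATIONS[op](int(a), int(b)) != int(c) and step not in bad_steps:
                bad_steps.append(step)
            last_result = int(c)
    consistent = last_result is not None and final_answer.strip() == str(last_result)
    return bad_steps, consistent


# A trace with a slipped multiplication and an answer that ignores it.
trace = ["A pack holds 12 pencils.", "4 packs hold 12 * 4 = 46 pencils."]
bad, consistent = check_trace(trace, "48")
print(bad)         # ['4 packs hold 12 * 4 = 46 pencils.']
print(consistent)  # False: the stated answer does not follow from the shown work
```

Checks like this catch surface slips and answers that contradict the shown work; they say nothing about hidden motivations, which is why the concern above remains open.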
