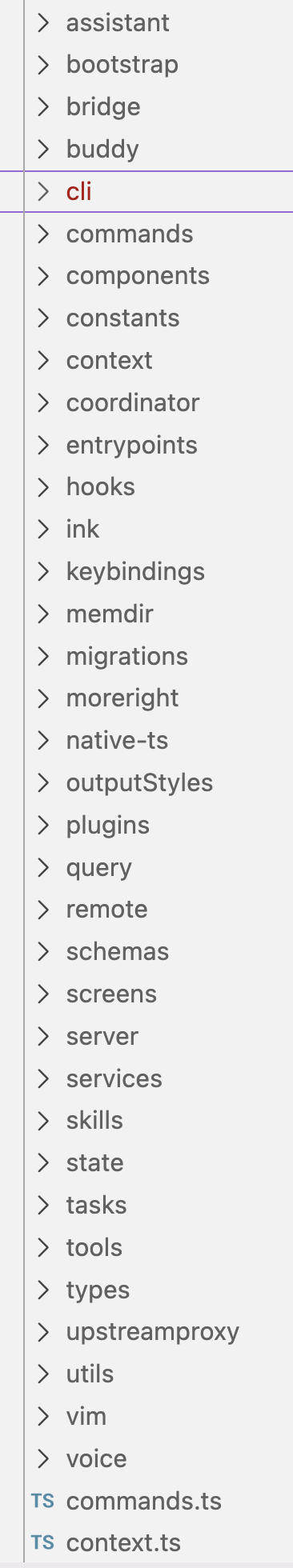

Claude Code Internals Leak Reveals Advanced Tooling Architecture

Sonic Intelligence

Leaked Claude Code CLI source reveals advanced architecture, custom UI, and tool execution.

Explain Like I'm Five

"Imagine a secret recipe for a very special robot helper got out. This recipe shows how the robot thinks, how it talks to other tools, and how it decides what it's allowed to do. It's like seeing all the hidden gears and wires that make a super-smart toy work, which is cool for learning but also a bit risky if bad guys see it."

Deep Intelligence Analysis

A key technical detail is the `StreamingToolExecutor`, which starts executing tool calls while the response is still streaming from the API rather than waiting for the full response. Tools marked `isConcurrencySafe` can run in parallel, which cuts latency and makes the agent feel more responsive. The leak also shows a three-tiered permission system that combines rules-based logic, an ML classifier that queries the Claude API itself for a risk assessment, and interactive user prompts. Cheap rule checks come first, and a request escalates to the classifier and then to the user only when it remains unresolved, a layered approach to controlling an agent that can run arbitrary tools.
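The streaming idea can be illustrated with a minimal TypeScript sketch. This is not the leaked implementation: only the `isConcurrencySafe` flag comes from the reporting, while `Tool`, `ToolCall`, `runSafely`, `executeStreaming`, and the rule that an unsafe tool waits for in-flight work are assumptions made for illustration.

```typescript
// Hypothetical shapes; only `isConcurrencySafe` is named in the reporting.
interface Tool {
  name: string;
  isConcurrencySafe: boolean;
  run(input: string): Promise<string>;
}

interface ToolCall {
  tool: Tool;
  input: string;
}

// Turn a failure into a result string so one bad tool cannot reject the batch.
function runSafely(call: ToolCall): Promise<string> {
  return call.tool
    .run(call.input)
    .catch((err: unknown) => `error: ${String(err)}`);
}

// Start each tool call as soon as it arrives on the stream.
// Concurrency-safe tools overlap; an unsafe tool first waits for everything
// in flight and then runs alone (an assumed policy, not a confirmed one).
async function executeStreaming(
  calls: AsyncIterable<ToolCall>,
): Promise<string[]> {
  const results: Promise<string>[] = [];
  let inFlight: Promise<string>[] = [];

  for await (const call of calls) {
    if (call.tool.isConcurrencySafe) {
      const pending = runSafely(call); // starts now, not awaited yet
      inFlight.push(pending);
      results.push(pending);
    } else {
      await Promise.all(inFlight); // drain parallel work first
      inFlight = [];
      const pending = runSafely(call);
      results.push(pending);
      await pending; // nothing else runs alongside an unsafe tool
    }
  }

  // Results come back in arrival order, regardless of finish order.
  return Promise.all(results);
}
```

In this sketch the stream is not read further while an unsafe tool runs, which keeps the ordering simple at the cost of some idle time.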

The implications of this leak are multifaceted. From a competitive standpoint, it gives rivals a detailed blueprint of Anthropic's internal tooling design, potentially accelerating their own development efforts. For the broader AI community, it serves as a valuable, albeit illicit, educational resource, showcasing patterns in AI-tool orchestration, UI development for agents, and permissioning. The security ramifications are substantial, however, because exposed internal code could reveal vulnerabilities or design weaknesses that attackers could exploit. The incident underscores the tension between proprietary innovation and the difficulty of protecting digital assets in a rapidly evolving AI landscape.

Visual Intelligence

    flowchart LR
        A["User Input/Tool Call"] --> B["Tier 1: Rules-Based Fast Path"]
        B -- "Allow" --> D["Execute Tool"]
        B -- "Deny" --> E["Block Action"]
        B -- "Ambiguous" --> C["Tier 2: ML Classifier (Claude API)"]
        C -- "Dangerous" --> E
        C -- "Uncertain" --> F["Tier 3: User Prompt"]
        F -- "Approve" --> D
        F -- "Deny" --> E

Auto-generated diagram · AI-interpreted flow
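The flow in the diagram can be sketched in TypeScript as a single decision function. All names here (`PermissionTiers`, `decide`, the verdict types) are hypothetical, and the "safe" classifier outcome that executes without prompting is an assumption, since the diagram shows only the "Dangerous" and "Uncertain" branches.

```typescript
type RuleVerdict = "allow" | "deny" | "ambiguous";
type RiskVerdict = "safe" | "dangerous" | "uncertain";
type Decision = "execute" | "block";

// Each tier is injected so the sketch makes no claim about how the
// real rules, classifier call, or prompt UI are implemented.
interface PermissionTiers {
  rules(command: string): RuleVerdict; // Tier 1: local and fast
  classify(command: string): Promise<RiskVerdict>; // Tier 2: model call
  askUser(command: string): Promise<boolean>; // Tier 3: interactive
}

async function decide(
  command: string,
  tiers: PermissionTiers,
): Promise<Decision> {
  // Tier 1: settle the clear cases without any network call.
  const rule = tiers.rules(command);
  if (rule === "allow") return "execute";
  if (rule === "deny") return "block";

  // Tier 2: only ambiguous requests pay for a classifier round trip.
  const risk = await tiers.classify(command);
  if (risk === "dangerous") return "block";
  if (risk === "safe") return "execute"; // assumed branch, not in the diagram

  // Tier 3: the user is interrupted only when both earlier tiers are unsure.
  return (await tiers.askUser(command)) ? "execute" : "block";
}
```

Ordering the tiers from cheapest to most intrusive means most requests never reach the classifier, and fewer still reach the user.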

Impact Assessment

The leak of Claude Code's internal source offers a rare look at the engineering behind a leading AI coding tool. It reveals architectural patterns for tool execution, terminal UI rendering, and multi-layered permissioning that are useful both for competitive analysis and for the broader AI development community.

Key Details

- The Claude Code CLI source, comprising ~512,000 lines of TypeScript across 1,884 files, leaked on March 31, 2026.

- The application runs on the Bun runtime and uses a custom React-based terminal UI engine.

- A `StreamingToolExecutor` starts tool calls as they arrive in the API stream, so tools marked `isConcurrencySafe` can run in parallel.

- The permission system has three tiers: a rules-based fast path, an ML classifier (which calls the Claude API), and user prompts.

- Large tool results are persisted to disk to prevent memory bloat in long-running sessions.
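The last point, spilling large tool results to disk, can be sketched as follows. The reporting does not describe the actual mechanism, so the threshold, the `StoredResult` shape, and the function names `storeResult` and `loadResult` are all hypothetical; the sketch uses only standard Node.js file APIs, which Bun also provides.

```typescript
import { mkdtempSync, readFileSync, writeFileSync } from "node:fs";
import { tmpdir } from "node:os";
import { join } from "node:path";

// A result is either kept in memory or replaced by a pointer to a file.
type StoredResult =
  | { kind: "inline"; text: string }
  | { kind: "onDisk"; path: string; bytes: number; preview: string };

const INLINE_LIMIT_BYTES = 8 * 1024; // hypothetical threshold
const spillDir = mkdtempSync(join(tmpdir(), "tool-results-"));
let nextId = 0;

// Keep small results inline; write large ones to disk and retain only
// a short preview, so a long session does not hold every payload in memory.
function storeResult(text: string, limit = INLINE_LIMIT_BYTES): StoredResult {
  const bytes = Buffer.byteLength(text, "utf8");
  if (bytes <= limit) {
    return { kind: "inline", text };
  }
  const path = join(spillDir, `result-${nextId++}.txt`);
  writeFileSync(path, text, "utf8");
  return { kind: "onDisk", path, bytes, preview: text.slice(0, 200) };
}

// Read the full text back only when it is actually needed.
function loadResult(result: StoredResult): string {
  return result.kind === "inline"
    ? result.text
    : readFileSync(result.path, "utf8");
}
```

The session then holds at most a 200-character preview per large result, and the full text is reloaded on demand.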

Optimistic Outlook

This leak, while a security incident, offers a unique educational opportunity for developers to study best practices in AI tool integration and system design. The detailed insights into streaming tool execution and robust permission systems can inspire more efficient and secure development of future AI applications, potentially accelerating innovation across the industry.

Pessimistic Outlook

The unauthorized disclosure of such extensive source code raises significant security concerns, potentially exposing vulnerabilities that could be exploited by malicious actors. It also highlights the challenges of protecting proprietary AI intellectual property, which could lead to increased caution and reduced transparency from other major AI developers.
