Evaluating AI Agents: A Two-Layer Approach to Stochastic Outputs

Sonic Intelligence

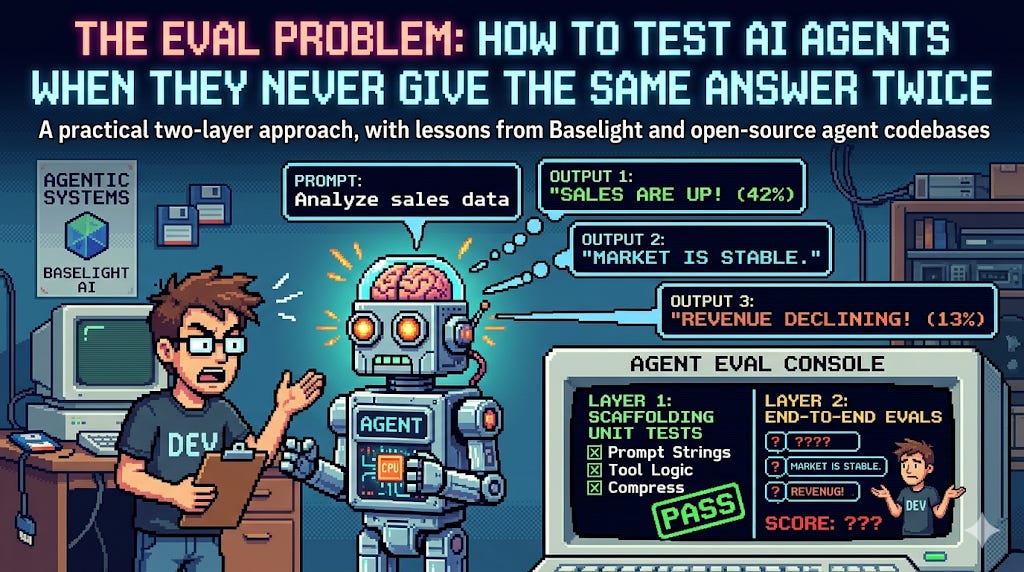

Testing AI agents with stochastic outputs requires a two-layer evaluation strategy.

Explain Like I'm Five

"Imagine you have a robot friend that helps you with homework, but sometimes it gives slightly different answers even for the same question. How do you know if your robot friend is doing a good job if its answers are never exactly the same? This article talks about smart ways to check if these robot friends are working correctly, even when they don't always say the exact same thing twice."

Deep Intelligence Analysis

Companies such as Baselight AI have run into this problem directly. While developing a SQL error assistant, the team reported 'flaky tests': the expected output did not consistently match the generated query, because more than one query could be a valid answer to the same ambiguous request. The problem therefore goes beyond non-determinism. It is also the difficulty of establishing clear, unambiguous success criteria for complex tasks. The industry is still working out how to define 'working as intended' when a system's outputs vary, and the difficulty grows with the complexity of the agent's task and the breadth of its operational context. This calls for a multi-layered approach that can accommodate variability while still ensuring functional correctness and user satisfaction.
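To make the flaky-test failure mode concrete, here is a minimal sketch of why an exact-match assertion on generated SQL is brittle, and of one common alternative: comparing what the queries return instead of how they are written. Everything in it is an assumption for illustration (the `orders` table, the two queries, and the helper names `run_query` and `same_result`); it is not Baselight AI's actual test setup.

```python
import sqlite3

def run_query(conn, sql):
    """Execute sql and return its rows in a stable, order-insensitive form."""
    # Sorting by repr avoids comparison errors if a row contains NULL (None).
    return sorted(conn.execute(sql).fetchall(), key=repr)

def same_result(conn, expected_sql, generated_sql):
    """True when both queries return the same rows on this fixture database."""
    return run_query(conn, expected_sql) == run_query(conn, generated_sql)

# Hypothetical fixture data, used only for this example.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER, amount REAL)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?)",
    [(1, 10.0), (2, 25.5), (3, 7.25)],
)

# Two differently written queries that answer the same question.
expected = "SELECT id FROM orders WHERE amount > 8"
generated = "SELECT o.id FROM orders AS o WHERE NOT (o.amount <= 8)"

exact_match = expected == generated                    # string comparison
equivalent = same_result(conn, expected, generated)    # behavior comparison

print("exact match:", exact_match)
print("same result:", equivalent)
```

Agreement on one fixture database does not prove that two queries are equivalent in general, so a check like this is only as strong as the test data behind it.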

The development of robust, two-layer evaluation frameworks is paramount for the maturation of AI agent technology. Without reliable testing, the deployment of AI agents in critical enterprise applications will remain constrained by concerns over unpredictability and potential regressions. The future success of AI agents hinges on the industry's ability to establish new paradigms for quality assurance that can effectively manage stochastic outputs, define success contextually, and catch regressions proactively. This will not only build user trust but also unlock the full potential of autonomous AI systems across diverse domains, from code generation to complex decision-making.

Visual Intelligence

    flowchart LR
        A["Stochastic AI Output"]
        B["Traditional Testing Fails"]
        C["Define Success Criteria"]
        D["Two-Layer Evaluation"]
        E["Production Deployment"]
        A --> B
        B --> C
        C --> D
        D --> E

Auto-generated diagram · AI-interpreted flow

Impact Assessment

The inherent stochasticity of large language models (LLMs) makes traditional software testing methodologies ineffective for AI agents. Developing robust evaluation frameworks is critical for deploying reliable AI agents in production, directly impacting their trustworthiness and adoption across industries.

Key Details

- Traditional assertion-based testing fails for AI agents due to their stochastic nature.

- The core challenge in AI agent evaluation is defining 'success' for non-deterministic outputs.

- Baselight AI's SQL error assistant faced issues with flaky tests due to output ambiguity.

- The proposed solution involves a two-layer testing approach to manage variability.
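This summary does not spell out what the two layers are, so the following is only one plausible reading, sketched under stated assumptions. Layer one applies deterministic invariants that every single output must satisfy; layer two samples the agent repeatedly and requires a minimum pass rate against a softer success criterion. The function names, the stand-in agents, the `judge` callback, and the 0.8 threshold are all hypothetical.

```python
import random
from typing import Callable, List

def layer_one(output: str) -> bool:
    """Deterministic invariants that must hold for every output (assumed rules)."""
    text = output.strip().lower()
    # Must be a single SELECT statement: no stacked statements after a ';'.
    return text.startswith("select") and ";" not in text.rstrip(";")

def layer_two(outputs: List[str], judge: Callable[[str], bool],
              min_pass_rate: float) -> bool:
    """Statistical check: enough sampled outputs satisfy the success criterion."""
    passed = sum(1 for o in outputs if judge(o))
    return passed / len(outputs) >= min_pass_rate

def evaluate(agent: Callable[[str], str], prompt: str,
             judge: Callable[[str], bool],
             runs: int = 20, min_pass_rate: float = 0.8) -> bool:
    """Sample the agent several times, then apply both layers."""
    outputs = [agent(prompt) for _ in range(runs)]
    if not all(layer_one(o) for o in outputs):
        return False  # a hard invariant was violated at least once
    return layer_two(outputs, judge, min_pass_rate)

# Stand-in for a stochastic agent: same prompt, varying but valid phrasing.
_rng = random.Random(0)
_VARIANTS = [
    "SELECT id FROM orders WHERE amount > 8",
    "select o.id from orders as o where o.amount > 8;",
    "SELECT id FROM orders WHERE NOT (amount <= 8)",
]

def fake_agent(prompt: str) -> str:
    return _rng.choice(_VARIANTS)

def unsafe_agent(prompt: str) -> str:
    return "DROP TABLE orders"

def mentions_orders(output: str) -> bool:
    """Hypothetical success criterion: the query targets the orders table."""
    return "orders" in output.lower()

ok = evaluate(fake_agent, "orders above 8", mentions_orders)
rejected = evaluate(unsafe_agent, "orders above 8", mentions_orders)
print(ok, rejected)
```

Separating the layers keeps hard failures, which should never occur, apart from quality drift, which is tolerated up to a threshold; this lets the test accept varied phrasing without masking regressions.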

Optimistic Outlook

Implementing structured two-layer evaluation frameworks could significantly enhance the reliability and predictability of AI agents. This would accelerate their deployment in critical applications, fostering greater enterprise adoption and enabling more complex, robust AI-driven solutions across various sectors.

Pessimistic Outlook

Without standardized and effective testing protocols, AI agents risk frequent failures and unpredictable behavior in production environments. This could erode user trust, lead to significant operational challenges, and slow down the broader integration of AI agent technology, limiting its transformative potential.
