GIST Enhances Embodied AI Navigation in Complex Environments

Sonic Intelligence

GIST creates semantically annotated navigation maps for embodied AI.

Explain Like I'm Five

"Imagine a robot trying to find a specific toy in a messy room. GIST helps the robot by making a special map that not only shows where things are but also what they are (like 'toy aisle' or 'medicine cabinet'). It then tells the robot how to get there using simple words, just like a person would."

Deep Intelligence Analysis

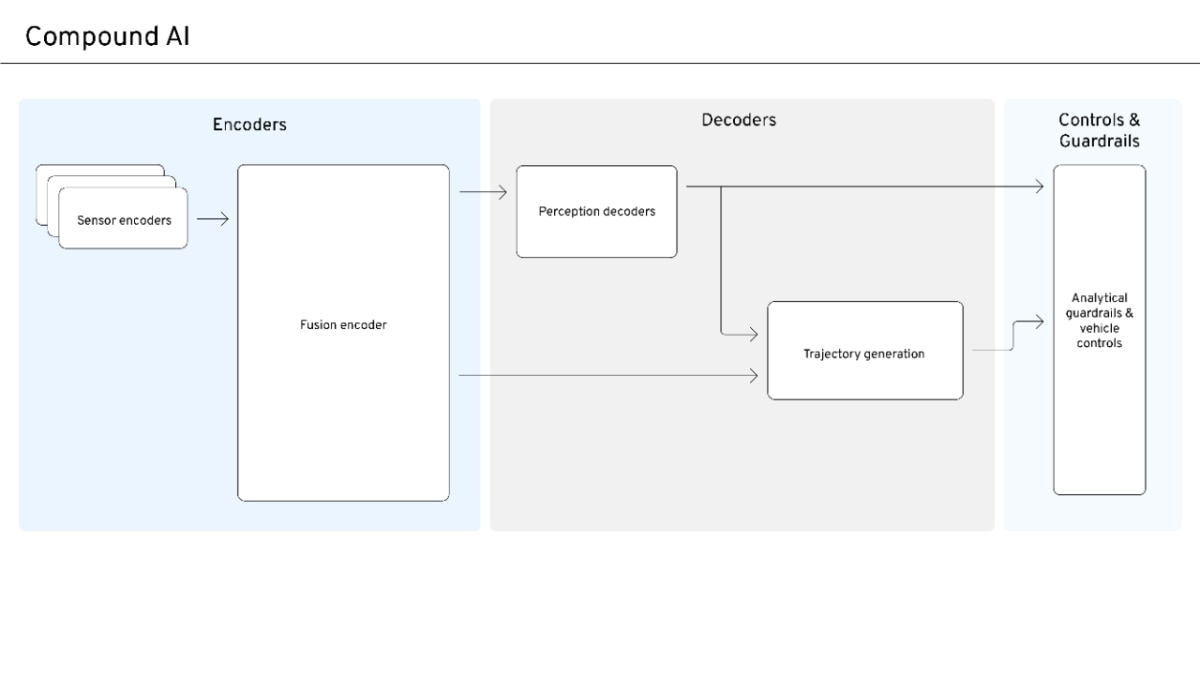

GIST's architecture distills scene data into 2D occupancy maps, extracts topological layouts, and overlays a lightweight semantic layer, addressing the limitations of traditional computer vision and even advanced Vision-Language Models in dense environments. Its performance metrics are notable, including a 1.04-meter top-5 mean translation error for semantic localization and an 80% navigation success rate in a formative evaluation (N=5) using only verbal cues. This structured spatial knowledge underpins critical human-AI interaction tasks, such as intent-driven semantic search and visually-grounded instruction generation, outperforming sequence-based LLM baselines in multi-criteria evaluations.

The implications extend to a wide array of applications where precise, semantically-aware navigation is paramount, from logistics and healthcare to assistive robotics. GIST's ability to synthesize optimal paths into egocentric, landmark-rich natural language routing facilitates more intuitive human oversight and collaboration, aligning with universal design principles. Future advancements will likely focus on scaling the system to more dynamic environments and integrating real-time semantic updates, paving the way for a new generation of highly adaptable and context-aware autonomous systems.

Visual Intelligence

flowchart LR A["Mobile Point Cloud"] --> B["2D Occupancy Map"] B --> C["Topological Layout"] C --> D["Semantic Layer Overlay"] D --> E["Semantically Annotated Topology"] E --> F["Semantic Search"] E --> G["Semantic Localizer"] E --> H["Instruction Generator"]

Auto-generated diagram · AI-interpreted flow

Impact Assessment

This system addresses a critical challenge for embodied AI in complex, dynamic environments, enabling more reliable and human-interpretable navigation. Its ability to generate landmark-rich instructions could significantly improve human-AI collaboration in logistics, healthcare, and retail.

Key Details

- GIST transforms consumer-grade mobile point clouds into semantically annotated navigation topologies.

- The architecture distills scenes into 2D occupancy maps and overlays a lightweight semantic layer.

- Achieves a 1.04 m top-5 mean translation error in one-shot Semantic Localizer tasks.

- Outperforms sequence-based instruction generation baselines in multi-criteria LLM evaluations.

- Demonstrated an 80% navigation success rate relying solely on verbal cues in a formative evaluation (N=5).

Optimistic Outlook

GIST's approach could unlock new levels of autonomy for robots in previously inaccessible or highly dynamic human-centric spaces. The improved spatial grounding and natural language instruction generation promise safer and more efficient human-robot interaction, accelerating adoption in critical sectors.

Pessimistic Outlook

The formative evaluation (N=5) is small, limiting generalizability. The reliance on consumer-grade mobile point clouds might introduce variability, and the system's robustness to novel, highly dynamic changes in environments needs further validation beyond quasi-static settings.

Get the next signal in your inbox.

One concise weekly briefing with direct source links, fast analysis, and no inbox clutter.

More reporting around this signal.

Related coverage selected to keep the thread going without dropping you into another card wall.