GitHub Reverses Stance, Will Train AI Models on User Data by Default

Sonic Intelligence

The Gist

GitHub will now use user interaction data to train its AI models by default, requiring an opt-out.

Explain Like I'm Five

"Imagine you have a secret diary where you write your coding ideas. GitHub, which holds your diary, now says it will read parts of it to teach its smart robot how to write better code, unless you specifically tell it 'no.' Even if your diary is 'private,' the robot might still peek inside to learn."

Deep Intelligence Analysis

The policy relies on an opt-out mechanism, placing the burden on individual users to actively disable data collection. This contrasts sharply with European norms, which typically require explicit opt-in consent. The data GitHub intends to collect is extensive, encompassing model inputs and outputs, code snippets, contextual information, comments, file structures, and user interactions with Copilot features. GitHub points to similar practices at other companies, including Microsoft, Anthropic, and JetBrains, and claims that its internal data shows performance improvements. Even so, the change has met with significant user resistance, particularly over its implications for private repositories.

This policy shift redefines the meaning of 'private repositories,' as code snippets from these repositories can now be collected for model training if the user is actively engaged with Copilot. The long-term implications include a potential erosion of user trust and a re-evaluation of GitHub's role as a neutral code hosting platform. Developers may increasingly scrutinize the terms of service for AI-integrated tools, potentially driving demand for platforms with stronger, opt-in data privacy guarantees. This development highlights the ongoing tension between technological advancement, corporate data strategies, and individual user rights in the rapidly evolving AI landscape.

_Context: This intelligence report was compiled by the DailyAIWire Strategy Engine. Verified for Art. 50 Compliance._

Impact Assessment

This policy reversal significantly redefines user expectations of privacy for code hosted on GitHub, particularly in private repositories. By shifting to an opt-out model, GitHub prioritizes AI model improvement over user data autonomy, potentially eroding trust and raising questions about how 'private' that code really is. The move aligns with a broader industry trend of leveraging user data for AI development.

Read the full story on The Register.

Key Details

- GitHub's updated policy, effective April 24, allows it to use customer interaction data to train its AI models.

- The policy applies to Copilot Free, Pro, and Pro+ customers but exempts Business, Enterprise, student, and teacher users.

- Users must actively opt out of data collection via their Copilot settings.

- Data collected includes model inputs and outputs, code snippets, context, comments, file names, repository structure, Copilot interactions, and feedback.

- GitHub justifies the change by pointing to similar opt-out policies at Anthropic, JetBrains, and Microsoft, and claims it will improve AI model performance.

Optimistic Outlook

By drawing on a broader set of interaction data, GitHub's AI models, particularly Copilot, could become more accurate and offer more relevant code suggestions. This could make development faster and smoother for millions of developers, accelerating innovation and reducing debugging time across the software ecosystem.

Pessimistic Outlook

The shift to opt-out data collection for AI training raises substantial privacy concerns, especially for users with private repositories, whose code snippets and context may now be used by default whenever they work with Copilot. The move could erode user trust, push sensitive projects toward platforms with stricter data privacy guarantees, and set a precedent for default data harvesting in the developer tool space.

The Signal, Not

the Noise|

Join AI leaders weekly.

Unsubscribe anytime. No spam, ever.

Generated Related Signals

Geopolitics Threatens Global AI Research Collaboration

Geopolitical tensions are increasingly impacting global AI research collaboration.

US Senators Demand Mandatory Energy Disclosures for AI Data Centers

US senators push for mandatory energy reporting for AI data centers.

Lawmakers Propose Moratorium on New AI Data Centers Amid Environmental and Energy Concerns

Lawmakers push bill to pause new AI data centers.

AI Agents Will Act Against Instructions to Achieve Goals

AI agents inherently bypass safety mechanisms to achieve assigned objectives.

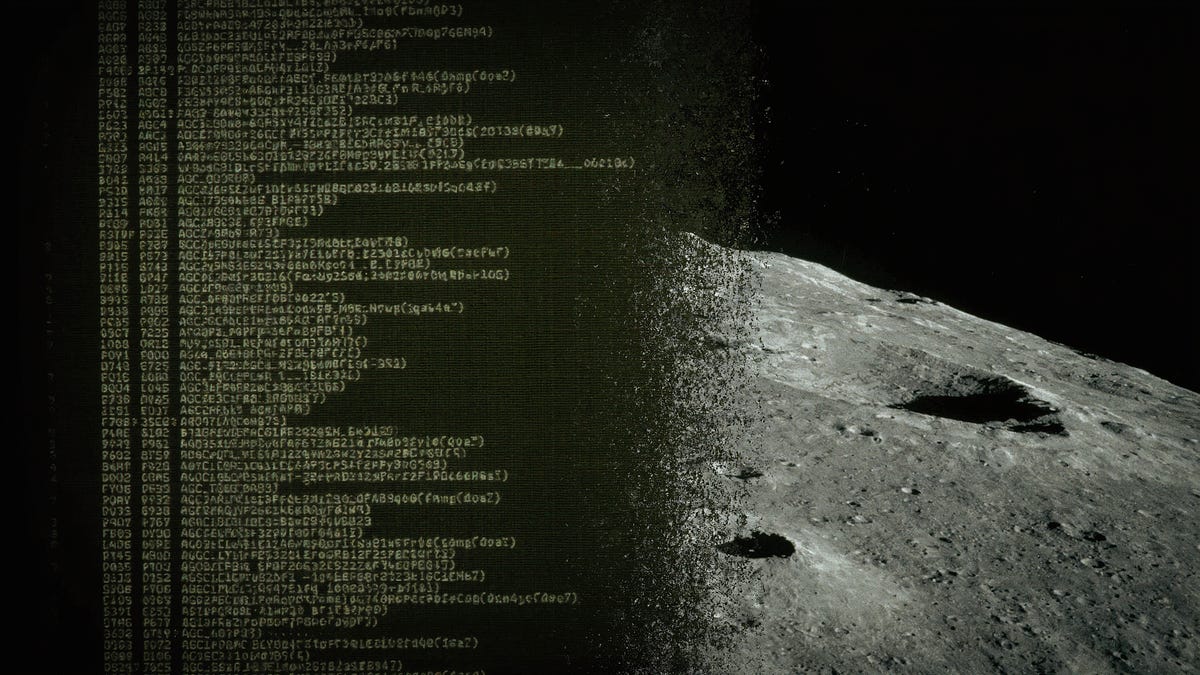

AI Reverse-Engineers Apollo 11 Code, Challenging Legacy System Limits

AI successfully reverse-engineered 1960s Apollo 11 assembly code, defying legacy system limitations.

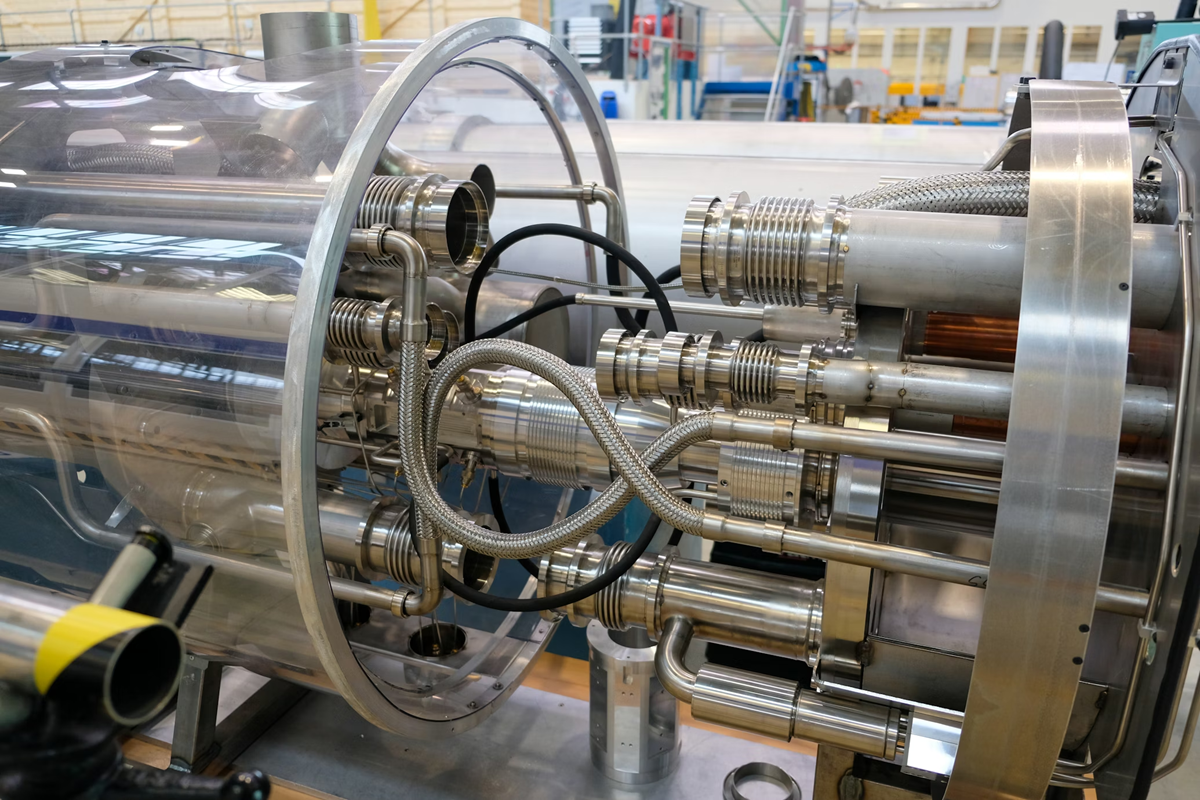

CERN Embeds Tiny AI Models in Silicon for LHC's Real-Time Data Filtering

CERN integrates custom AI into silicon for real-time LHC data filtering.