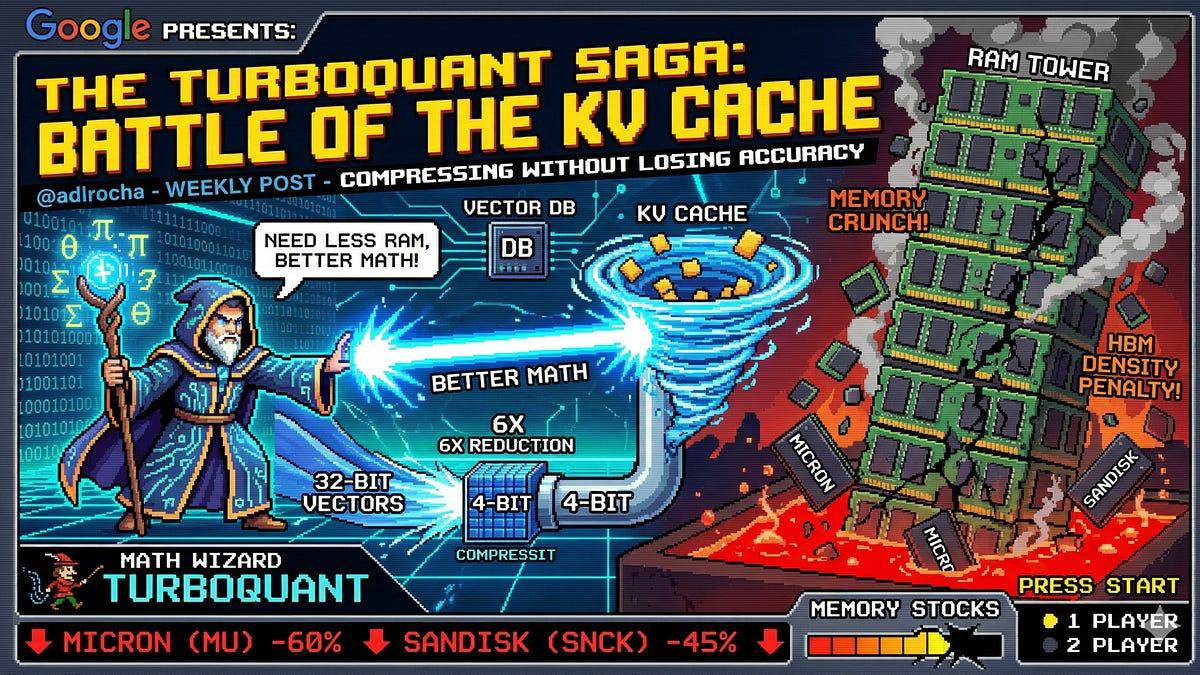

Google's TurboQuant Compresses KV Cache, Redefining LLM Memory Needs

Sonic Intelligence

Google's TurboQuant compresses LLM KV caches, reducing memory demands without accuracy loss.

Explain Like I'm Five

"Imagine your computer brain (AI) needs to remember everything you've said in a long chat. It keeps a special notepad called a "KV cache," but this notepad gets huge and slows things down. Google found a clever way, called TurboQuant, to write much smaller notes on that notepad without forgetting anything important, making the AI faster and need less memory."

Deep Intelligence Analysis

The core problem TurboQuant tackles stems from the autoregressive nature of LLMs, where each new token generation necessitates re-evaluating the entire preceding sequence. The attention mechanism, central to this process, generates query, key, and value vectors for each token. Storing these in the KV cache prevents redundant recomputation but leads to a linear increase in memory usage with sequence length. This challenge is exacerbated by existing hardware limitations, such as HBM density penalties and DRAM supply chain pressures. TurboQuant's innovation lies in finding a more efficient mathematical encoding for these high-dimensional vectors, offering a software-driven solution to a problem traditionally viewed through a hardware lens.

The implications of successful KV cache compression are far-reaching. It could enable the deployment of larger, more complex LLMs on less powerful hardware, democratizing access to advanced AI capabilities and fostering innovation in edge computing and mobile AI. Furthermore, by alleviating memory constraints, TurboQuant could unlock the potential for truly massive context windows, allowing LLMs to process and reason over vast amounts of information in real-time. This could lead to breakthroughs in long-form content generation, complex problem-solving, and highly personalized AI agents, fundamentally reshaping the landscape of AI development and application.

{"metadata": {"ai_detected": true, "model": "Gemini 2.5 Flash", "label": "EU AI Act Art. 50 Compliant"}}

Visual Intelligence

flowchart LR

A[LLM Input Prompt] --> B[Generate Token N]

B --> C[Compute QKV Vectors]

C --> D{KV Cache Full?}

D -- No --> E[Store QKV]

D -- Yes --> F[TurboQuant Compress]

F --> E

E --> G[Generate Token N+1]

G --> C

Auto-generated diagram · AI-interpreted flow

Impact Assessment

The KV cache's memory footprint is a critical bottleneck for scaling LLMs, particularly for long-context applications. TurboQuant's ability to compress this cache without accuracy loss could significantly reduce hardware requirements and operational costs, enabling more powerful and accessible AI models.

Key Details

- TurboQuant is a Google-developed method for compressing the KV cache in transformer models.

- It aims to reduce memory consumption in LLMs, which typically grows with conversation length.

- The KV cache stores key and value vectors for previous tokens to avoid recomputation.

- The attention mechanism computes query, key, and value vectors for each token.

- This approach offers an alternative to increasing hardware memory (RAM) for LLMs.

Optimistic Outlook

TurboQuant could democratize access to larger, more capable LLMs by lowering their memory demands, making advanced AI more feasible for smaller enterprises and edge devices. This innovation could also accelerate research into longer context windows and more complex AI agents, pushing the boundaries of conversational AI.

Pessimistic Outlook

While promising, the widespread adoption and integration of such compression techniques might introduce new complexities in model deployment and fine-tuning. Furthermore, if not universally adopted, it could create a fragmented ecosystem where only certain models benefit from these efficiency gains, potentially widening the gap between resource-rich and resource-constrained AI developers.

Get the next signal in your inbox.

One concise weekly briefing with direct source links, fast analysis, and no inbox clutter.

More reporting around this signal.

Related coverage selected to keep the thread going without dropping you into another card wall.