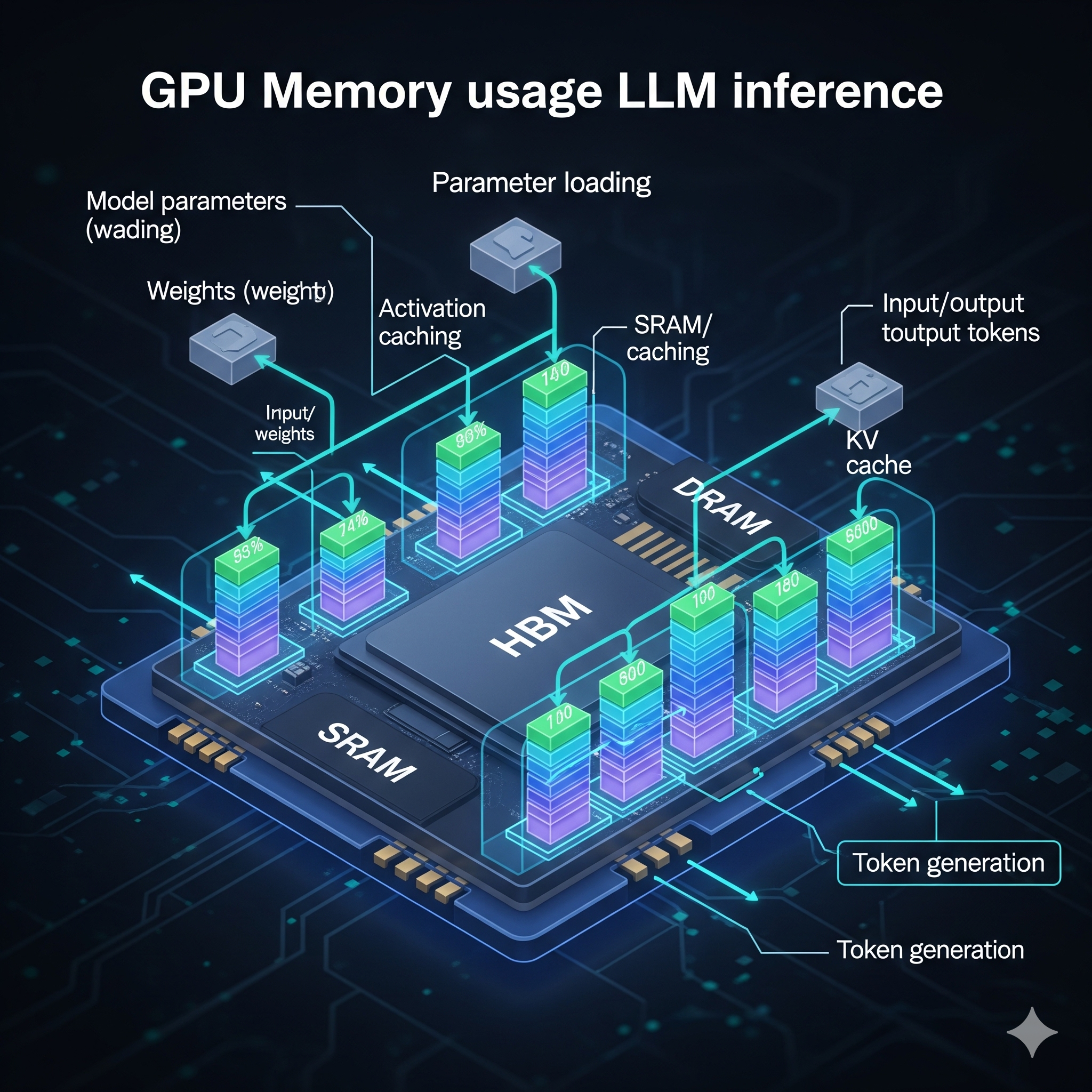

GPU Memory Bottlenecks Dominate LLM Inference Performance

Sonic Intelligence

LLM inference on GPUs is memory-bound, not compute-bound.

Explain Like I'm Five

"Imagine your computer is a super-fast chef (GPU compute) but has a tiny fridge (GPU memory). Even if the chef can cook super fast, if they have to keep running to a slow pantry (CPU RAM) for ingredients, everything slows down. For big AI brains (LLMs), the fridge is often too small, making the chef wait for ingredients, which is the real problem, not how fast they can chop."

Deep Intelligence Analysis

The NVIDIA A100, a common reference point for AI workloads, pairs 80 GB of HBM at 2.0 TB/s with 312 TFLOPS of BF16 compute. A worked matrix multiplication shows the imbalance: the arithmetic needs only 3.5 μs, while moving the operands from HBM takes 134 μs, roughly 38 times longer. The gap widens once data leaves the GPU. NVLink offers 600 GB/s, far more than PCIe Gen4 x16's ~32 GB/s, which is why multi-GPU inference depends on high-speed interconnects within a single node. HBM is therefore the critical working memory, and any spillover to slower tiers carries a substantial performance penalty.
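The compute-versus-transfer comparison above can be approximated with a back-of-the-envelope roofline estimate. The sketch below is a minimal illustration, not the source's exact calculation: the A100 figures come from the text, while the matrix shapes (`m=4`, `k=8192`, `n=16384`) and the helper name `matmul_times` are assumptions, chosen only because they land close to the quoted timings.

```python
# Peak figures quoted in the article for the NVIDIA A100.
PEAK_FLOPS = 312e12       # BF16 FLOP/s
HBM_BANDWIDTH = 2.0e12    # bytes/s
BYTES_PER_BF16 = 2


def matmul_times(m, k, n):
    """Return (compute_seconds, memory_seconds) for an (m x k) @ (k x n) matmul.

    Assumes each operand crosses HBM exactly once and that the hardware
    reaches its peak rates; both are idealizations.
    """
    flops = 2 * m * k * n                                   # one multiply and one add per term
    bytes_moved = BYTES_PER_BF16 * (m * k + k * n + m * n)  # activations, weights, output
    return flops / PEAK_FLOPS, bytes_moved / HBM_BANDWIDTH


# Hypothetical shapes: 4 tokens against an 8192 x 16384 weight matrix.
compute_s, memory_s = matmul_times(m=4, k=8192, n=16384)
ratio = memory_s / compute_s

# FLOPs per byte a kernel must perform before compute, not memory, is the limit.
ridge_point = PEAK_FLOPS / HBM_BANDWIDTH

print(f"compute: {compute_s * 1e6:.1f} us, memory: {memory_s * 1e6:.1f} us")
print(f"memory/compute ratio: {ratio:.0f}x, ridge point: {ridge_point:.0f} FLOP/byte")
```

With these assumed shapes the estimate gives roughly 3.4 μs of compute against about 134 μs of memory traffic, the same order as the figures in the text. The ridge point of 156 FLOPs per byte follows directly from the quoted peak rates: a kernel that does less arithmetic per byte than this is limited by HBM bandwidth.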

This memory-centric bottleneck shapes both the design of next-generation AI accelerators and the optimization of LLM inference frameworks. Future hardware will likely prioritize larger HBM capacity, higher memory bandwidth, and more sophisticated memory management over incremental gains in compute. On the software side, each common technique attacks data movement from a different angle: quantization shrinks the bytes stored per weight, kernel fusion avoids round trips to HBM between operations, and tensor or pipeline parallelism spreads a model across several GPUs. The economic impact is also significant, because HBM and high-bandwidth interconnects remain a primary driver of GPU pricing and therefore of how accessible and scalable advanced AI deployments are.
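How quantization and tensor parallelism relieve the capacity constraint can be sketched with simple arithmetic. The example below is an illustrative estimate only: the 80 GB capacity comes from the text, while the 70B-parameter model size, the helper name `weight_footprint_gb`, and the assumption that tensor parallelism splits weights evenly are all hypothetical.

```python
HBM_CAPACITY_GB = 80  # A100 capacity quoted in the article

# Bytes per parameter for common weight formats.
BYTES_PER_PARAM = {"bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_footprint_gb(num_params, dtype, tensor_parallel=1):
    """Approximate per-GPU weight memory in GB (1 GB = 1e9 bytes).

    Counts weights only; activations, other runtime buffers and
    quantization metadata are ignored. Assumes tensor parallelism
    splits the weights evenly across GPUs.
    """
    return num_params * BYTES_PER_PARAM[dtype] / tensor_parallel / 1e9


NUM_PARAMS = 70e9  # hypothetical 70B-parameter model, not from the article

for dtype in BYTES_PER_PARAM:
    for tp in (1, 2):
        gb = weight_footprint_gb(NUM_PARAMS, dtype, tp)
        verdict = "fits" if gb <= HBM_CAPACITY_GB else "does not fit"
        print(f"{dtype:>4} tp={tp}: {gb:6.1f} GB per GPU, {verdict} in {HBM_CAPACITY_GB} GB")
```

Under these assumptions the BF16 weights alone exceed a single 80 GB device, while either halving the precision or sharding across two GPUs brings the per-GPU footprint under the limit. Real deployments need additional headroom beyond the weights.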

Transparency: This analysis was generated by an AI model (Gemini 2.5 Flash) and reviewed by human intelligence strategists for factual accuracy and compliance with ethical AI guidelines, including EU AI Act Article 50.

Visual Intelligence

flowchart LR

A["LLM Inference"] --> B["GPU HBM Memory"]

B --> C["Memory Bandwidth"]

C --> D["Compute Units"]

D --> E["Data Transfer Bottleneck"]

E --> F["NVLink Interconnect"]

E --> G["PCIe Interconnect"]

F --> H["Multi-GPU Efficiency"]

Auto-generated diagram · AI-interpreted flow

Impact Assessment

Understanding GPU memory constraints and interconnect speeds is critical for optimizing large language model deployment. The analysis reveals that HBM capacity and bandwidth, not raw compute, are often the primary bottlenecks, dictating the feasibility and efficiency of running advanced AI models.
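One way to see how bandwidth dictates efficiency is to bound decoding speed by the time needed to stream the weights from HBM. The sketch below rests on a common simplification, stated here as an assumption: when generating one token at a time for a single sequence, every weight is read once per token and that read dominates the step. The 13B-parameter model size and the function name `max_tokens_per_second` are hypothetical.

```python
HBM_BANDWIDTH = 2.0e12  # bytes/s, A100 figure quoted in the article


def max_tokens_per_second(num_params, bytes_per_param, bandwidth=HBM_BANDWIDTH):
    """Upper bound on single-sequence decode speed when weight reads dominate.

    Ignores compute time, activation traffic and kernel launch overhead,
    so real throughput will be lower.
    """
    weight_bytes = num_params * bytes_per_param
    seconds_per_token = weight_bytes / bandwidth
    return 1.0 / seconds_per_token


# Hypothetical 13B-parameter model; the size is an illustrative assumption.
bf16_rate = max_tokens_per_second(13e9, 2.0)
int8_rate = max_tokens_per_second(13e9, 1.0)

print(f"BF16 ceiling: {bf16_rate:.1f} tokens/s")
print(f"INT8 ceiling: {int8_rate:.1f} tokens/s")
```

In this simplified model the ceiling depends only on bytes per weight and memory bandwidth, so halving the weight size doubles it while additional TFLOPS change nothing.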

Key Details

- NVIDIA A100 HBM capacity is 80 GB.

- A100 memory bandwidth is 2.0 TB/s (2,000 GB/s).

- A100 BF16 compute capability is 312 TFLOPS.

- NVLink offers 600 GB/s of bidirectional bandwidth, about 19x PCIe Gen4 x16's ~32 GB/s. The PCIe figure is per direction, so a like-for-like comparison is closer to 9x.

- In the matrix multiply example, memory access takes 134 μs against 3.5 μs of compute, about 38x longer.
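The bandwidth figures in the list above translate into transfer times as follows. This is a minimal sketch using the numbers as quoted; the 1 GB payload and the helper name `transfer_ms` are assumptions, and link latency and protocol overhead are ignored.

```python
# Bandwidth figures quoted in the article, in bytes per second.
LINKS = {
    "HBM": 2000e9,
    "NVLink": 600e9,          # aggregate bidirectional figure
    "PCIe Gen4 x16": 32e9,
}


def transfer_ms(num_bytes, bandwidth):
    """Idealized time in milliseconds to move num_bytes at a given bandwidth."""
    return num_bytes / bandwidth * 1e3


PAYLOAD_BYTES = 1e9  # hypothetical 1 GB tensor, for illustration only

for name, bandwidth in LINKS.items():
    print(f"{name:>14}: {transfer_ms(PAYLOAD_BYTES, bandwidth):6.2f} ms")

nvlink_vs_pcie = LINKS["NVLink"] / LINKS["PCIe Gen4 x16"]
print(f"NVLink / PCIe ratio as quoted: {nvlink_vs_pcie:.2f}x")
```

Each step down the hierarchy costs roughly an order of magnitude or more in transfer time for the same payload, which is why keeping working data in HBM matters so much.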

Optimistic Outlook

Enhanced understanding of memory hierarchy and bottlenecks can drive innovation in GPU architecture and software optimization, leading to more efficient LLM inference. Future designs could prioritize HBM capacity and bandwidth, enabling larger models and context windows to run on fewer, more cost-effective GPUs.

Pessimistic Outlook

The persistent memory-bound nature of LLM inference poses significant challenges for scaling, potentially limiting the practical deployment of increasingly complex models. Reliance on expensive, high-bandwidth memory solutions could exacerbate hardware costs, creating a barrier to broader AI adoption and development.
