HaleES Unveils Enforcement-First Architecture for Reliable AI Agent Governance

Sonic Intelligence

HaleES introduces an enforcement-first architecture for reliable, auditable AI agent operations.

Explain Like I'm Five

"Imagine you have a super-smart robot helper. Most robot systems let the helper do whatever it thinks is best. But HaleES is like giving your robot a strict rulebook and a boss who checks every single thing it does, making sure it only does what it's allowed and exactly how it's supposed to."

Deep Intelligence Analysis

HaleES's core innovation is that it inverts the usual development order, in which control lags behind capability. It establishes that "Skills are knowledge. Authority is not automatic," meaning an agent's ability to perform a task does not by itself grant permission to perform it. Instead, authority is derived from verifiable governance signals, including identity, applicable policy, risk classification, and explicit approvals. This structured approach directly counters the "drift" and "authority leak" observed in more flexible frameworks: it provides a mechanism for proving why a decision passed and prevents unauthorized actions from being accepted.
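The separation of skill from authority can be illustrated with a minimal sketch. Nothing here is HaleES's actual API: the names `GovernanceSignals`, `Decision`, `Risk`, and `authorize`, and the rule that only high-risk actions need an explicit approval, are assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Risk(Enum):
    LOW = 1
    HIGH = 2


@dataclass(frozen=True)
class GovernanceSignals:
    """Hypothetical bundle of the signals the article lists."""
    identity_verified: bool
    policy_allows: bool
    risk: Risk
    approvals: frozenset = frozenset()


@dataclass(frozen=True)
class Decision:
    allowed: bool
    reasons: tuple  # ordered record of why the decision passed or failed


def authorize(agent_has_skill: bool, signals: GovernanceSignals) -> Decision:
    """Grant authority only from governance signals, never from skill alone."""
    if not agent_has_skill:
        return Decision(False, ("agent lacks the skill",))
    reasons = ["skill present (knowledge only, grants nothing)"]
    if not signals.identity_verified:
        return Decision(False, tuple(reasons + ["identity not verified"]))
    reasons.append("identity verified")
    if not signals.policy_allows:
        return Decision(False, tuple(reasons + ["policy does not permit action"]))
    reasons.append("policy permits action")
    # Assumption: only high-risk actions require an explicit approval.
    if signals.risk is Risk.HIGH and not signals.approvals:
        return Decision(False, tuple(reasons + ["high risk without explicit approval"]))
    reasons.append("risk acceptable or explicitly approved")
    return Decision(True, tuple(reasons))
```

Because every branch returns its accumulated reasons, a caller can show why a decision passed or failed, which is the kind of proof the architecture is described as providing.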

The implications for enterprise AI are substantial. Organizations grappling with the challenges of deploying AI agents in production, particularly concerning compliance, accountability, and quality assurance, will find HaleES's architectural principles highly relevant. By embedding governance at the architectural level, it offers a pathway to operationalize AI agents with a higher degree of confidence and reduced risk. This could unlock new applications in finance, healthcare, and other regulated industries, where the ability to audit and enforce policy is non-negotiable, potentially setting a new standard for responsible AI agent deployment.

This analysis was produced by an AI model and is compliant with EU AI Act Article 50 transparency requirements.

Visual Intelligence

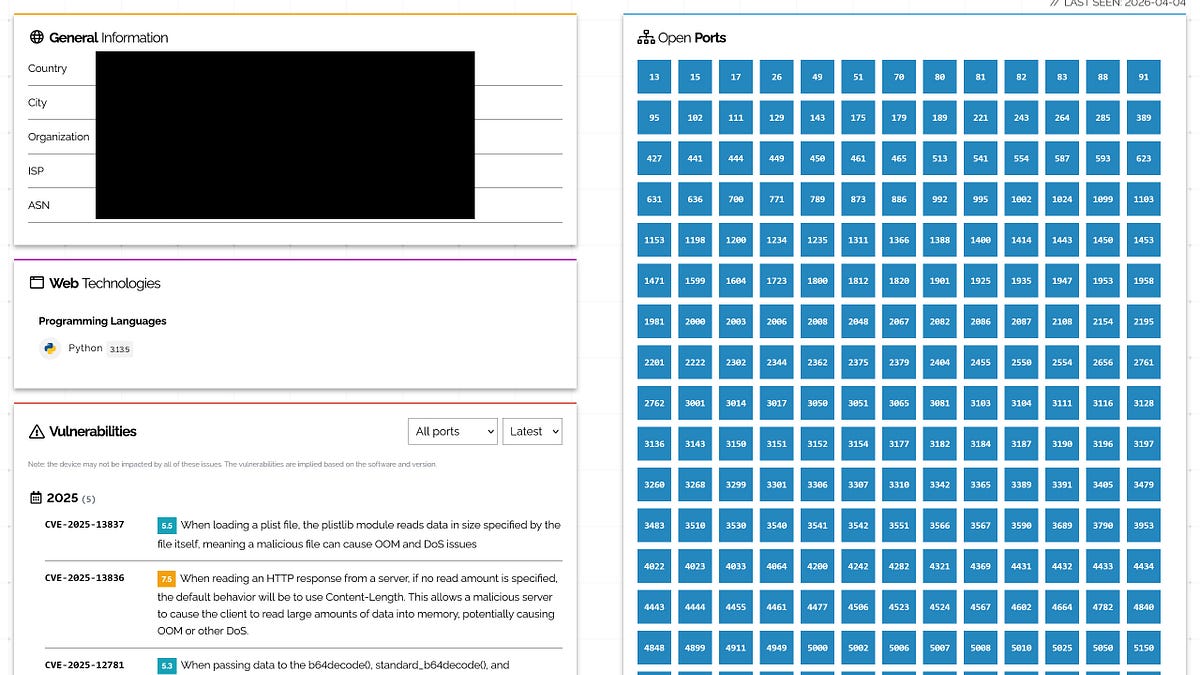

    flowchart LR
    A[Agent Request] --> B{Policy Check?};
    B -- Yes --> C[Identity Verify];
    B -- No --> D[Reject Request];
    C --> E{Risk Classify?};
    E -- Yes --> F[Approval Required];
    E -- No --> G[Execute Task];
    F --> G;
    G --> H[Audit Execution];
    H --> I[Decision Outcome];
    I --> J[Log Results];

Auto-generated diagram · AI-interpreted flow
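The stage ordering in the diagram can be approximated in code. This is a sketch under stated assumptions, not HaleES's implementation: `handle_request` and its callback parameters are hypothetical names. The diagram shows no failure branch for identity verification or for a denied approval, so the sketch assumes both reject the request.

```python
from typing import Callable


def handle_request(
    request: dict,
    policy_allows: Callable[[dict], bool],
    verify_identity: Callable[[dict], bool],
    is_high_risk: Callable[[dict], bool],
    get_approval: Callable[[dict], bool],
    execute: Callable[[dict], str],
) -> list[str]:
    """Walk a request through the diagram's stages and return the audit trail."""
    trail = ["request received"]
    if not policy_allows(request):
        trail.append("rejected: policy check failed")
        return trail
    trail.append("policy check passed")
    if not verify_identity(request):
        # Assumption: the diagram has no failure branch here; we reject.
        trail.append("rejected: identity not verified")
        return trail
    trail.append("identity verified")
    if is_high_risk(request):
        if not get_approval(request):
            # Assumption: a denied approval stops the request.
            trail.append("rejected: approval denied")
            return trail
        trail.append("approval granted")
    result = execute(request)
    trail.append(f"executed: {result}")
    trail.append("outcome logged")
    return trail
```

A usage example: calling `handle_request({"action": "refund"}, lambda r: True, lambda r: True, lambda r: True, lambda r: False, lambda r: "done")` ends the trail at `"rejected: approval denied"`, and the `execute` callback is never invoked.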

Impact Assessment

This architecture addresses a critical gap in AI agent deployment: the transition from flexible prototypes to reliable, auditable production systems. By prioritizing governance and control, HaleES aims to mitigate the risks of agent drift and unauthorized actions, both of which stand in the way of enterprise adoption and regulatory compliance.

Key Details

- HaleES is an "enforcement-first governance layer" for AI agents.

- Unlike most frameworks, it prioritizes "survival in production" over flexibility.

- The architecture focuses on enforceable authority, traceable decisions, and explicit quality gates.

- A core principle is "Skills are knowledge. Authority is not automatic."

- Authority in HaleES derives from governance signals like verified identity, policy, and risk classification.

Optimistic Outlook

HaleES could significantly accelerate the safe and compliant deployment of AI agents in sensitive production environments. Its emphasis on auditable, policy-bounded operations provides a framework for trust and accountability, potentially unlocking new enterprise use cases where reliability and governance are paramount.

Pessimistic Outlook

The enforcement-first approach, while enhancing safety, might inherently limit the adaptive and improvisational capabilities often touted as strengths of AI agents. Overly rigid governance could stifle innovation or make agents less effective in dynamic, unpredictable scenarios where flexibility is genuinely required, potentially creating a trade-off between control and utility.
