Human Oversight Is Critical for Reliable AI Systems

Sonic Intelligence

AI systems should augment human capabilities rather than replace them, and their output needs human verification to ensure accuracy and prevent 'trust debt'.

Explain Like I'm Five

"Imagine AI is like a super-smart but sometimes clumsy intern. It can help with lots of tasks, but you always need to double-check its work to make sure it doesn't make mistakes!"

Deep Intelligence Analysis

The piece stresses the importance of traceability, confidence scoring, and mandatory verification in AI systems. Traceability involves linking every data point to its raw source, while confidence scoring flags instances where the AI is uncertain. Mandatory verification requires human analysts to digitally sign off on critical insights before they are used for decision-making.
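The three mechanisms above can be sketched in a few lines of Python. This is a minimal illustration, not the author's implementation: the `Insight` class, the `CONFIDENCE_THRESHOLD` value, the helper names, and the use of an HMAC as a stand-in for a digital signature are all assumptions made for the example. A production system would more likely use asymmetric signatures and a proper identity service.

```python
import hashlib
import hmac
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical cutoff: anything below this is flagged as uncertain.
CONFIDENCE_THRESHOLD = 0.8


@dataclass
class Insight:
    claim: str
    source_ids: List[str]          # traceability: links back to raw sources
    confidence: float              # confidence score reported for the claim
    critical: bool = False         # critical insights always need sign-off
    signed_by: Optional[str] = None
    signature: Optional[str] = None

    def is_traceable(self) -> bool:
        """An insight is traceable only if it cites at least one source."""
        return len(self.source_ids) > 0

    def needs_review(self) -> bool:
        """Flag critical, low-confidence, or untraceable insights."""
        return (
            self.critical
            or self.confidence < CONFIDENCE_THRESHOLD
            or not self.is_traceable()
        )


def _digest(insight: Insight, analyst: str, key: bytes) -> str:
    # HMAC-SHA256 over analyst, claim, and sources (stand-in for a signature).
    payload = "|".join([analyst, insight.claim, *insight.source_ids])
    return hmac.new(key, payload.encode("utf-8"), hashlib.sha256).hexdigest()


def sign_off(insight: Insight, analyst: str, key: bytes) -> None:
    """Record a human analyst's approval of the insight."""
    if not insight.is_traceable():
        raise ValueError("cannot sign off an insight with no linked sources")
    insight.signed_by = analyst
    insight.signature = _digest(insight, analyst, key)


def is_usable(insight: Insight, key: bytes) -> bool:
    """Return True if the insight may feed a decision."""
    if not insight.is_traceable():
        return False
    if not insight.needs_review():
        return True
    if insight.signed_by is None or insight.signature is None:
        return False
    expected = _digest(insight, insight.signed_by, key)
    return hmac.compare_digest(expected, insight.signature)
```

In this sketch, a low-confidence insight stays blocked until an analyst calls `sign_off`, and editing the claim after sign-off invalidates the recorded signature, so the approval has to be repeated.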

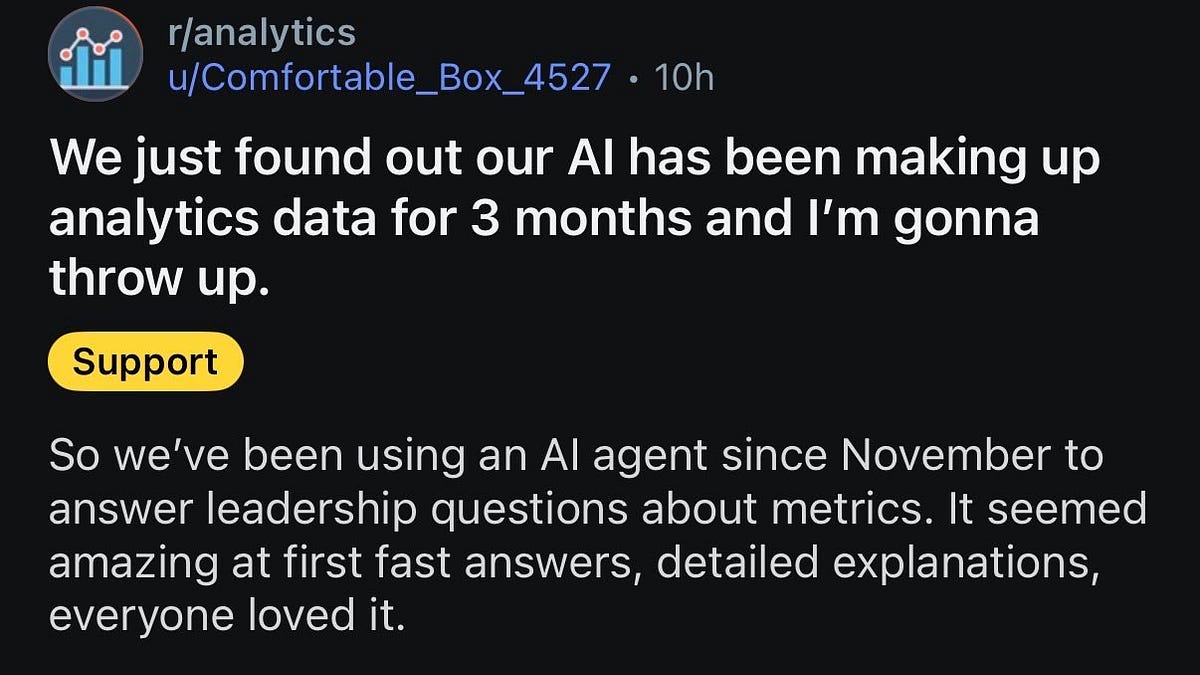

The author cautions against the trend of 'AI Theater,' where companies implement AI simply to impress shareholders without considering the potential risks. They argue that a flawed AI system can create a 'trust debt' that is difficult to overcome. The central message is that AI should be viewed as a tool to enhance human capabilities, not replace them, and that human intervention is essential for ensuring accuracy and accountability.

Impact Assessment

Blindly trusting AI output can lead to significant errors and erode trust in the system. Implementing human-in-the-loop frameworks ensures accuracy and accountability, especially in high-stakes decision-making.

Key Details

- LLMs are designed to predict the next likely token based on patterns, not to verify truth against a database.

- AI systems should provide justifications and traceability for their outputs.

- High-stakes AI requires mandatory human verification and digital signatures.

- Companies are rushing to implement AI agents just to tell shareholders they are “AI-first.”

Optimistic Outlook

By focusing on augmentation, AI can enhance human capabilities and improve decision-making processes. Traceability and verification mechanisms can build trust and ensure responsible AI deployment, leading to more reliable and beneficial outcomes.

Pessimistic Outlook

Over-reliance on unaudited AI systems can result in flawed decisions and a loss of trust. The rush to implement AI without proper safeguards may create liabilities and undermine the potential benefits of the technology.
