LLM Python Library Refactors for Multi-Modal, Conversational AI

Sonic Intelligence

LLM library updates support multi-modal inputs and conversational message sequences.

Explain Like I'm Five

"Imagine your toy robot used to only understand one word at a time. Now, it can understand a whole conversation, see pictures, and even tell you things in different ways, like drawing or showing a video. This update helps computer programs talk to smart AI robots like that, making them much easier to build."

Deep Intelligence Analysis

Historically, LLM interactions were largely text-in, text-out. Conversational interfaces, popularized by ChatGPT and since adopted by major API providers such as OpenAI, require a richer input structure. The library's previous `conversation()` method worked for starting new interactions, but it made it hard to load a pre-existing conversation history, which complicated building API emulations or persistent agentic systems. The new design addresses this by treating input as a sequence of messages, mirroring the pattern those provider APIs use, so an existing dialogue state can be passed in directly.
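To make the message-sequence idea concrete, here is a minimal, library-independent sketch. It does not use the LLM library's actual API; `Message` and `extend_history` are hypothetical names invented for illustration. It only shows why a plain list of role-tagged messages makes it easy to resume a conversation that was stored elsewhere.

```python
from dataclasses import dataclass
from typing import Literal

# Hypothetical types for illustration only; not the LLM library's API.
Role = Literal["system", "user", "assistant"]


@dataclass(frozen=True)
class Message:
    role: Role
    content: str


def extend_history(history: list[Message], user_text: str) -> list[Message]:
    """Return a new message list with the next user turn appended."""
    return [*history, Message("user", user_text)]


# A conversation that already happened elsewhere, e.g. loaded from storage.
stored = [
    Message("system", "You are a concise assistant."),
    Message("user", "What is the capital of France?"),
    Message("assistant", "Paris."),
]

# The full sequence, old turns plus the new one, is what would be sent to a model.
prompt_messages = extend_history(stored, "And of Italy?")
for message in prompt_messages:
    print(f"{message.role}: {message.content}")
```

Because the history is ordinary data, it can be saved, replayed, or edited before the next request, which is the kind of flexibility the paragraph above attributes to the new design.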

For developers, the refactor provides a single abstraction over diverse input and output types and conversational flows, which lowers the barrier to using advanced LLM features. It makes the LLM library a more versatile tool for building AI agents, multi-modal interfaces, and applications that depend on context-aware interactions.

Impact Assessment

This refactor addresses the evolving capabilities of large language models, moving beyond simple text-in/text-out to support complex conversational flows, multi-modal data, and structured outputs. It enables developers to more easily integrate advanced LLM features into their applications, aligning with current industry trends.

Key Details

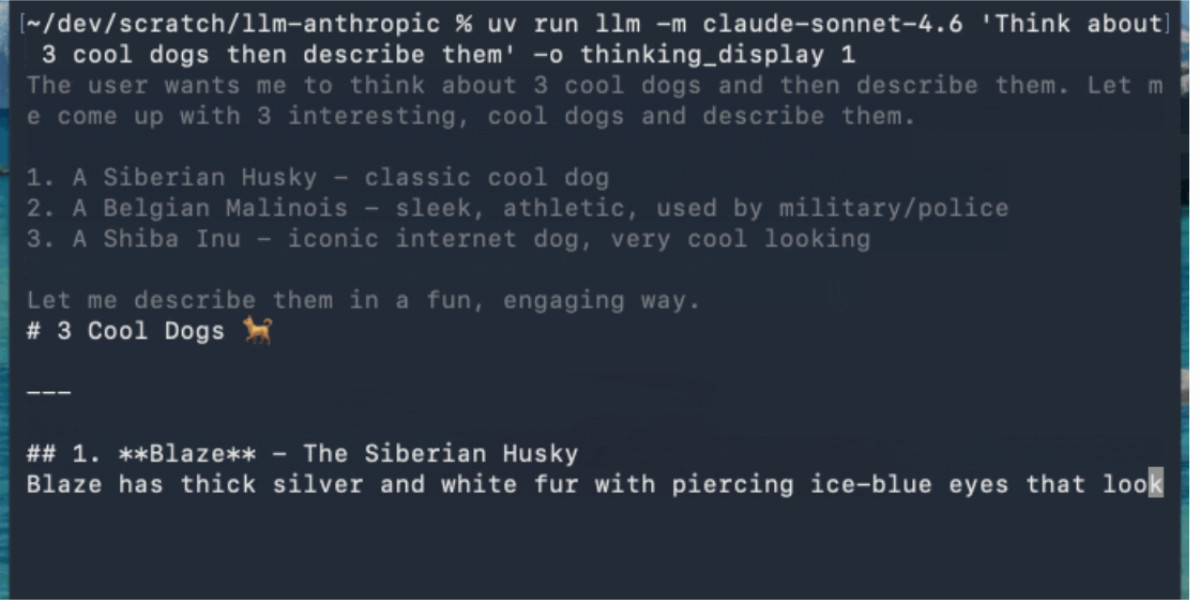

- LLM 0.32a0 is an alpha release of a Python library and CLI tool.

- Released on April 29, 2026.

- Key changes include model inputs as message sequences and responses as streams of differently typed parts.

- The new abstraction supports image, audio, and video input, structured JSON output, and tool calls.

- Aims to better handle diverse input/output types of frontier models.
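The "stream of differently typed parts" idea from the list above can be sketched as follows. This is an assumption-laden illustration rather than the library's real interface: `TextPart`, `ToolCallPart`, `JsonPart`, `fake_response_stream`, and `collect` are all hypothetical names, and the stream is hard-coded instead of coming from a model.

```python
from dataclasses import dataclass
from typing import Iterable, Iterator, Union


# Hypothetical part types for illustration only; not the LLM library's API.
@dataclass
class TextPart:
    text: str


@dataclass
class ToolCallPart:
    name: str
    arguments: dict


@dataclass
class JsonPart:
    data: dict


Part = Union[TextPart, ToolCallPart, JsonPart]


def fake_response_stream() -> Iterator[Part]:
    """Stand-in for a model response that yields parts as they arrive."""
    yield TextPart("Checking the weather")
    yield TextPart(" for you.")
    yield ToolCallPart("get_weather", {"city": "Paris"})
    yield JsonPart({"status": "ok"})


def collect(parts: Iterable[Part]) -> tuple[str, list[ToolCallPart]]:
    """Join text chunks and gather tool calls; other part types are skipped."""
    text_chunks: list[str] = []
    tool_calls: list[ToolCallPart] = []
    for part in parts:
        if isinstance(part, TextPart):
            text_chunks.append(part.text)
        elif isinstance(part, ToolCallPart):
            tool_calls.append(part)
    return "".join(text_chunks), tool_calls


text, calls = collect(fake_response_stream())
print(text)
print([call.name for call in calls])
```

Dispatching on the part type lets a consumer handle text, tool calls, and structured output from one stream without guessing what a given chunk contains.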

Optimistic Outlook

The updated LLM library will streamline the development of sophisticated AI applications by providing a robust, flexible framework for interacting with advanced LLMs. This could accelerate innovation in conversational AI, agentic systems, and multi-modal interfaces, fostering a new generation of intelligent software.

Pessimistic Outlook

As an alpha release, stability and full compatibility with all existing plugins might be a concern for early adopters. Developers adopting it may face integration challenges or breaking changes in future stable releases, potentially slowing down project timelines and increasing development overhead.
