Meta Abandons Open-Source AI Strategy, Deprecates Llama

Sonic Intelligence

Meta pivots from open-source AI, deprecating Llama and making Muse Spark proprietary.

Explain Like I'm Five

"Meta, a big tech company, used to let everyone use their smart computer programs for free, but now they've stopped because it costs too much and they want to make money from their own special programs."

Deep Intelligence Analysis

Meta's open-source strategy mirrored Google's Android playbook in its aim to keep any competitor from owning the platform layer, but the two diverged critically on economics. Google's marginal cost of distributing Android was near zero. Meta's frontier model training costs, by contrast, have climbed from approximately $10 million per run in 2023 to potentially $100 million or more in 2026, and its overall AI infrastructure spending is projected at $115–135 billion. This steep cost growth, combined with Llama 4's reported failure to 'captivate developers,' turned a strategic subsidy into a self-defeating expense, especially as competitors, including Chinese AI labs, were building on Meta's open weights.

This pivot has far-reaching implications for the open-source AI community and the broader market. It signals that the real moat for AI companies lies less in model weights than in proprietary user data graphs and integrated commerce experiences, as exemplified by Muse Spark's 'Shopping Mode' across Meta's 3.3 billion users. Startups that built on Llama now depend on deprecated infrastructure and must quickly re-evaluate their technology stacks. The shift suggests that frontier AI development may increasingly consolidate within a few well-resourced, closed ecosystems, challenging both the long-term viability and the democratic ideals of truly open-source advanced AI.

Visual Intelligence

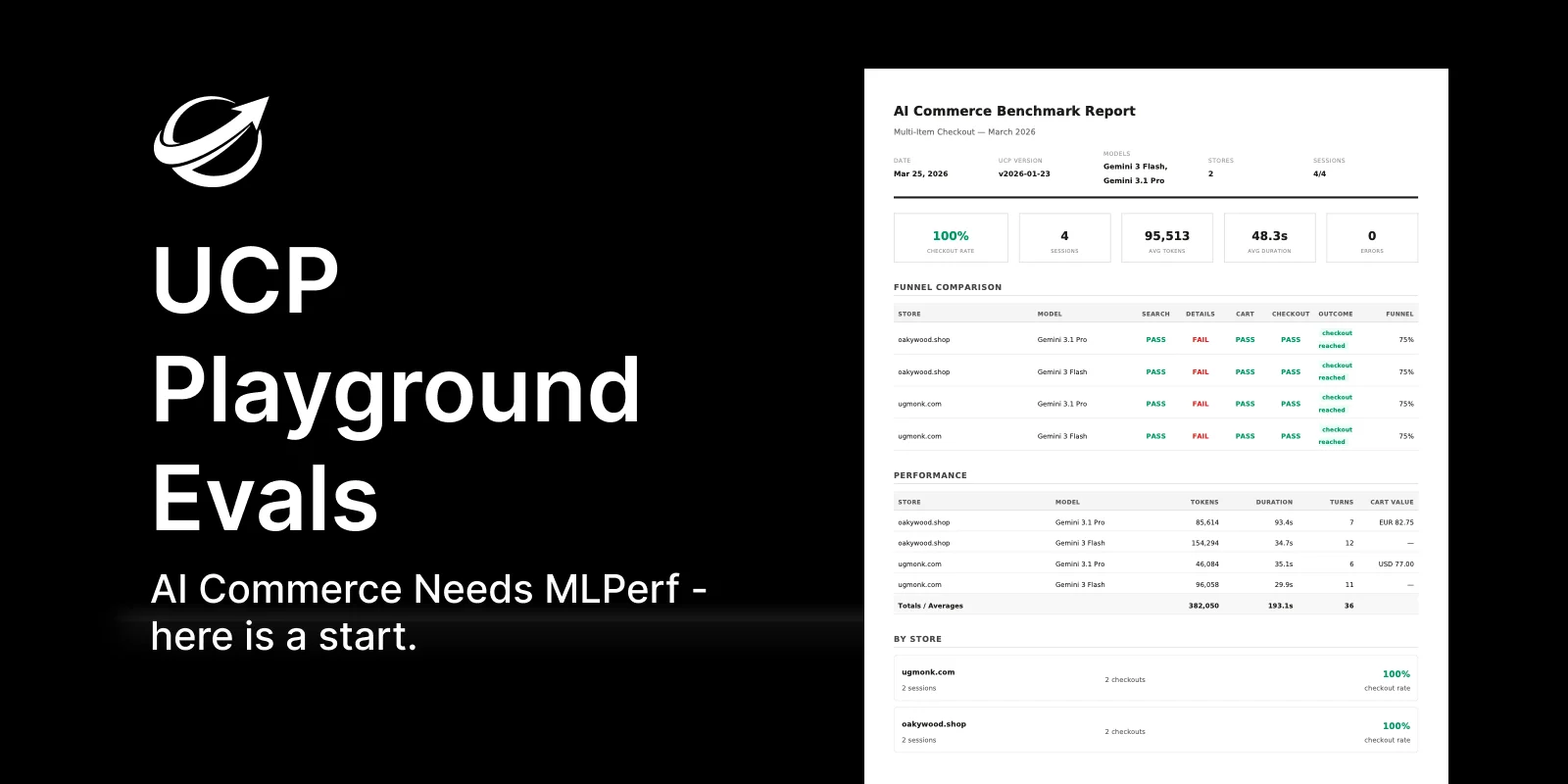

flowchart LR A["Meta Open-Source Llama"] --> B["1 Billion Downloads"]; B --> C["Competitors Use Llama"]; C --> D["High Training Costs"]; D --> E["Llama 4 Fails"]; E --> F["Pivot to Proprietary"]; F --> G["Muse Spark"]; G --> H["Closed Data Moat"];

Auto-generated diagram · AI-interpreted flow

Impact Assessment

This is a significant strategic reversal by a major AI player. It reshapes the open-source AI landscape and affects thousands of startups that rely on Meta's models. It also signals a broader industry shift toward proprietary, data-moated AI platforms, driven by escalating costs and the pursuit of competitive advantage.

Key Details

- Meta has announced that Muse Spark is now proprietary and Llama is deprecated.

- Llama models accumulated 1 billion downloads, becoming the default AI infrastructure for thousands of startups.

- Mark Zuckerberg previously advocated for open source as 'the path forward' in October 2024.

- Training a frontier model cost approximately $10 million in 2023 and now costs 50-100 times as much.

- Meta is projected to spend $115–135 billion on AI infrastructure in 2026.

- CNBC reported that Llama 4 'failed to captivate developers,' indicating a lack of market traction.

- Muse Spark's key feature is 'Shopping Mode,' integrated across Facebook, Instagram, and WhatsApp, which together reach 3.3 billion users.

Optimistic Outlook

Meta's pivot could spur the open-source community to build truly independent, decentralized AI initiatives, fostering innovation beyond the influence of tech giants. It might also prompt other major players to reassess, and potentially strengthen, their open-source contributions to fill the void Meta leaves behind.

Pessimistic Outlook

The abandonment of Llama could stifle innovation for startups that built their businesses on open models, consolidating power among a few proprietary AI providers. This move may lead to a less diverse and more controlled AI ecosystem, potentially slowing the overall progress of democratized AI development.
