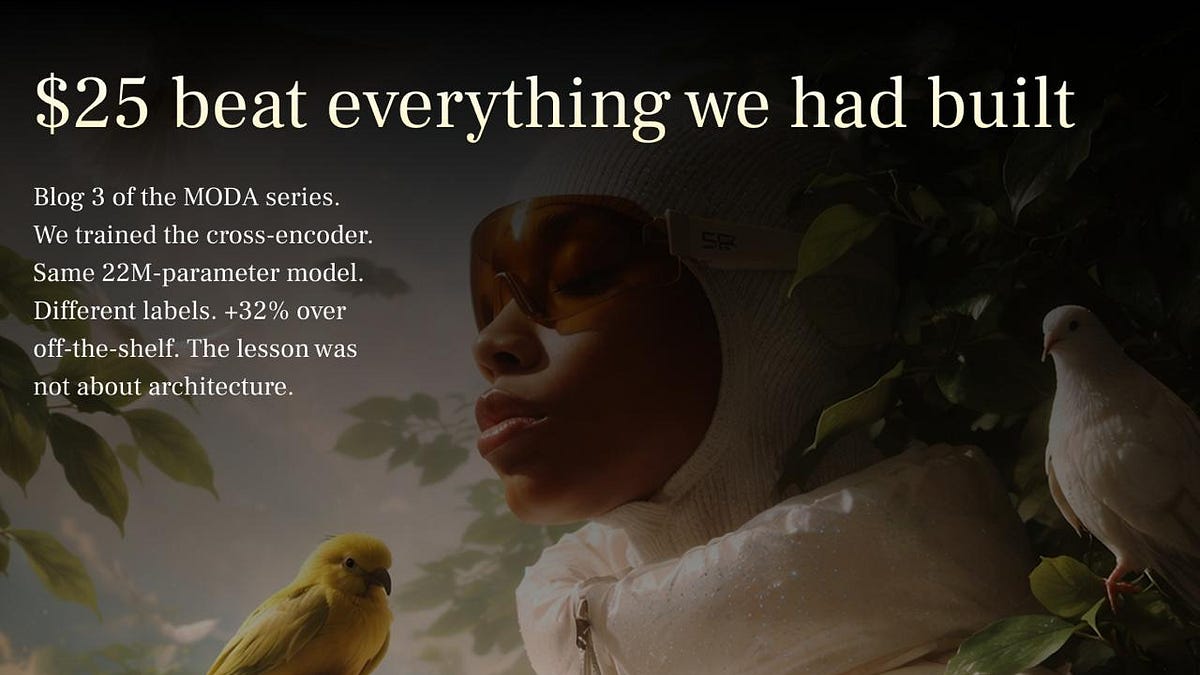

LLM-Graded Labels Outperform 1.5 Million Purchase Labels for Fashion Search Relevance

Sonic Intelligence

LLM-graded labels costing $25 improved fashion search relevance more than 1.5M purchase labels did.

Explain Like I'm Five

"Imagine you want to teach a computer to find the best clothes for people. Instead of showing it millions of times what people *bought* (which might not be what they *wanted*), you ask a super-smart AI to carefully pick out the *perfect* clothes for just a few examples. It turns out those few perfect examples teach the computer much better than all the messy purchase data!"

Deep Intelligence Analysis

The technical backbone of the pipeline is a cross-encoder, specifically a 22-million-parameter MiniLM-L-6-v2 model, which accounted for a 51% gain in the overall search pipeline. Unlike bi-encoders, which embed queries and documents separately, a cross-encoder processes each query-document pair jointly, which allows more accurate relevance scoring. The article stresses that the architecture mattered less than the quality of the training data. For developers, this suggests that improving how training labels are generated, particularly through LLM-assisted grading, can yield higher returns than scaling the model or overhauling the architecture, especially in domain-specific applications.
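The retrieve-then-rerank split described above can be sketched in a few lines of Python. This is a minimal offline illustration, not the article's implementation: `embed` and `joint_score` are hypothetical stand-ins (bag-of-words cosine similarity and an ordered-term heuristic) for a neural bi-encoder and the MiniLM cross-encoder, and the sample products are invented.

```python
import math
from collections import Counter

def embed(text):
    """Stand-in bi-encoder: a bag-of-words vector built from one text alone."""
    return Counter(text.lower().split())

def cosine(a, b):
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query, docs, k):
    """Bi-encoder stage: query and documents are embedded separately, then compared."""
    q_vec = embed(query)
    doc_vecs = [embed(d) for d in docs]  # in practice these would be precomputed
    ranked = sorted(range(len(docs)), key=lambda i: cosine(q_vec, doc_vecs[i]), reverse=True)
    return ranked[:k]

def joint_score(query, doc):
    """Stand-in cross-encoder: scores the (query, document) pair together.

    It rewards query terms that appear adjacent and in order in the document,
    which separate bag-of-words embeddings cannot express.
    """
    q, d = query.lower().split(), doc.lower().split()
    overlap = sum(1 for t in q if t in d)
    d_bigrams = set(zip(d, d[1:]))
    ordered = sum(1 for pair in zip(q, q[1:]) if pair in d_bigrams)
    return overlap + 2 * ordered

def rerank(query, docs, candidate_ids):
    """Cross-encoder stage: rescore only the retrieved candidates."""
    return sorted(candidate_ids, key=lambda i: joint_score(query, docs[i]), reverse=True)

def search(query, docs, k=3):
    return rerank(query, docs, retrieve(query, docs, k))

# Invented example catalogue (index in the comment).
DOCS = [
    "dress red with shoes",         # 0
    "red dress with floral print",  # 1
    "blue denim jacket",            # 2
    "red leather shoes",            # 3
]

if __name__ == "__main__":
    print("retrieved:", retrieve("red dress", DOCS, 3))
    print("reranked: ", search("red dress", DOCS, 3))
```

The design point is cost: the separate embeddings make the first stage cheap enough to run over the whole catalogue, while the joint scorer, which is more expensive per pair, runs only on the top-k candidates.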

The implications for data engineering and model training are significant. The approach offers a low-cost route to strong models in niche domains where large, explicitly labeled datasets are scarce or expensive to acquire. It positions LLMs not only as inference engines but also as data curation tools that broaden access to high-quality training data. Applications beyond fashion, such as general e-commerce search, content recommendation, and specialized information retrieval, could apply the same method to improve their search and recommendation systems.
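As a rough illustration of LLM-assisted grading as a data curation step, the sketch below turns (query, product) pairs into normalized training labels. Everything in it is an assumption for illustration: the 0 to 3 grade scale, the prompt wording, the helper names, and `stub_llm`, which is a hypothetical stand-in for a real LLM call so the example runs offline. The source does not describe the actual prompt or scale used.

```python
import re

# Assumed grading scale; the source does not specify one.
GRADE_SCALE = {0: "irrelevant", 1: "partially relevant", 2: "relevant", 3: "perfect match"}

def build_prompt(query, product):
    """Assemble a grading prompt for one (query, product) pair."""
    scale = "\n".join(f"{g}: {desc}" for g, desc in GRADE_SCALE.items())
    return (
        "You are grading fashion search results.\n"
        f"Query: {query}\n"
        f"Product: {product}\n"
        f"Rate relevance on this scale:\n{scale}\n"
        "Answer with a single digit."
    )

def parse_grade(reply):
    """Return the first standalone digit 0-3 in the reply, or None if there is none."""
    match = re.search(r"\b[0-3]\b", reply)
    return int(match.group()) if match else None

def grade_pairs(pairs, llm):
    """Grade each pair with `llm` (any callable prompt -> reply) and build training rows."""
    examples = []
    for query, product in pairs:
        grade = parse_grade(llm(build_prompt(query, product)))
        if grade is None:
            continue  # drop unparseable replies instead of guessing a label
        examples.append({
            "query": query,
            "product": product,
            "label": grade / max(GRADE_SCALE),  # normalize to [0, 1]
        })
    return examples

def stub_llm(prompt):
    """Hypothetical stand-in for a real LLM API call."""
    if "Product: red midi dress" in prompt:
        return "Grade: 3"
    if "Product: blue jeans" in prompt:
        return "0 - wrong category"
    return "unsure"

if __name__ == "__main__":
    pairs = [("red dress", "red midi dress"), ("red dress", "blue jeans"), ("red dress", "mystery box")]
    for row in grade_pairs(pairs, stub_llm):
        print(row)
```

Rows in this shape (query, product, score in [0, 1]) are a common input format for fine-tuning a cross-encoder reranker. Skipping replies that cannot be parsed keeps malformed grades out of the training set.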

This analysis was produced by an AI model and is compliant with EU AI Act Article 50 transparency requirements.

Visual Intelligence

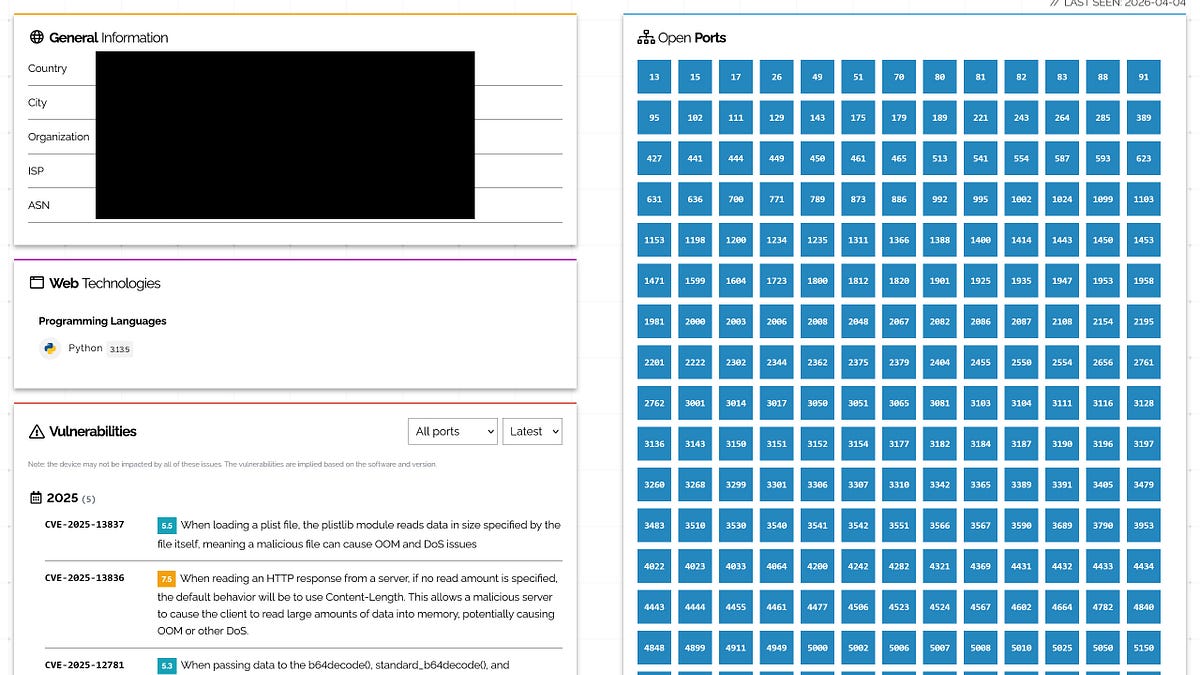

    flowchart LR
        A[Query Input] --> B[Bi-Encoder Retrieve Top-K];
        B --> C[Cross-Encoder Rerank Candidates];
        C --> D[LLM-Graded Labels];
        D --> C;
        C --> E[Improved Search Results];

Auto-generated diagram · AI-interpreted flow

Impact Assessment

The result shows that a small set of high-quality, LLM-generated labels can be more effective than a large volume of implicitly derived data such as purchase labels. It offers a cost-effective strategy for improving search relevance, particularly in niche domains, by using an LLM for data curation.

Key Details

- A $25 investment in LLM-graded labels improved fashion search relevance.

- This small dataset outperformed 1.5 million purchase labels.

- The cross-encoder model (22M-parameter MiniLM-L-6-v2) was responsible for a 51% gain in the search pipeline.

- The resulting model improved on an off-the-shelf model by 32%.

- The key lesson is the superior impact of data quality over quantity for this task.

Optimistic Outlook

The findings suggest a highly efficient and scalable method for dataset creation, reducing the need for massive, expensive human labeling efforts or reliance on noisy implicit data. This could democratize access to high-quality training data, enabling smaller teams to build performant models for specialized applications with minimal budget.

Pessimistic Outlook

Over-reliance on LLM-generated labels, while efficient, could introduce subtle biases or limitations inherent to the LLM's training data, potentially leading to models that perform well on specific metrics but lack robustness in real-world edge cases. The "black box" nature of LLM grading might also complicate debugging or understanding model failures.
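One way to probe for the bias risk described above is to spot-check LLM grades against a small human-labeled sample. The sketch below computes raw agreement and Cohen's kappa, which corrects for agreement expected by chance. It is an illustrative audit under assumed inputs, not something the source reports doing; the function name and the sample grades are invented.

```python
from collections import Counter

def agreement(llm_grades, human_grades):
    """Return (observed agreement, Cohen's kappa) for two equally long grade lists."""
    if len(llm_grades) != len(human_grades) or not llm_grades:
        raise ValueError("need two equally sized, non-empty grade lists")
    n = len(llm_grades)
    # Fraction of items where both graders chose the same grade.
    observed = sum(a == b for a, b in zip(llm_grades, human_grades)) / n
    # Agreement expected by chance, from each grader's grade frequencies.
    llm_freq, human_freq = Counter(llm_grades), Counter(human_grades)
    expected = sum(llm_freq[g] * human_freq[g] for g in llm_freq) / (n * n)
    kappa = (observed - expected) / (1 - expected) if expected < 1 else 1.0
    return observed, kappa

if __name__ == "__main__":
    llm = [3, 3, 0, 1, 2, 0]    # invented LLM grades
    human = [3, 2, 0, 1, 2, 1]  # invented human spot-check grades
    observed, kappa = agreement(llm, human)
    print(f"observed agreement: {observed:.2f}, kappa: {kappa:.2f}")
```

A low kappa on such a sample would suggest the LLM grader disagrees with human judgment more than chance alone explains, which is a signal to inspect the grading prompt before training on the labels.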
