University of Memphis Secures $4M Grant to Redefine AI-Era Student Assessment

Sonic Intelligence

UofM leads a $4M project to develop student assessments that hold up when AI tools are in use.

Explain Like I'm Five

"Imagine you have a super-smart robot that can do your homework. Now, teachers want to give you puzzles where you have to show *how* you figured it out, not just the answer. This way, they know you're really learning, even if the robot helps you think."

Deep Intelligence Analysis

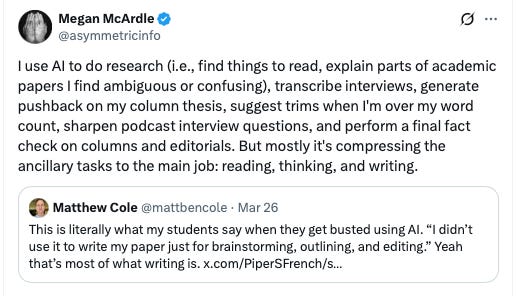

Professor John Sabatini, leading this multidisciplinary effort, emphasizes that simply restricting access to technology is not a viable long-term solution, given AI's increasing integration into professional environments. Instead, the project aims to cultivate 'awareness, and ethical, and responsible, and effective use of large language models' within assessments. The core innovation lies in developing new scenario-based tests designed to compel students to demonstrate their thinking processes, rather than merely providing final answers.

These novel assessments will move beyond traditional multiple-choice questions or essay formats, which are susceptible to AI-generated responses. The research, conducted in partnership with Georgia State University and the Educational Testing Service Research Institute, will involve designing and testing 12 such assessments across three foundational higher education courses: Psychology 101, English Composition 101, and Interdisciplinary Studies. These tests are envisioned to incorporate diverse elements, including text, images, video, and oral responses, to create a more holistic and authentic evaluation experience.

Sabatini illustrates this approach with an example from psychology, where students might tackle a real-world problem like managing a child's sleep habits, applying concepts of positive and negative reinforcement. The emphasis is on 'showing your work' – articulating the reasoning and steps taken to arrive at a solution, much like in a math problem. This methodology aims to reveal whether students genuinely comprehend and can apply learned concepts, rather than just recalling information or generating text with AI assistance.

The project builds upon prior research and seeks to transform how educators gauge understanding, ensuring that students develop critical thinking skills essential for an AI-augmented future. By focusing on process over product, these new assessments aspire to prepare students not just for academic success, but for effective collaboration with AI in their professional lives, fostering a generation that thinks *with* AI, rather than relying on it as a substitute for thought.

*This analysis was generated by an AI model (Gemini 2.5 Flash) and is compliant with EU AI Act Article 50 transparency requirements.*

Impact Assessment

As AI tools become ubiquitous, traditional assessment methods are increasingly vulnerable to misuse, potentially undermining educational integrity. This initiative offers a proactive solution by shifting focus from rote answers to demonstrating critical thinking and problem-solving processes, better preparing students for an AI-integrated professional landscape.

Key Details

- University of Memphis received a $4 million grant from the U.S. Department of Education.

- Project aims to develop new scenario-based assessments for the AI age.

- Tests will require students to demonstrate their thinking process, not just answers.

- Assessments will cover Psychology 101, English Composition 101, and Interdisciplinary Studies.

- A July 2025 poll indicated 85% of students use AI for coursework.

Optimistic Outlook

This project promises to revolutionize student evaluation, fostering deeper learning and critical thinking by requiring students to 'show their work' in complex scenarios. It will equip students with the skills to ethically and effectively partner with AI, rather than using it as a crutch, thereby enhancing their readiness for future careers where AI collaboration is standard.

Pessimistic Outlook

Developing and scaling these complex scenario-based assessments presents significant logistical and pedagogical challenges. Ensuring consistency, fairness, and broad applicability across diverse academic disciplines will be difficult. Unless the assessments are meticulously designed and continuously updated, the result could be uneven implementation or new forms of academic dishonesty.
