Vix AI Coding Agent Claims 50% Cost Reduction Over Claude Code

Sonic Intelligence

The Gist

Vix, an AI coding agent, reports completing seven coding tasks at roughly 47% lower cost and in about 40% less time than Claude Code.

Explain Like I'm Five

"Imagine you have a super smart robot helper for coding. This new robot, Vix, does the same coding jobs as another robot, Claude Code, but it's much faster and costs about half the money! It's like getting the same good work done in less time and for less pocket money."

Deep Intelligence Analysis

Vix's reported performance is based on an evaluation across seven distinct coding tasks, in which it completed the total workload in 38 minutes and 30 seconds at a cost of $6.64, compared with Claude Code's 64 minutes and 6 seconds at $12.44. This efficiency is attributed primarily to token optimization, particularly aggressive use of caching. The developers acknowledge that their evaluation is "by no means a scientific approach," so the figures are best read as indicative rather than conclusive, although savings across diverse tasks suggest the token-reduction approach is sound. The agent currently runs only on macOS and Linux, which indicates an initial focus on specific developer environments, but its core efficiency principles are broadly applicable.
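The headline percentages can be checked directly from the reported totals. The following is a minimal sketch of that arithmetic, using only the cost and time figures quoted above; the function and variable names are illustrative and do not come from the Vix project.

```python
# Totals as reported in the article; all names here are illustrative.

def to_seconds(minutes: int, seconds: int) -> int:
    """Convert a minutes/seconds duration to total seconds."""
    return minutes * 60 + seconds


def percent_reduction(baseline: float, new: float) -> float:
    """Percentage by which `new` is lower than `baseline`."""
    return (baseline - new) / baseline * 100


vix_cost, claude_cost = 6.64, 12.44   # US dollars, total for 7 tasks
vix_time = to_seconds(38, 30)         # 2310 seconds
claude_time = to_seconds(64, 6)       # 3846 seconds

cost_reduction = percent_reduction(claude_cost, vix_cost)
time_reduction = percent_reduction(claude_time, vix_time)

print(f"Cost reduction: {cost_reduction:.1f}%")  # Cost reduction: 46.6%
print(f"Time reduction: {time_reduction:.1f}%")  # Time reduction: 39.9%
```

On these totals the cost saving is a little under half, and the time saving is about two fifths.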

The implications of such cost-effective AI agents are far-reaching. They could democratize access to advanced coding assistance, making sophisticated tools viable for individual developers, startups, and smaller teams with constrained budgets. This competitive pressure will likely force larger, more general-purpose LLM providers to either reduce their inference costs or demonstrate superior qualitative advantages that justify their premium pricing. Furthermore, the focus on token optimization and caching highlights a critical area of innovation in AI agent design, suggesting that future advancements will increasingly prioritize efficiency alongside capability. This trend is set to accelerate the integration of AI agents as indispensable components of the modern software development lifecycle.

Impact Assessment

The reported cost and time efficiencies of Vix could significantly lower the barrier to entry for developers and organizations adopting AI coding assistants. This could drive productivity gains and make advanced AI agent capabilities more accessible for everyday development workflows.

Key Details

- Vix agent achieved a total cost of $6.64 across 7 coding tasks, compared to Claude Code's $12.44.

- Total task completion time for Vix was 38 minutes 30 seconds, versus 64 minutes 6 seconds for Claude Code.

- Evaluated across scenarios including writing new specs, adding features, and fixing bugs.

- Achieves efficiency by leveraging caching and optimizing token usage.

- Currently supports macOS and Linux operating systems.

Optimistic Outlook

Vix's performance suggests a future where AI coding agents are not only powerful but also economically viable for widespread adoption. This could accelerate software development cycles, free up developer time for more complex problem-solving, and foster innovation by making advanced tooling more accessible to smaller teams and individual contributors.

Pessimistic Outlook

The developers' own disclaimer that the evaluation is "by no means a scientific approach" raises questions about the generalizability and robustness of the reported savings. While promising, the current platform limitations (macOS/Linux) restrict its immediate broader impact. There is also an inherent difficulty in assessing the quality of LLM output, so perceived quality might differ even when the quantitative results look similar.


Generated Related Signals

Spacebot Unveils Agentic AI Platform with Concurrent Execution and Adaptive Context Management

Spacebot introduces an agentic AI system for concurrent task execution and dynamic context handling.

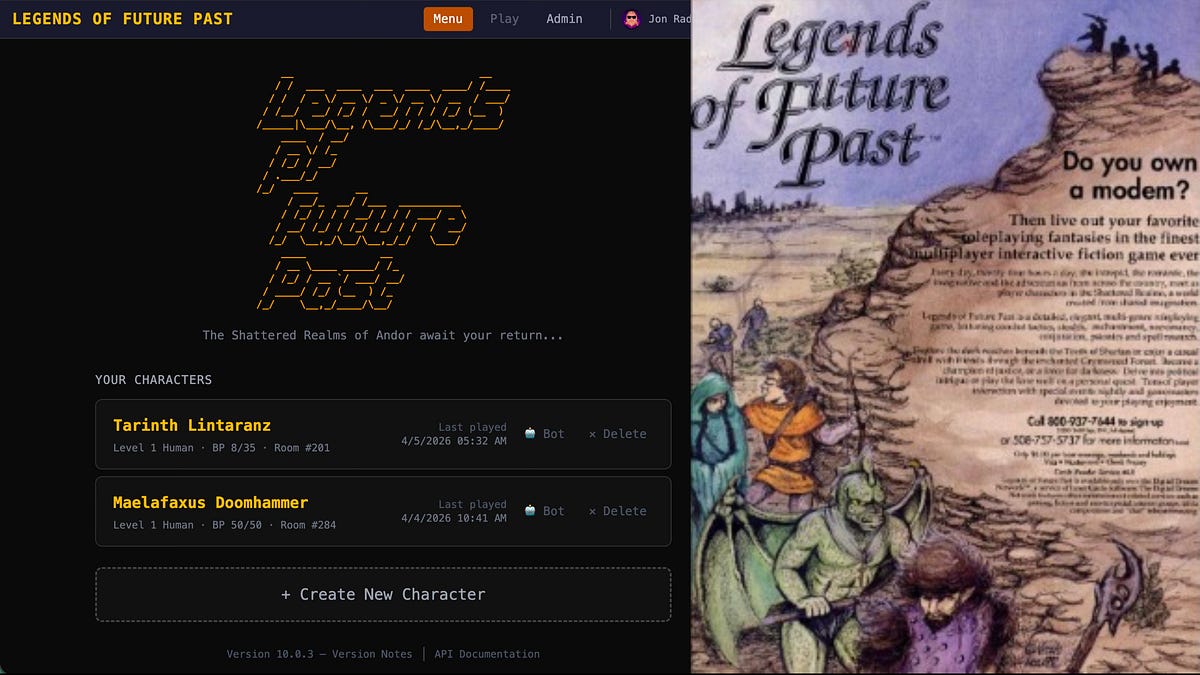

AI Agents Resurrect 1992 MUD Without Source Code

Agentic AI successfully rebuilt a 1992 multiplayer online game from artifacts.

Procurement.txt: An Open Standard for AI Agent Business Transactions

A new open standard simplifies AI agent transactions, boosting efficiency and reducing costs.

Arcee Launches Trinity LLM, Challenges Western Reliance on Chinese AI

Arcee's new Trinity LLM aims to provide a Western open-source alternative to Chinese models.

Anthropic's Claude Mythos Preview Autonomously Finds Thousands of High-Severity Vulnerabilities in Major OS and Browsers

Anthropic's new AI model, Claude Mythos Preview, autonomously identified thousands of high-severity vulnerabilities acro...

US Leads AI Brains, China Dominates AI Bodies in Global Tech Race

The US leads in AI 'brains' (LLMs, chips), while China excels in AI 'bodies' (robotics).