Workplace AI Use Raises Professional Integrity Concerns

Sonic Intelligence

The Gist

Colleagues suspected of using LLMs for work responses, sparking ethical debate.

Explain Like I'm Five

"Imagine you do your homework very carefully, but your friend just uses a magic talking robot to write their answers based on your ideas. It feels unfair because they didn't do the real thinking, and their answers aren't truly theirs."

Deep Intelligence Analysis

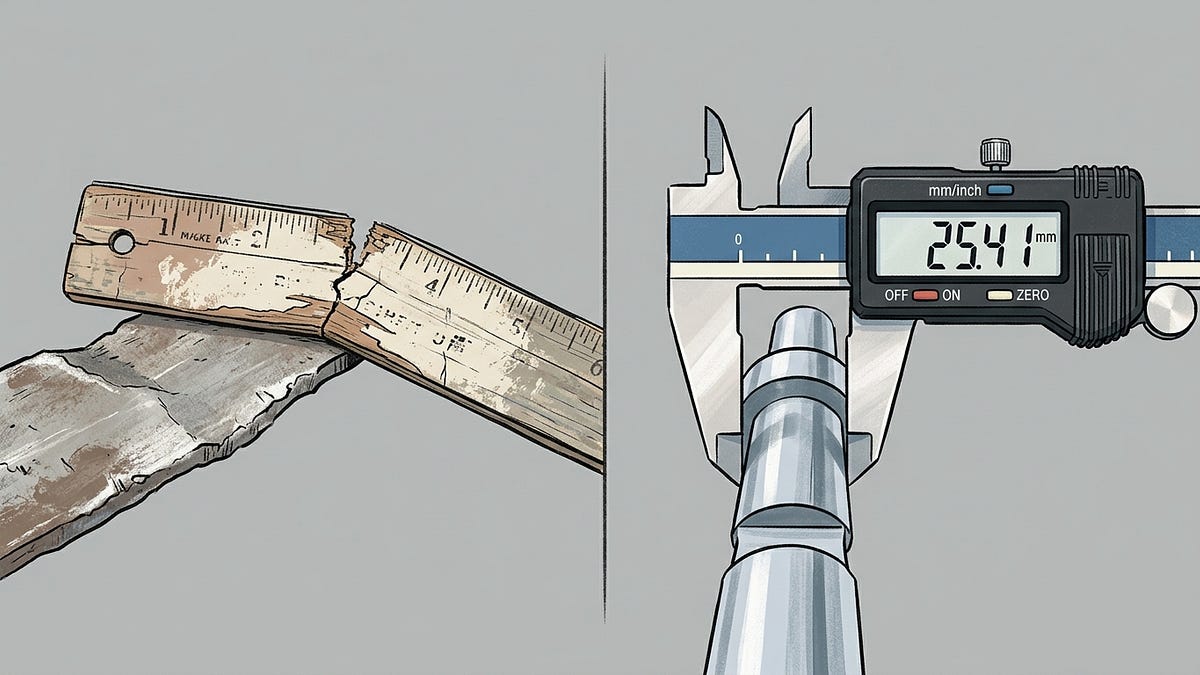

The specific observations, a manager's LLM-generated 'spike' comments that mirrored the user's own input and uncharacteristically styled feedback on a Merge Request, underscore the subtle yet pervasive nature of this issue. The concern is not merely about efficiency but about the authenticity of engagement. When colleagues rely on AI to synthesize and respond to complex ideas without genuine critical thought, the quality of discourse diminishes and the development of individual expertise stagnates. Left unaddressed, this practice risks creating a professional environment where superficiality triumphs over substantive contribution, ultimately harming innovation and team cohesion.

Addressing this emerging challenge requires more than just technical solutions; it necessitates a cultural shift and clear organizational policies. Companies must establish transparent guidelines for AI tool usage, emphasizing that AI should augment, not replace, human intellect and responsibility. The focus must shift towards leveraging AI to enhance productivity while preserving the core values of critical thinking, originality, and professional honesty. Failure to do so could lead to a future where intellectual contributions are viewed with skepticism, and the very essence of collaborative work is compromised.

Impact Assessment

The undisclosed use of AI tools to generate professional output poses a significant threat to workplace integrity, genuine collaboration, and the perceived value of human expertise. This scenario highlights an emerging ethical dilemma that organizations must address to maintain trust and foster meaningful contributions.

Key Details

- A company of approximately 100 people recently issued Cursor licenses.
- A manager's 'spike' comments were clearly LLM-generated, addressing only specific points raised by a colleague.
- Feedback on a Merge Request (MR) also exhibited an uncharacteristic, AI-like writing style.
- The user perceives this practice as lazy, dishonest, and devaluing professional contributions.
- The LLM-generated responses were described as 'terribly manufactured, worthless answers'.

Optimistic Outlook

This incident could serve as a catalyst for organizations to develop clear, transparent policies regarding AI tool usage in professional settings. By establishing guidelines, companies can ensure AI augments human capabilities rather than replaces critical thinking, fostering a culture where AI tools are used responsibly and ethically.

Pessimistic Outlook

The widespread, unmonitored adoption of AI for generating work output risks eroding fundamental professional skills and fostering intellectual dishonesty. This could lead to a 'race to the bottom' in terms of genuine contribution, where human effort is devalued and critical engagement is replaced by superficial, AI-generated responses.

