Results for: "security"

Keyword Search: 9 results

Membrane: Revisable Memory for Long-Lived AI Agents

THE GIST: Membrane offers a revisable memory substrate for AI agents, enabling learning and self-improvement over time.

AI Agent Gains Persistent Memory, Bridging Gap Between Tool and Teammate

THE GIST: AI agents now have persistent memory, enabling them to retain user preferences and learn from past experiences.

Military AI Adoption Surpasses Global Cooperation Efforts

THE GIST: Military AI adoption is accelerating globally, while international cooperation on responsible use is lagging, particularly with reduced US and China engagement.

Mitigating AI Agent Attack Surfaces with Process-Scoped Credentials

THE GIST: AI agents inherit shell environment permissions, creating security risks like data theft and remote code execution via prompt injection.

Cisco Open Sources AI Bill of Materials Tool

THE GIST: Cisco releases an open-source tool to scan codebases and container images, creating an AI Bill of Materials (AI BOM).

Glean CEO on the Future of Enterprise AI Ownership

THE GIST: Glean's CEO discusses the shift in enterprise AI towards systems that perform tasks, not just answer questions, and the evolving AI architecture landscape.

The Security Risks of AI Assistants Like OpenClaw

THE GIST: AI assistants such as the viral OpenClaw pose significant security risks because they can access sensitive user data and may contain exploitable vulnerabilities.

OpenClaw AI Agent: A Glimpse into the Future, Fraught with Risk

THE GIST: OpenClaw, a new AI agent, automates tasks but raises concerns about security and control.

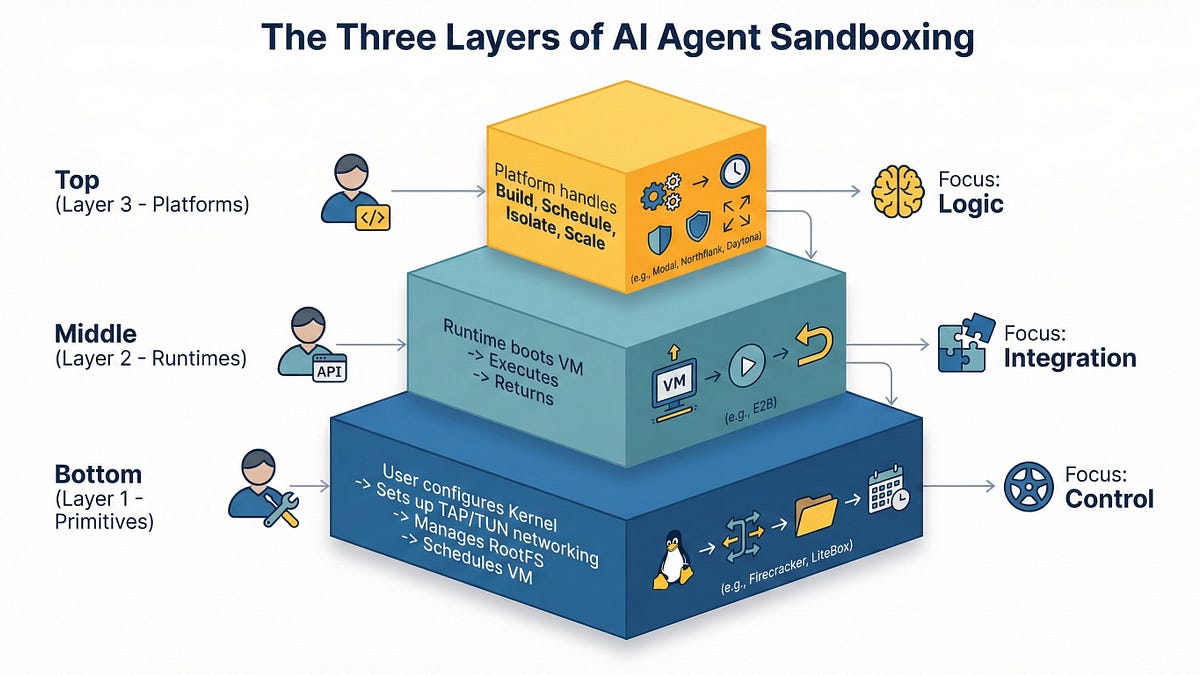

AI Agent Sandboxing: Navigating Primitives, Runtimes, and Platforms in 2026

THE GIST: In 2026, AI agent sandboxing requires careful selection between primitives, runtimes, and managed platforms due to the risks of executing untrusted code.