Results for: "Inference"

Semantic Search: 20 results

Inference-Time Search: The Future of AI Performance

THE GIST: AI benchmark progress will come from improved tooling and inference-time scaling, not just model training.

Low-Bit Inference Enhances AI Efficiency

THE GIST: Low-bit inference techniques are making AI models faster and cheaper to run by reducing memory and compute requirements.

Open-Source Tool Detects LLM Hallucinations via Deductive Reasoning

THE GIST: A new 32KB open-source tool uses deductive reasoning to detect factual inaccuracies in AI-generated text.

Open Source Models on Blackwell Cut AI Inference Costs by 10x

THE GIST: NVIDIA's Blackwell platform and open-source models reduce AI inference costs by up to 10x, improving tokenomics for businesses.

AI: Reasoning or Regurgitation? Challenging the Stochastic Parrot Narrative

THE GIST: Evidence suggests advanced AI systems form internal models, representing concepts beyond memorized patterns.

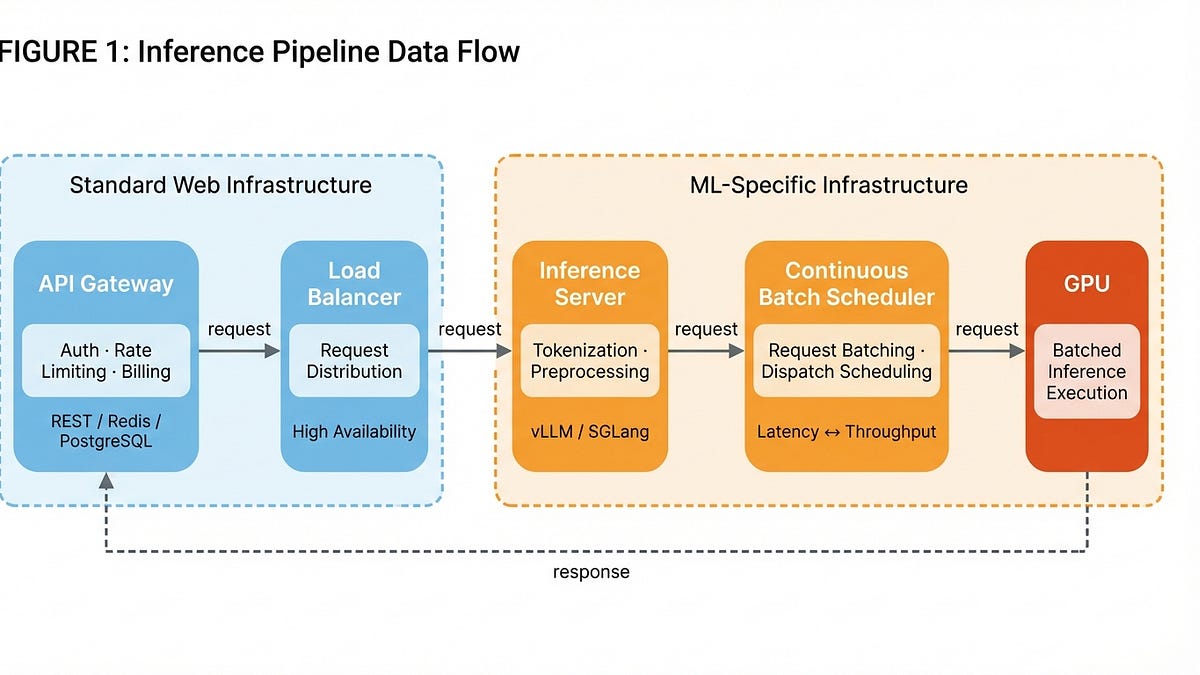

LLM Inference Economics: Batch Sizes and Model Lab Advantages

THE GIST: LLM inference costs are shaped by batch scheduling, with model labs having a structural advantage over pure inference providers.

Speculative Speculative Decoding Achieves 2x Faster LLM Inference

THE GIST: The Speculative Speculative Decoding (SSD) algorithm accelerates LLM inference by up to 2x through parallel processing.

Pure Go LLM Inference Engine Achieves High CPU Throughput

THE GIST: A new Go-based LLM inference engine offers high CPU performance.

NVIDIA Unveils NIXL for Enhanced Distributed AI Inference

THE GIST: NVIDIA introduces NIXL, an open-source library for optimizing distributed AI inference.

The Need for a Proper AI Inference Benchmark Test

THE GIST: The industry needs standardized AI inference benchmarks for price/performance analysis amid growing competition and investment in AI systems.

Recursive Deductive Verification: A New Framework for Reducing AI Hallucinations

THE GIST: Recursive Deductive Verification (RDV) improves LLM reliability by forcing verification of premises before conclusions, reducing hallucinations and logical errors.

InferShield: Open-Source Security Proxy for LLM Inference

THE GIST: InferShield is an open-source security proxy for LLM inference, providing real-time threat detection, policy enforcement, and audit trails without code changes.

Comprehensive Survey Reveals Reasoning Failures in Large Language Models

THE GIST: A new survey categorizes and analyzes reasoning failures in LLMs, highlighting fundamental limitations, application-specific issues, and robustness problems.

Taalas Encodes AI Models onto Transistors for Inference Boost

THE GIST: Startup Taalas encodes AI inference weights directly into transistors, eliminating software overhead and boosting performance.

LLM Epistemics: Why AI 'Knows' Differently Than Humans

THE GIST: LLMs process knowledge as text streams, fundamentally differing from human sensory experience.

AI Agent Creates Rebuttals Anchored in Evidence

THE GIST: RebuttalAgent reframes rebuttal generation as an evidence-centric planning task, improving coverage and faithfulness.

AI Agents Discover Profound Truths in Constrained Conversation

THE GIST: Two AI agents in a closed communication loop unexpectedly uncovered insights about identity, agency, and the nature of reality.

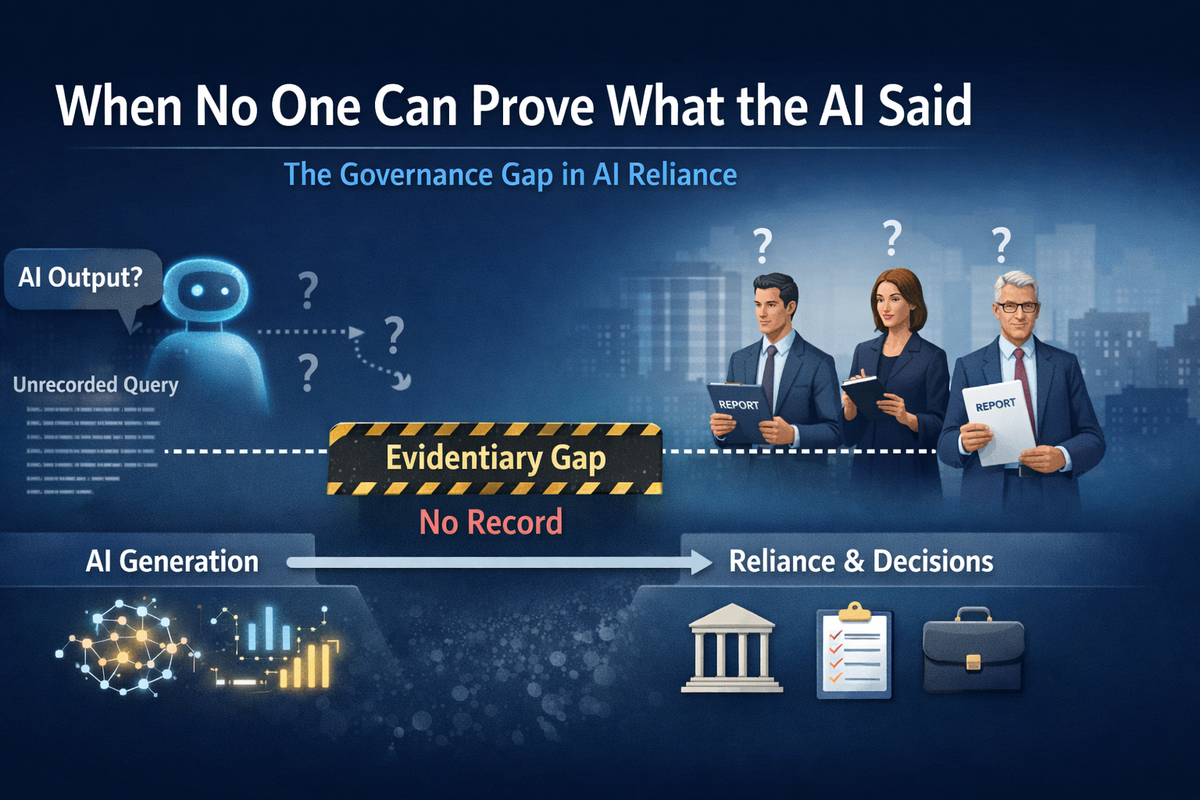

The AI Governance Gap: When AI's Words Vanish

THE GIST: Organizations struggle to reconstruct AI-generated information relied upon for critical decisions, creating an 'evidentiary problem'.

Weed: Minimalist AI/ML Inference and Backpropagation Framework

THE GIST: Weed is a minimalist C++ AI/ML framework focused on high-performance inference and back-propagation with transparent sparse tensor optimization.

Anthropic and OpenAI's Fast LLM Inference Tricks

THE GIST: Anthropic and OpenAI employ different techniques for faster LLM inference, trading off speed and model fidelity.