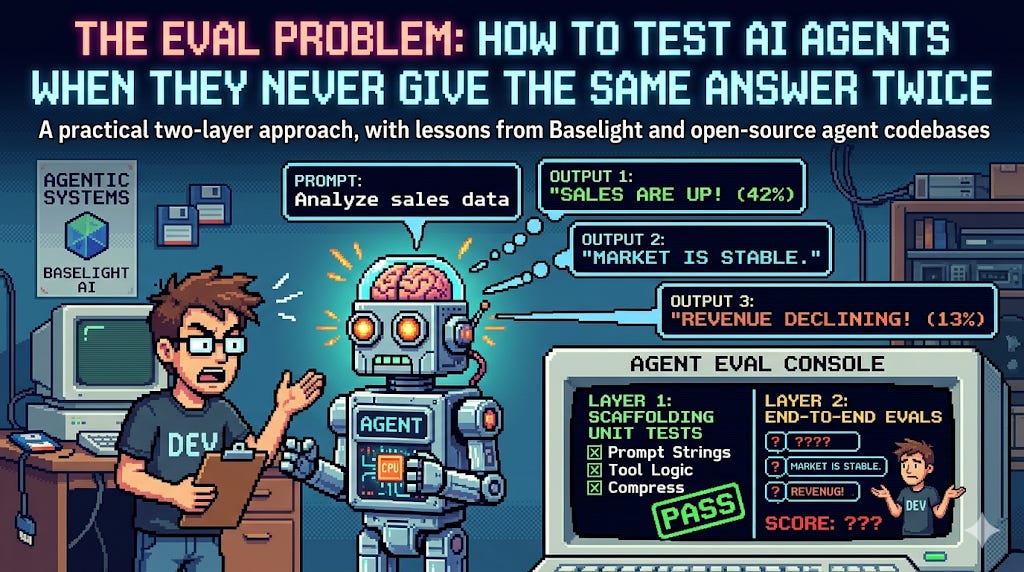

AI Agent Teams Boost Productivity, Tackle Complex Tasks

Sonic Intelligence

Specialized AI agents collaborate via protocols to solve complex tasks.

Explain Like I'm Five

"Imagine a group of super-smart robots, each really good at one thing, talking to each other to solve a big puzzle that no single robot could figure out alone. This makes work much faster and easier for everyone."

Deep Intelligence Analysis

The shift toward collaborative agent teams is underpinned by frameworks like AgentOps, which provide infrastructure for managing these agents. Because agents exchange information and coordinate their efforts, this approach directly addresses the limitations of monolithic AI models. Such distributed intelligence mirrors human team dynamics, but at machine speed and scale. Communication protocols are critical: they determine how efficiently and reliably agents interact, and therefore how well the overall system performs and how robust it is.
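To make the role of a communication protocol more concrete, here is a minimal sketch of agents exchanging structured messages through a shared in-memory bus. Everything in it (`Message`, `MessageBus`, the `ALLOWED_KINDS` set, the agent names) is hypothetical and chosen for illustration; it is not the AgentOps API or any specific framework's protocol.

```python
from collections import defaultdict, deque
from dataclasses import dataclass, field

# Hypothetical message kinds; a real protocol would define its own set.
ALLOWED_KINDS = {"request", "result", "error"}


@dataclass(frozen=True)
class Message:
    sender: str
    recipient: str
    kind: str
    payload: dict = field(default_factory=dict)


class MessageBus:
    """One in-memory mailbox per agent; stands in for a real transport."""

    def __init__(self):
        self._inboxes = defaultdict(deque)

    def send(self, message: Message) -> None:
        # Rejecting malformed messages early is one way a protocol
        # can contribute to reliability.
        if message.kind not in ALLOWED_KINDS:
            raise ValueError(f"unknown message kind: {message.kind!r}")
        self._inboxes[message.recipient].append(message)

    def receive(self, agent_name: str):
        """Return the oldest pending message for the agent, or None."""
        inbox = self._inboxes[agent_name]
        return inbox.popleft() if inbox else None


bus = MessageBus()
bus.send(Message("planner", "researcher", "request", {"topic": "pricing"}))
first = bus.receive("researcher")   # the planner's request
second = bus.receive("researcher")  # None, because the inbox is now empty
```

A fixed message shape and a closed set of message kinds are only one possible design; the point is that agreeing on structure up front lets each agent validate what it receives instead of guessing.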

Looking forward, the proliferation of such multi-agent systems will necessitate new approaches to AI governance, security, and human-AI interaction. The 'Eliza effect', the tendency to attribute human-like understanding to a computer program, is a reminder of the psychological implications of interacting with increasingly sophisticated AI. It underlines the need for clear boundaries and for distinguishing AI's functional role from perceived sentience. These agent teams are expected to tackle more ambitious challenges, from scientific discovery to complex logistical operations, but their success will depend on robust management frameworks and a clear understanding of their capabilities and limitations.

Transparency Statement: This analysis was generated by an AI model. All assertions are based solely on the provided source material.

Visual Intelligence

flowchart LR
  A["Define Complex Task"] --> B["Decompose Task"]
  B --> C["Assign to Specialized Agents"]
  C --> D["Agents Communicate"]
  D --> E["Coordinate Actions"]
  E --> F["Solve Subtasks"]
  F --> G["Aggregate Results"]
  G --> H["Task Completed"]

Auto-generated diagram · AI-interpreted flow
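The flow in the diagram can be sketched in a few lines of Python. This is a toy illustration under stated assumptions: the "agents" are plain functions, the names `Subtask`, `decompose`, `AGENTS`, and `run_team` are hypothetical, and the steps run sequentially, so the communication and coordination stages are reduced to a simple lookup.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Subtask:
    skill: str  # which specialist should handle this piece
    data: str   # the input handed to that specialist


def decompose(task: str) -> List[Subtask]:
    # Toy decomposition: each specialist works on the same text.
    return [Subtask("count_words", task), Subtask("shout", task)]


# Each hypothetical "agent" is a function specialized in one skill.
AGENTS: Dict[str, Callable[[str], str]] = {
    "count_words": lambda text: str(len(text.split())),
    "shout": lambda text: text.upper(),
}


def run_team(task: str) -> Dict[str, str]:
    """Decompose the task, assign subtasks by skill, aggregate results."""
    results = {}
    for subtask in decompose(task):
        agent = AGENTS[subtask.skill]                  # assign
        results[subtask.skill] = agent(subtask.data)   # solve
    return results                                     # aggregate


report = run_team("agents solve puzzles together")
# report == {"count_words": "4", "shout": "AGENTS SOLVE PUZZLES TOGETHER"}
```

In a real system the agents would typically be separate models or services, and the decomposition itself might be done by a planning agent rather than a fixed function.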

Impact Assessment

The emergence of collaborative AI agent teams represents a significant leap in AI's problem-solving capabilities. This paradigm shift from single-agent systems to networked intelligence allows for the tackling of previously intractable problems, promising substantial productivity gains and workflow automation across industries.

Key Details

- Teams of specialized AI agents work together.

- Agents use communication protocols for task resolution.

- This approach solves complex tasks that would overwhelm a single agent.

- Frameworks like AgentOps manage AI agents.

Optimistic Outlook

The development of specialized, communicating AI agent teams could unlock unprecedented levels of automation and efficiency. By distributing complex problems among multiple agents, businesses can achieve higher productivity, scale AI solutions more effectively, and innovate faster, leading to new services and operational models.

Pessimistic Outlook

Although AI agent teams are powerful, managing them introduces new challenges in oversight, debugging, and ethical alignment. The 'Eliza effect' highlights the risk of over-reliance or emotional attachment, which could obscure critical human judgment and accountability in increasingly autonomous systems.
