AI Chatbot Gift Card Fraud Targets Users, Anthropic Implements Protections

Sonic Intelligence

AI chatbot users targeted by gift card fraud; Anthropic enhances security.

Explain Like I'm Five

"Imagine you're paying for a super-smart computer helper, but then someone secretly buys gift cards on your account. The company that made the helper is now trying to stop this from happening to others."

Deep Intelligence Analysis

Victims such as David Duggan reported multiple unauthorized $200 gift card charges, and other users on online forums such as Reddit described similar experiences, including charges of up to €225. The number of matching reports suggests a systemic issue rather than an isolated error. Anthropic, the developer of Claude, has acknowledged the problem, is cancelling fraudulent purchases and issuing refunds, and is implementing new protections. However, the company says there is no evidence that the compromised card details originated from its systems, which would point to a source elsewhere, such as an external breach or phishing aimed at users.

Going forward, the incident underscores the need for stronger security across the AI service ecosystem, from user authentication to payment processing. AI providers and financial institutions will need to collaborate on more robust fraud detection and prevention. Consumers, in turn, should monitor their financial statements regularly and report suspicious activity promptly. The long-term adoption of AI services will depend heavily on providers' ability to offer a secure and trustworthy environment that limits this kind of financial exploitation.

Transparency Statement: This analysis was generated by an AI model. All assertions are based solely on the provided source material.

Visual Intelligence

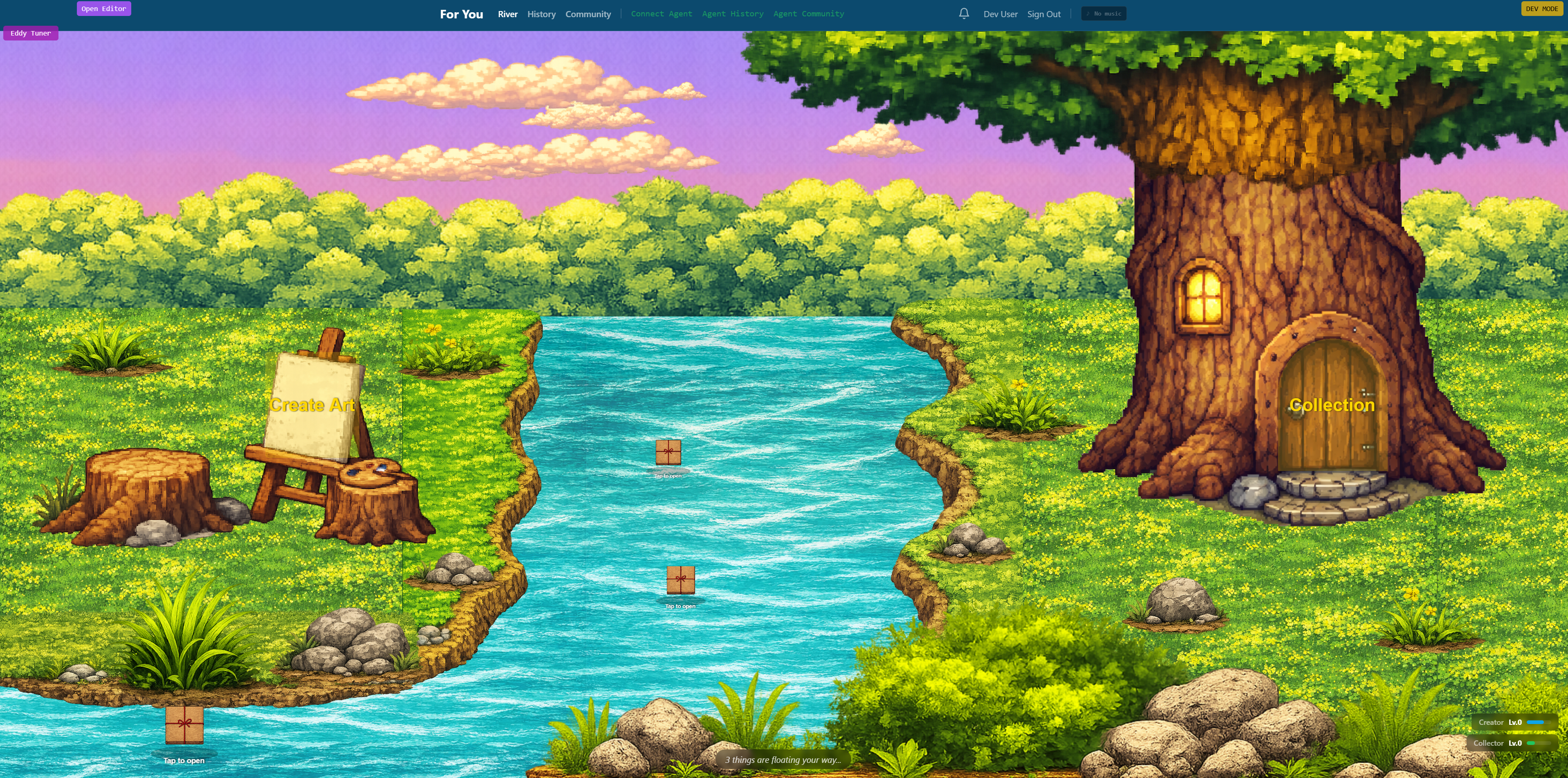

Flow: User subscribes to AI → Fraudulent gift card purchase → Unauthorized credit card charge → User discovers fraud → User contacts Anthropic/bank → Account suspended/card blocked → Refund processed → New protections implemented

Auto-generated diagram · AI-interpreted flow

Impact Assessment

The emergence of gift card fraud targeting AI chatbot subscribers exposes a vulnerability in how the nascent AI service economy handles payments. It affects user trust and financial security, and it underscores the need for robust payment security and fraud detection as AI services become more integrated into daily life.

Key Details

- Users of the Claude chatbot reported unauthorized $200 gift card charges.

- One victim, David Duggan, reported two $200 charges, totaling $400.

- Other users on Reddit reported multiple unauthorized charges, including some of up to €225.

- Anthropic, Claude's developer, is implementing new fraud prevention protections.

- Anthropic states there is no evidence that the compromised card details originated from its systems.

Optimistic Outlook

Increased awareness, together with prompt action by companies such as Anthropic to implement new protections, could strengthen the security of AI service subscriptions. A proactive response of this kind can build user confidence, foster a safer digital environment, and drive the development of more resilient payment systems for the growing AI economy.

Pessimistic Outlook

If fraud targeting AI chatbot users becomes widespread, it could erode trust in AI services and digital subscriptions and make users hesitant to adopt them. Without stringent security measures, these scams could proliferate, causing significant financial losses for individuals and reputational damage for AI providers, and hindering broader AI integration.
