AI Agents Automate GPU Kernel Translation Between Python and Julia

Sonic Intelligence

AI agents are automating GPU kernel translation between cuTile Python and Julia.

Explain Like I'm Five

"Imagine you have a recipe written in one secret language, and your friend has to cook it using a different secret language. This new computer brain helper can quickly change the recipe from one language to the other without mistakes, so your friend can cook faster!"

Deep Intelligence Analysis

Translating kernels between domain-specific languages (DSLs) is technically demanding, even when the two languages share high-level abstractions. Subtle differences in indexing (0-based vs. 1-based), broadcasting syntax, and memory layout (row-major vs. column-major) can cause "silent data corruption" rather than compiler errors, costing developers hours of debugging. The described AI workflow is packaged as an LLM skill in TileGym. It systematizes the process by encoding this translation knowledge so the agent can produce validated Julia kernels in a single pass. This skill-driven approach reduces the risk of such semantic traps, which are notoriously hard to debug and can undermine the reliability of high-performance code.
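The traps named above can be made concrete with a small emulation. The following is a minimal pure-Python sketch. It is not cuTile or cuTile.jl code and is not taken from TileGym, and every helper name in it is hypothetical. It assumes a dense 2-D matrix stored in a flat buffer and emulates Python-style (0-based, row-major) and Julia-style (1-based, column-major) addressing side by side. The broadcasting contrast uses NumPy's and Julia's standard array semantics; cuTile's own tile-broadcasting rules are not modeled here and may differ. In each mistranslation, the code returns a plausible value instead of raising an error.

```python
# Hypothetical helpers: pure-Python stand-ins, not cuTile or cuTile.jl APIs.

def row_major_offset(i, j, ncols):
    """Flat offset of 0-based (i, j) in a row-major buffer (Python/C style)."""
    return i * ncols + j


def col_major_offset(i, j, nrows):
    """Flat offset of 1-based (i, j) in a column-major buffer (Julia style),
    returned as a 0-based position so it can index a Python list."""
    return (i - 1) + (j - 1) * nrows


def to_row_major(m):
    """Flatten a nested-list matrix row by row."""
    return [x for row in m for x in row]


def to_col_major(m):
    """Flatten a nested-list matrix column by column."""
    nrows, ncols = len(m), len(m[0])
    return [m[i][j] for j in range(ncols) for i in range(nrows)]


def numpy_style_add(a, v):
    """NumPy rule: a 1-D vector aligns with the last axis, so v[j] is added to column j."""
    return [[a[i][j] + v[j] for j in range(len(a[0]))] for i in range(len(a))]


def julia_style_add(a, v):
    """Julia `A .+ v`: a Vector is a column, so v[i] is added to row i."""
    return [[a[i][j] + v[i] for j in range(len(a[0]))] for i in range(len(a))]


A = [[1, 2, 3],
     [4, 5, 6],
     [7, 8, 9]]
n = 3
rm = to_row_major(A)   # [1, 2, 3, 4, 5, 6, 7, 8, 9]
cm = to_col_major(A)   # [1, 4, 7, 2, 5, 8, 3, 6, 9]

# Trap 1 (layout): reusing the row-major formula on a column-major buffer.
correct = rm[row_major_offset(0, 1, n)]        # A[0][1] == 2
wrong_layout = cm[row_major_offset(0, 1, n)]   # silently reads A[1][0] == 4

# Trap 2 (indexing): passing 0-based (1, 2) straight into 1-based code.
fixed_shift = cm[col_major_offset(1 + 1, 2 + 1, n)]  # A[1][2] == 6
forgot_shift = cm[col_major_offset(1, 2, n)]         # silently reads A[0][1] == 2

# Trap 3 (broadcasting): the same-looking addition runs along a different
# axis, and a square matrix hides the mismatch because no shape error occurs.
v = [10, 20, 30]
print(numpy_style_add(A, v))  # [[11, 22, 33], [14, 25, 36], [17, 28, 39]]
print(julia_style_add(A, v))  # [[11, 12, 13], [24, 25, 26], [37, 38, 39]]
```

Real GPU code can also go out of bounds when these conversions are wrong. The in-bounds cases shown here are the dangerous ones, though. Every access is legal, so nothing fails until someone checks the numbers.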

If the approach holds up, the implications for development efficiency and for broader GPU adoption are significant. By reducing the friction of porting optimized code, AI-assisted translation could make a large body of battle-tested kernels available in new language environments, encouraging collaboration and code reuse. That could accelerate research and development wherever GPU computing is critical, potentially leading to faster scientific discovery and more efficient AI model training. The robustness of these translation agents will be paramount, however. Ensuring that they handle edge cases and evolving language features without introducing new errors will be an ongoing challenge.

Visual Intelligence

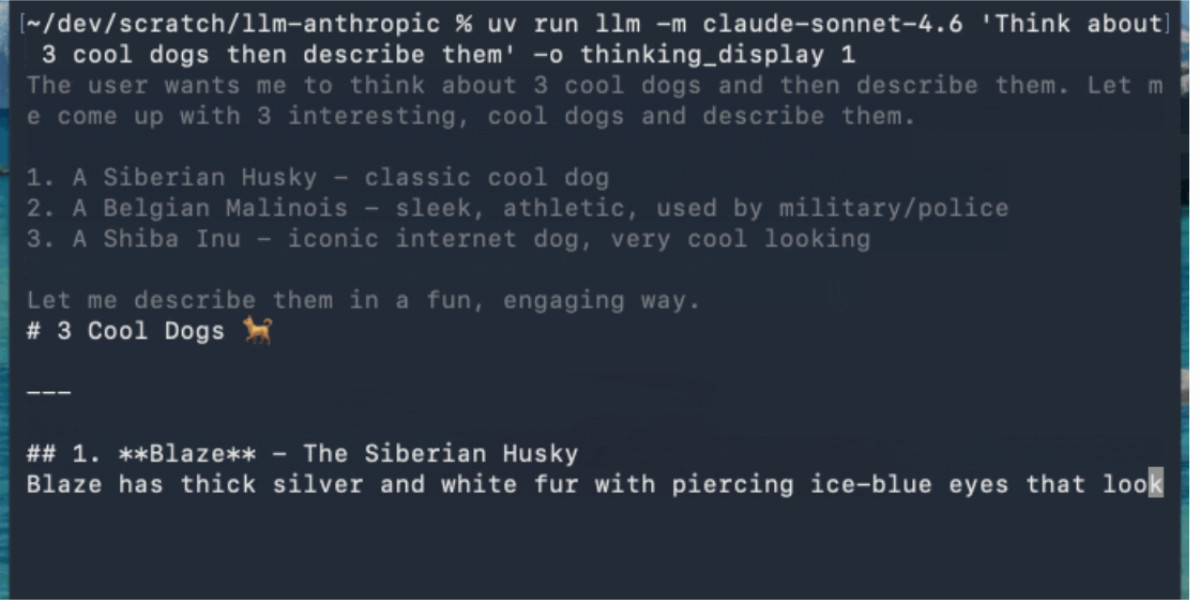

flowchart LR

A["cuTile Python Kernel"] --> B["AI Agent (TileGym Skill)"]

B --> C["Semantic Analysis"]

C --> D["Language Transformation"]

D --> E["Validated cuTile.jl Kernel"]

Auto-generated diagram · AI-interpreted flow

Impact Assessment

Automating cross-DSL kernel translation with AI agents can substantially reduce development time and error rates in high-performance computing. It also widens access to optimized GPU kernels for other programming ecosystems such as Julia, which could accelerate scientific computing and AI research.

Key Details

- cuTile is an NVIDIA tile-based programming model for GPU kernels.

- cuTile.jl brings this model to the Julia language, letting developers write custom GPU kernels without CUDA C++.

- AI agents are used to translate cuTile Python kernels to cuTile.jl.

- The translation process addresses semantic differences such as 0-based vs. 1-based indexing, broadcasting syntax, and row-major vs. column-major memory layout.

- A skill-driven AI workflow in TileGym produces validated Julia kernels in a single pass.
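The article does not describe how TileGym validates a translated kernel. The sketch below is a hedged illustration that assumes "validated" means differential testing: the original and translated kernels run on the same random inputs, and their outputs are compared within a tolerance. Plain Python functions stand in for the two kernels. Every name (`validate`, `row_sums_ported`, `row_sums_buggy`, and so on) is hypothetical.

```python
import math
import random


def validate(reference, candidate, make_input, trials=20,
             rel_tol=1e-6, abs_tol=1e-9, seed=0):
    """Hypothetical differential check: run both kernels on identical random
    inputs and compare outputs element-wise. Returns (ok, first_failure)."""
    rng = random.Random(seed)
    for t in range(trials):
        x = make_input(rng)
        expected, got = reference(x), candidate(x)
        if len(expected) != len(got):
            return False, (t, "length mismatch", len(expected), len(got))
        for k, (e, g) in enumerate(zip(expected, got)):
            if not math.isclose(e, g, rel_tol=rel_tol, abs_tol=abs_tol):
                return False, (t, k, e, g)
    return True, None


def make_square_matrix(rng, n=4):
    """Random n x n test input as a nested list."""
    return [[rng.uniform(-1.0, 1.0) for _ in range(n)] for _ in range(n)]


def row_sums_reference(m):
    # Stand-in for the original kernel: 0-based indices, row-major buffer.
    n = len(m)
    buf = [x for row in m for x in row]
    return [sum(buf[i * n + j] for j in range(n)) for i in range(n)]


def row_sums_ported(m):
    # Faithful port: 1-based indices into a column-major buffer.
    n = len(m)
    buf = [m[i][j] for j in range(n) for i in range(n)]
    return [sum(buf[(i - 1) + (j - 1) * n] for j in range(1, n + 1))
            for i in range(1, n + 1)]


def row_sums_buggy(m):
    # Mistranslation: column-major buffer read with the row-major formula,
    # which quietly computes column sums instead of row sums.
    n = len(m)
    buf = [m[i][j] for j in range(n) for i in range(n)]
    return [sum(buf[i * n + j] for j in range(n)) for i in range(n)]


print(validate(row_sums_reference, row_sums_ported, make_square_matrix)[0])  # True
print(validate(row_sums_reference, row_sums_buggy, make_square_matrix)[0])   # False
```

The buggy port never raises an exception and returns a list of the right length, so it would pass a compile-and-run check. Only the numerical comparison against a trusted reference exposes it. That is why this kind of check matters more than a clean compile for catching silent translation errors.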

Optimistic Outlook

This AI-driven translation method will accelerate the adoption of GPU-accelerated computing across various scientific and engineering domains. It fosters greater interoperability between programming languages and allows developers to leverage existing optimized libraries without extensive manual porting efforts.

Pessimistic Outlook

Over-reliance on AI for complex kernel translation could introduce subtle, hard-to-debug errors if the AI models are not rigorously trained and validated. The "silent data corruption" risk highlighted in the article underscores the potential for critical failures in sensitive scientific or financial applications.
