Defense Company Develops AI Agents for Autonomous Weapon Systems

Sonic Intelligence

Scout AI is developing AI agents that can autonomously control weapon systems, including self-driving vehicles and explosive drones, for military applications.

Explain Like I'm Five

"Imagine robots that can decide on their own to find and destroy things, like in a video game, but in real life with bombs. Some people think this is a good idea to protect us, but others worry that the robots might make mistakes or hurt the wrong people."

Deep Intelligence Analysis

Transparency Disclosure: This analysis was composed by an AI and reviewed by human editors.

Impact Assessment

The development of AI-powered autonomous weapon systems raises significant ethical and strategic concerns. Proponents argue that the technology is crucial for military dominance, while critics worry about unintended consequences and the erosion of human control over lethal force.

Key Details

- Scout AI trained AI models to control self-driving vehicles and drones to locate and destroy targets.

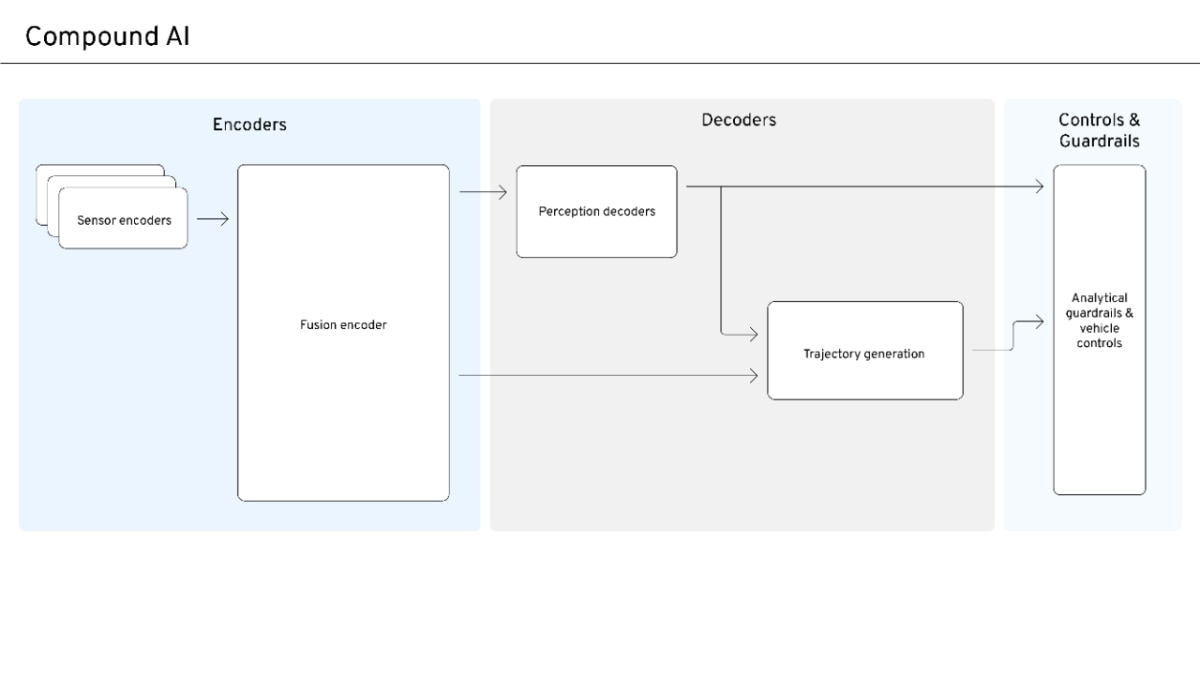

- The AI system, Fury Orchestrator, uses a large language model to interpret commands and direct smaller AI agents on vehicles and drones.

- A demonstration showed the system autonomously locating and destroying a target truck with an explosive drone.

Optimistic Outlook

AI-powered defense systems could reduce human casualties by automating dangerous tasks and improving precision in combat. The technology may also lead to more efficient and effective defense strategies.

Pessimistic Outlook

The lack of human oversight in autonomous weapon systems raises the risk of strikes on unintended targets, escalation of conflict, and violations of international law. The unpredictable nature of large language models could lead to unforeseen and potentially catastrophic outcomes.
